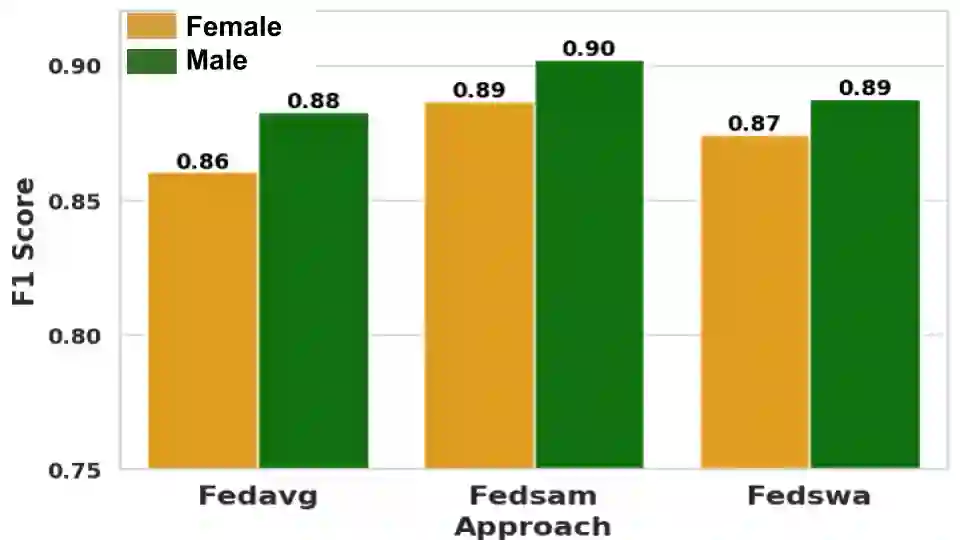

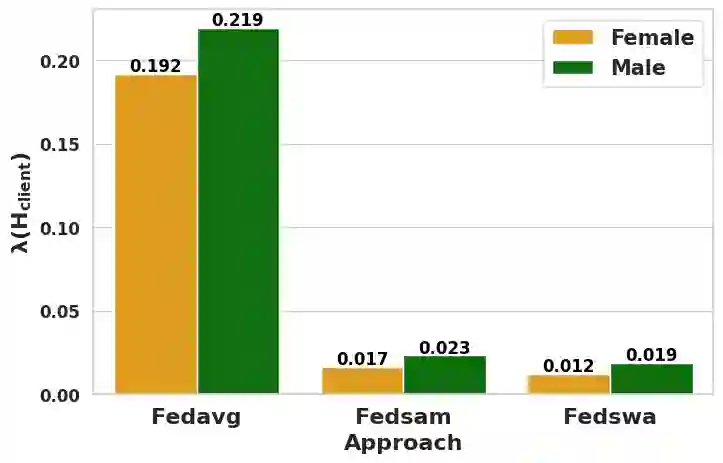

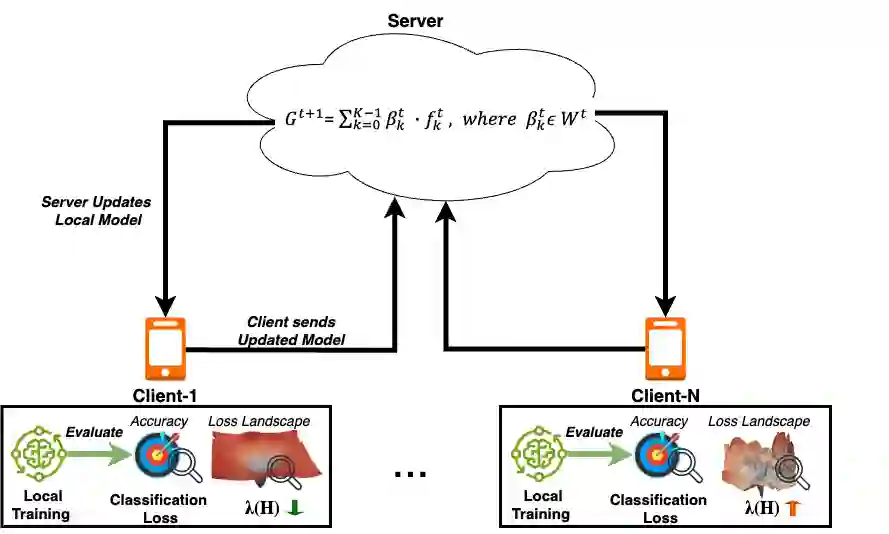

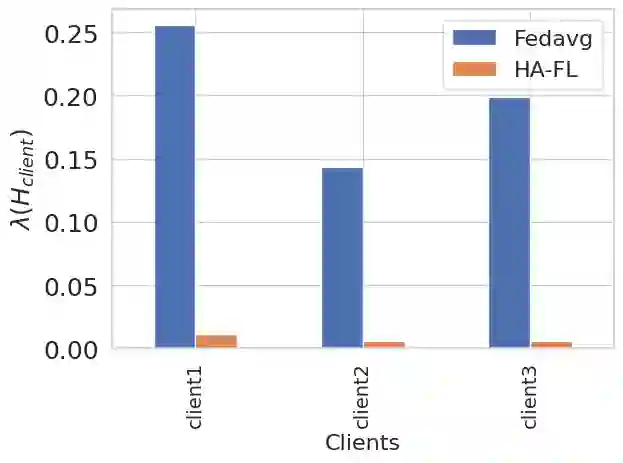

Federated learning (FL) enables collaborative model training while preserving data privacy, making it suitable for decentralized human-centered AI applications. However, a significant research gap remains in ensuring fairness in these systems. Current fairness strategies in FL require knowledge of bias-creating/sensitive attributes, clashing with FL's privacy principles. Moreover, in human-centered datasets, sensitive attributes may remain latent. To tackle these challenges, we present a novel bias mitigation approach inspired by "Fairness without Demographics" in machine learning. The presented approach achieves fairness without needing knowledge of sensitive attributes by minimizing the top eigenvalue of the Hessian matrix during training, ensuring equitable loss landscapes across FL participants. Notably, we introduce a novel FL aggregation scheme that promotes participating models based on error rates and loss landscape curvature attributes, fostering fairness across the FL system. This work represents the first approach to attaining "Fairness without Demographics" in human-centered FL. Through comprehensive evaluation, our approach demonstrates effectiveness in balancing fairness and efficacy across various real-world applications, FL setups, and scenarios involving single and multiple bias-inducing factors, representing a significant advancement in human-centered FL.
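The two quantities the abstract relies on, the top eigenvalue of the loss Hessian and a per-client aggregation weight, can be illustrated with a minimal sketch. The abstract gives no formulas, so everything below is an assumption made for illustration: the toy quadratic loss, the finite-difference Hessian-vector product, the use of power iteration, and in particular the hypothetical `aggregation_weights` rule (a softmax over the negated sum of error rate and curvature). The sketch only estimates the top eigenvalue; it does not reproduce the training procedure that minimizes it.

```python
import math

def grad_quadratic(A, w):
    """Gradient of the toy loss 0.5 * w^T A w, which is A @ w (A symmetric)."""
    return [sum(a * x for a, x in zip(row, w)) for row in A]

def hessian_vector_product(grad_fn, w, v, eps=1e-4):
    """Central finite-difference approximation of H @ v at the point w."""
    w_plus = [wi + eps * vi for wi, vi in zip(w, v)]
    w_minus = [wi - eps * vi for wi, vi in zip(w, v)]
    g_plus, g_minus = grad_fn(w_plus), grad_fn(w_minus)
    return [(gp - gm) / (2 * eps) for gp, gm in zip(g_plus, g_minus)]

def top_hessian_eigenvalue(grad_fn, w, iters=100):
    """Power iteration: converges to the eigenvalue of largest magnitude,
    provided the start vector is not orthogonal to its eigenvector."""
    n = len(w)
    v = [1.0 / math.sqrt(n)] * n  # unit-norm start vector
    eig = 0.0
    for _ in range(iters):
        hv = hessian_vector_product(grad_fn, w, v)
        eig = sum(h * x for h, x in zip(hv, v))  # Rayleigh quotient v^T H v
        norm = math.sqrt(sum(h * h for h in hv))
        if norm == 0.0:
            break
        v = [h / norm for h in hv]
    return eig

def aggregation_weights(error_rates, top_eigs, temperature=1.0):
    """Hypothetical rule (not from the paper): clients with lower error and
    flatter loss landscapes receive larger weights via a softmax."""
    scores = [-(e + lam) / temperature for e, lam in zip(error_rates, top_eigs)]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [x / total for x in exps]

# Two toy "clients" whose quadratic losses have different curvature.
client_hessians = [
    [[2.0, 0.0], [0.0, 1.0]],  # flatter landscape
    [[2.0, 0.0], [0.0, 5.0]],  # sharper landscape
]
w = [1.0, 1.0]
eigs = [
    top_hessian_eigenvalue(lambda p, A=A: grad_quadratic(A, p), w)
    for A in client_hessians
]
weights = aggregation_weights(error_rates=[0.10, 0.30], top_eigs=eigs)
```

In a real FL setup the gradient function would come from each client's model and local data, and the Hessian-vector product would normally be computed with automatic differentiation rather than finite differences. The softmax weighting is only one plausible way to combine error rate and curvature into a weight.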
