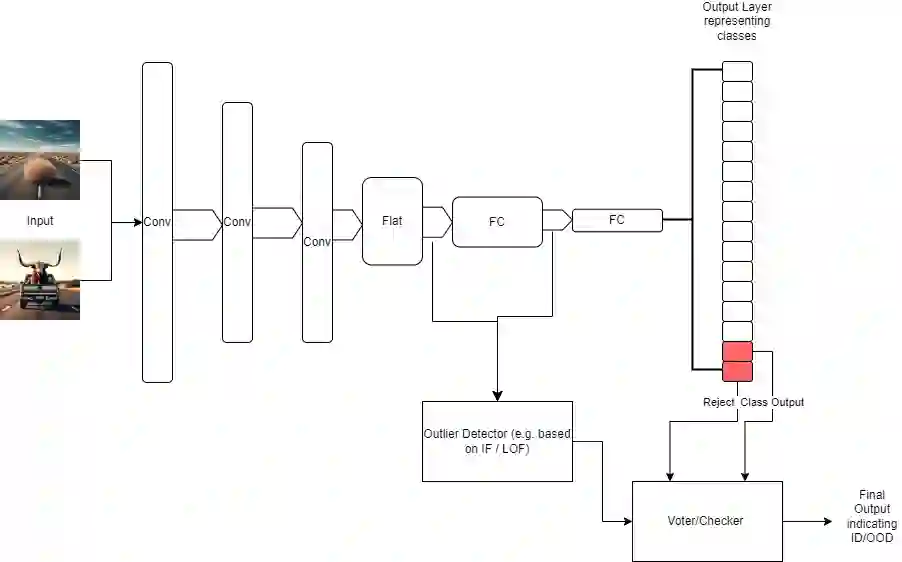

This paper explores the role and challenges of Artificial Intelligence (AI) algorithms, specifically AI-based software elements, in autonomous driving systems. These AI systems are fundamental to executing real-time critical functions in complex, high-dimensional environments. They handle vital tasks such as multi-modal perception and cognition, as well as decision-making tasks such as motion planning, lane keeping, and emergency braking. A primary concern is the ability (and necessity) of AI models to generalize beyond their initial training data. This generalization issue becomes evident in real-time scenarios, where models frequently encounter inputs that are not represented in their training or validation data. In such cases, AI systems must still function effectively despite distributional or domain shifts. This paper investigates the risks associated with overconfident AI models in safety-critical applications such as autonomous driving. To mitigate these risks, it proposes methods for training AI models that maintain performance without becoming overconfident, namely implementing certainty-reporting architectures and ensuring diverse training data. Although various distribution-based methods exist to provide safety mechanisms for AI models, they have not been systematically assessed, especially in the context of safety-critical automotive applications. Moreover, many methods in the literature are poorly suited to the fast response times required in safety-critical edge applications. This paper reviews these methods, discusses their suitability for safety-critical applications, and highlights their strengths and limitations. It also proposes potential improvements to enhance the safety and reliability of AI algorithms in autonomous vehicles, where decisions must be both rapid and accurate.
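The abstract refers to certainty reporting and distribution-based safety mechanisms without committing to a particular method. As a minimal sketch, assuming a classifier that outputs raw logits, the snippet below uses the maximum softmax probability (MSP) baseline as a stand-in for such a mechanism. It also uses expected calibration error (ECE), one common way to quantify overconfidence. Neither technique is claimed to be the method proposed in this paper. The function names, the 0.9 acceptance threshold, and the 10-bin setting are illustrative assumptions.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a single vector of class logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def msp_check(logits: Sequence[float], threshold: float = 0.9) -> Tuple[int, float, bool]:
    """Return (predicted class, max softmax probability, accept flag).

    The threshold is a hypothetical value. A False flag marks a low-confidence,
    possibly out-of-distribution input that a system could route to a fallback.
    """
    probs = softmax(logits)
    conf = max(probs)
    pred = probs.index(conf)
    return pred, conf, conf >= threshold


def expected_calibration_error(confidences: Sequence[float],
                               predictions: Sequence[int],
                               labels: Sequence[int],
                               n_bins: int = 10) -> float:
    """Weighted average |confidence - accuracy| over equal-width confidence bins.

    A model whose confidence exceeds its accuracy (overconfidence) yields a large ECE.
    """
    n = len(confidences)
    ece = 0.0
    for b in range(n_bins):
        lo, hi = b / n_bins, (b + 1) / n_bins
        # Bins are (lo, hi]; a confidence of exactly 0.0 goes into the first bin.
        idx = [i for i, c in enumerate(confidences)
               if lo < c <= hi or (b == 0 and c == 0.0)]
        if not idx:
            continue
        acc = sum(predictions[i] == labels[i] for i in idx) / len(idx)
        avg_conf = sum(confidences[i] for i in idx) / len(idx)
        ece += len(idx) / n * abs(avg_conf - acc)
    return ece


if __name__ == "__main__":
    print(msp_check([4.0, 0.5, 0.2]))  # confident prediction, accepted
    print(msp_check([1.0, 0.9, 0.8]))  # near-uniform output, flagged
    # Four predictions at 95% confidence, only one correct: strongly overconfident.
    print(expected_calibration_error([0.95] * 4, [0, 0, 0, 0], [0, 1, 1, 1]))
```

Because MSP reuses the output of a single forward pass, it adds negligible latency, which makes it a common baseline for edge deployments. Its known weakness is that networks can still assign high softmax confidence to out-of-distribution inputs, which is exactly the overconfidence problem the abstract describes.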
