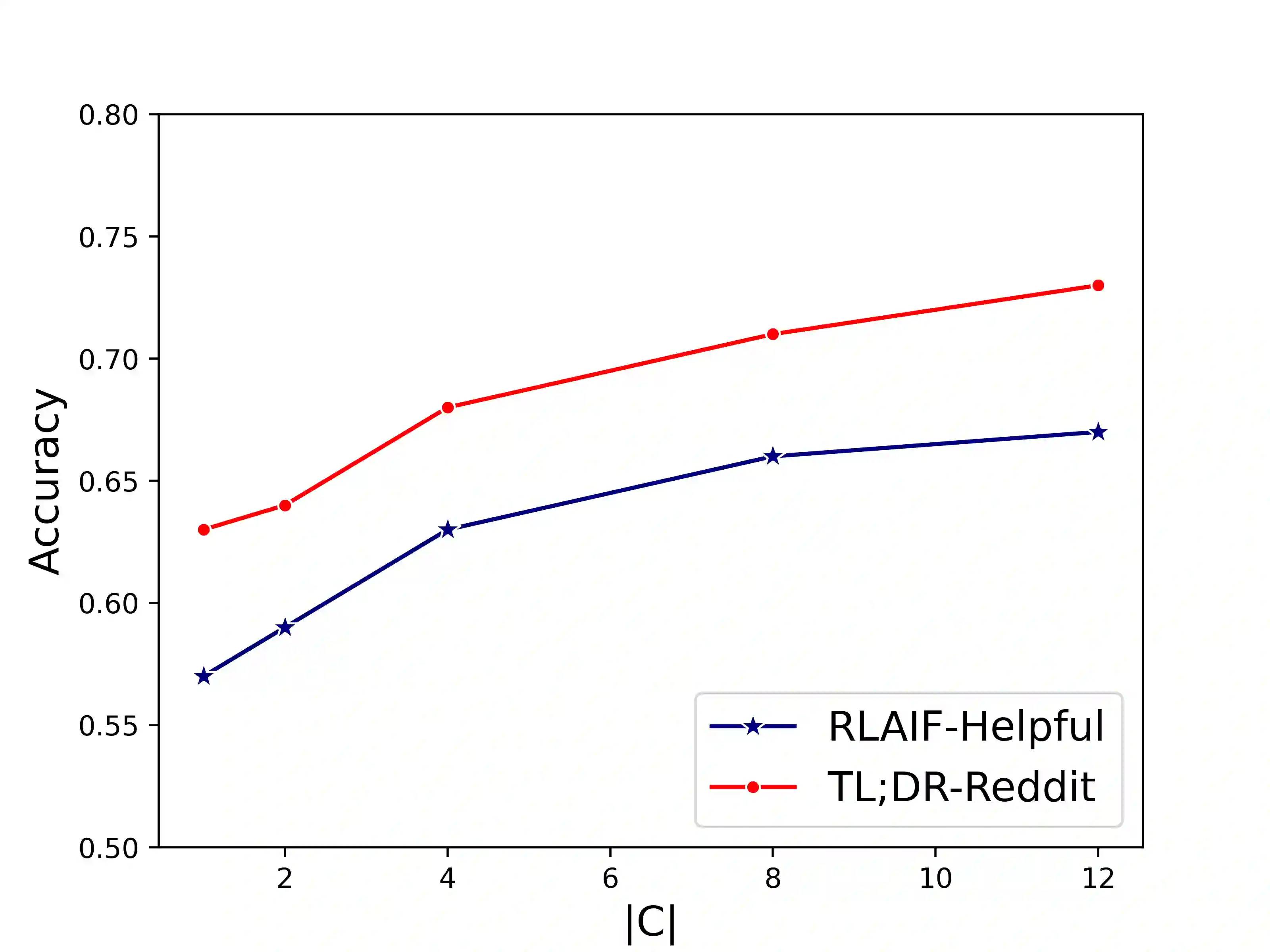

Comparative reasoning plays a crucial role in text preference prediction; however, large language models (LLMs) often demonstrate inconsistencies in their reasoning. While approaches like Chain-of-Thought improve accuracy in many other settings, they struggle to consistently distinguish the similarities and differences of complex texts. We introduce SC, a prompting approach that predicts text preferences by generating structured intermediate comparisons. SC begins by proposing aspects of comparison, followed by generating textual comparisons under each aspect. We select consistent comparisons with a pairwise consistency comparator that ensures each aspect's comparisons clearly distinguish differences between texts, significantly reducing hallucination and improving consistency. Our comprehensive evaluations across various NLP tasks, including summarization, retrieval, and automatic rating, demonstrate that SC equips LLMs to achieve state-of-the-art performance in text preference prediction.
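The pipeline described above (propose aspects, generate comparisons under each aspect, keep the consistent ones, then predict a preference) can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `llm` is a hypothetical prompt-in, text-out callable, the prompt wording is invented, and the selection rule (keep the candidate that agrees with the most other sampled candidates under a caller-supplied pairwise `agree` function) is a simple stand-in for the pairwise consistency comparator, whose details the abstract does not give.

```python
from itertools import combinations
from typing import Callable, Dict, List

# Hypothetical interfaces (assumptions, not from the paper):
LLM = Callable[[str], str]          # prompt in, completion out
Agree = Callable[[str, str], bool]  # pairwise check: are two comparisons consistent?


def propose_aspects(llm: LLM, text_a: str, text_b: str, k: int = 3) -> List[str]:
    """Ask the model for up to k aspects of comparison, one per line."""
    reply = llm(
        f"List {k} aspects for comparing the two texts, one per line.\n"
        f"A: {text_a}\nB: {text_b}"
    )
    return [line.strip() for line in reply.splitlines() if line.strip()][:k]


def sample_comparisons(
    llm: LLM, aspect: str, text_a: str, text_b: str, n: int = 3
) -> List[str]:
    """Sample n candidate textual comparisons for one aspect (n >= 1)."""
    prompt = f"Compare texts A and B on '{aspect}'.\nA: {text_a}\nB: {text_b}"
    return [llm(prompt) for _ in range(n)]


def select_consistent(candidates: List[str], agree: Agree) -> str:
    """Return the candidate that agrees with the most other candidates.

    Stand-in selection rule; ties go to the earliest candidate.
    """
    scores = [0] * len(candidates)
    for i, j in combinations(range(len(candidates)), 2):
        if agree(candidates[i], candidates[j]):
            scores[i] += 1
            scores[j] += 1
    best = max(range(len(candidates)), key=scores.__getitem__)
    return candidates[best]


def predict_preference(llm: LLM, agree: Agree, text_a: str, text_b: str) -> str:
    """Build per-aspect comparisons, then ask the model for a final preference."""
    chosen: Dict[str, str] = {}
    for aspect in propose_aspects(llm, text_a, text_b):
        candidates = sample_comparisons(llm, aspect, text_a, text_b)
        chosen[aspect] = select_consistent(candidates, agree)
    summary = "\n".join(f"{aspect}: {comp}" for aspect, comp in chosen.items())
    return llm(f"Given these comparisons, answer A or B.\n{summary}").strip()
```

In practice `agree` could itself be an LLM call or an entailment check; it is left as a parameter here because the abstract does not specify how consistency is judged.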
