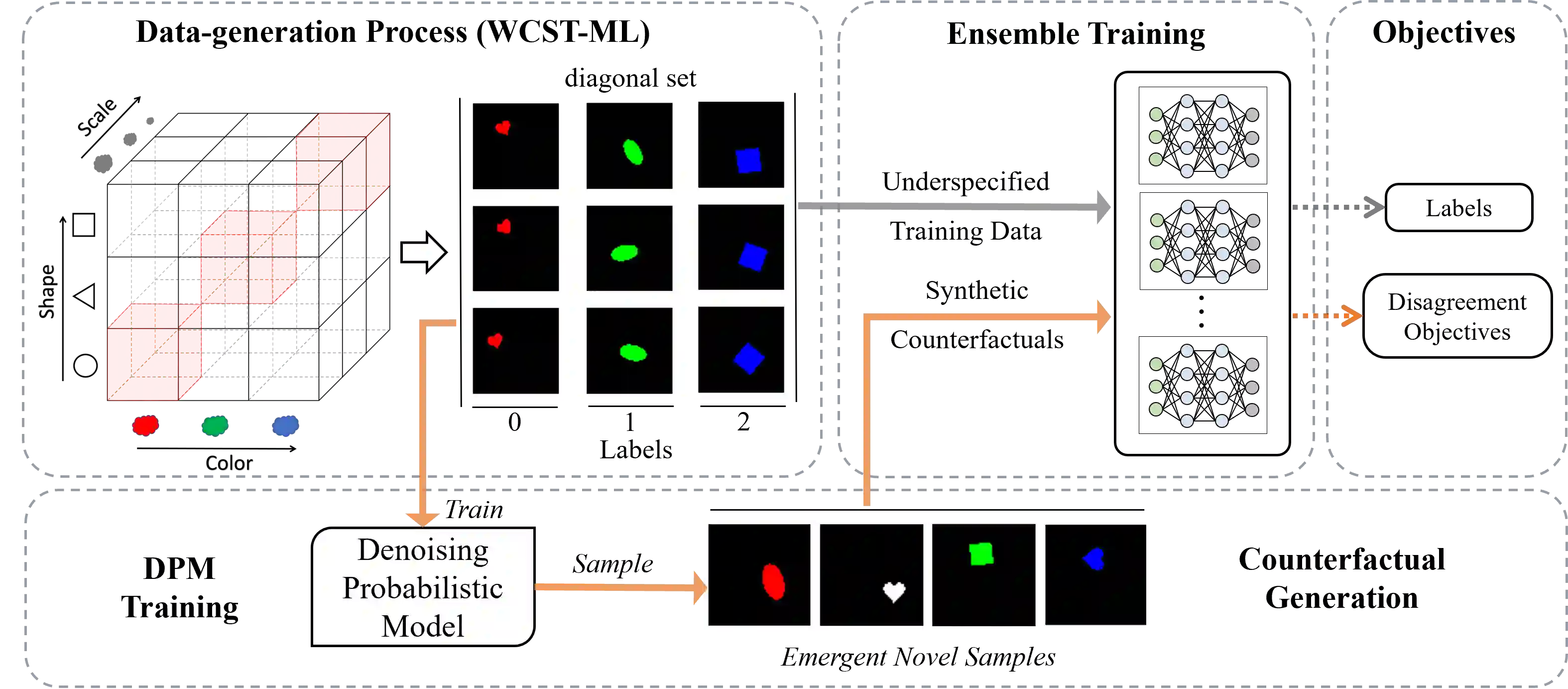

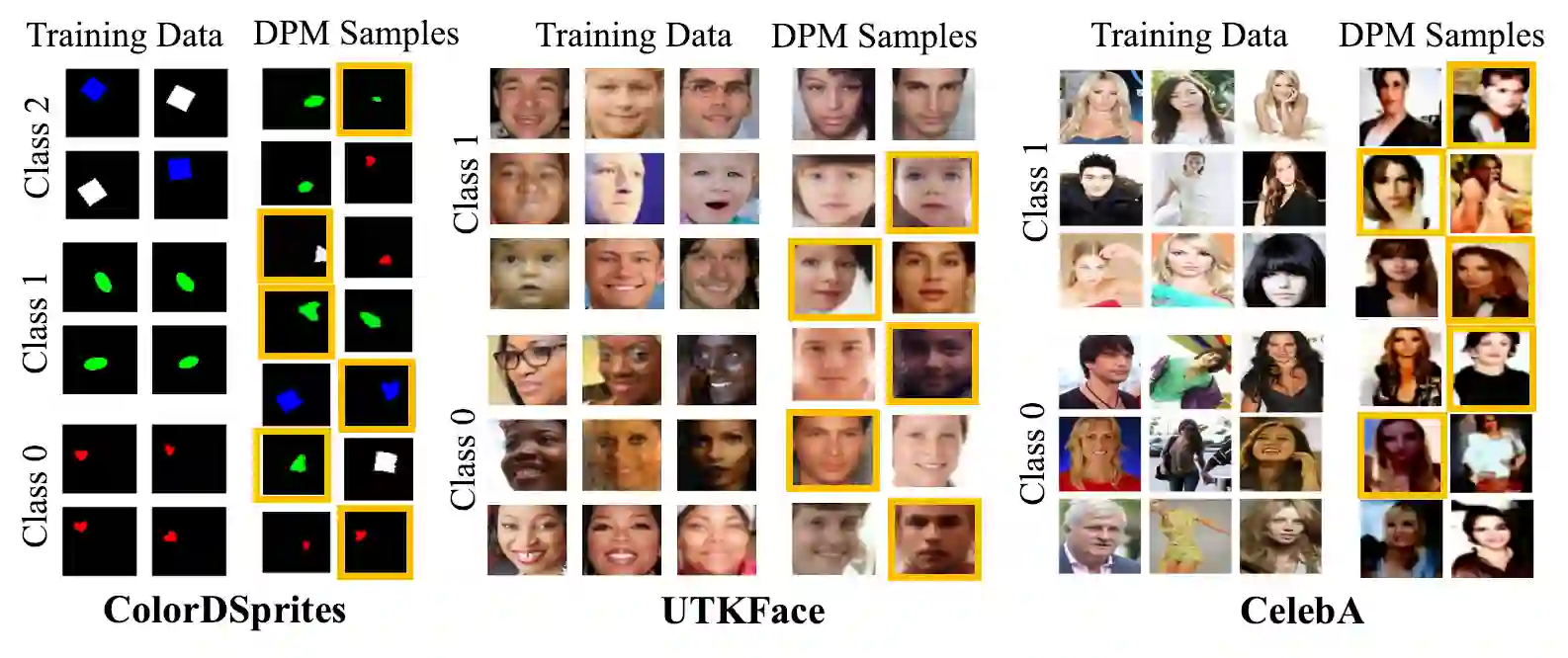

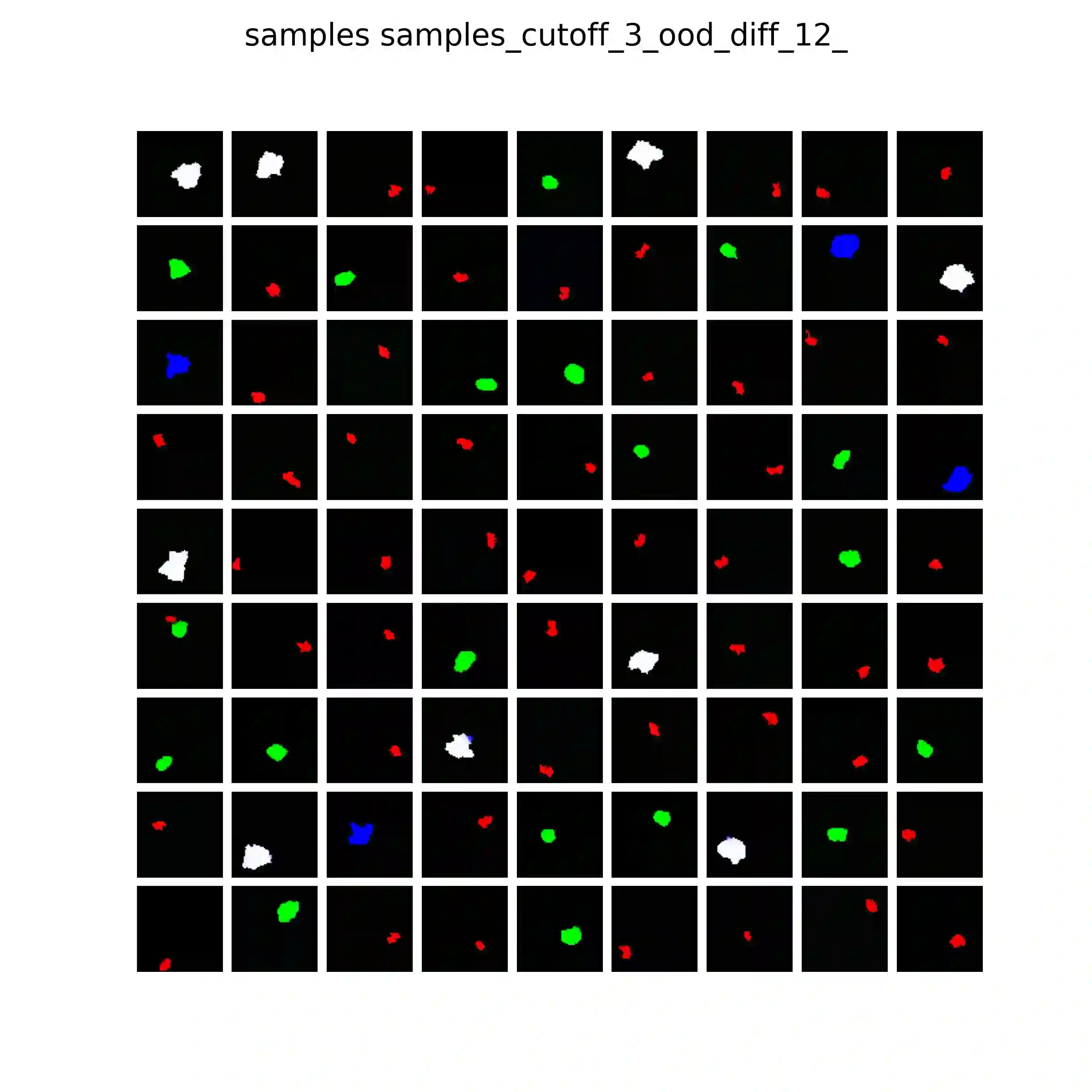

Spurious correlations in the data, where multiple cues are predictive of the target labels, often lead to a phenomenon known as shortcut learning, in which a model relies on erroneous, easy-to-learn cues while ignoring reliable ones. In this work, we propose DiffDiv, an ensemble diversification framework that exploits Diffusion Probabilistic Models (DPMs) to mitigate this form of bias. We show that, at particular training intervals, DPMs can generate images with novel feature combinations, even when trained on samples displaying correlated input features. We leverage this crucial property to generate synthetic counterfactuals that increase model diversity via ensemble disagreement. We show that DPM-guided diversification is sufficient to remove dependence on shortcut cues, without the need for additional supervised signals. We further empirically quantify its efficacy on several diversification objectives, and finally show improved generalization, with diversification on par with prior work that relies on auxiliary data collection.
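As a rough illustration of what "ensemble disagreement" on synthetic counterfactuals could look like, the sketch below combines a supervised cross-entropy term on labelled data with a penalty on how much ensemble members agree on generated samples. This is one possible diversification objective written under our own assumptions, not necessarily any of the objectives evaluated in this work. The function names (`agreement`, `diversification_objective`), the `weight` parameter, and the choice of the dot product between predictive distributions as the agreement measure are all hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, label):
    """Negative log-likelihood of the true label (clamped to avoid log(0))."""
    return -math.log(max(probs[label], 1e-12))


def agreement(p, q):
    """Chance that two members pick the same class when each samples
    a class from its own predictive distribution (assumed measure)."""
    return sum(pi * qi for pi, qi in zip(p, q))


def diversification_objective(logits_real, labels, logits_synth, weight=1.0):
    """Hypothetical objective: fit the labelled data, disagree on synthetic data.

    logits_real[m][i]  -- logits of ensemble member m on labelled sample i
    logits_synth[m][j] -- logits of member m on synthetic (e.g. DPM-generated) sample j
    """
    n_members = len(logits_real)

    # Supervised term: mean cross-entropy over all members and labelled samples.
    ce_terms = [
        cross_entropy(softmax(logits), y)
        for member in logits_real
        for logits, y in zip(member, labels)
    ]
    ce = sum(ce_terms) / len(ce_terms)

    # Diversity term: mean pairwise agreement on the synthetic samples.
    agree_terms = [
        agreement(softmax(la), softmax(lb))
        for a in range(n_members)
        for b in range(a + 1, n_members)
        for la, lb in zip(logits_synth[a], logits_synth[b])
    ]
    agree = sum(agree_terms) / len(agree_terms) if agree_terms else 0.0

    # Minimising this sum rewards correct labels and penalises agreement.
    return ce + weight * agree


# Two members that both fit the labelled data equally well.
real = [[[4.0, -4.0]], [[4.0, -4.0]]]
labels = [0]
same = [[[5.0, -5.0]], [[5.0, -5.0]]]    # members agree on the synthetic sample
split = [[[5.0, -5.0]], [[-5.0, 5.0]]]   # members disagree on the synthetic sample
loss_same = diversification_objective(real, labels, same)
loss_split = diversification_objective(real, labels, split)
```

In this toy setup `loss_split` is lower than `loss_same`: both ensembles fit the labelled sample identically, so the only difference is the agreement penalty on the synthetic sample, which favours members that rely on different cues.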
