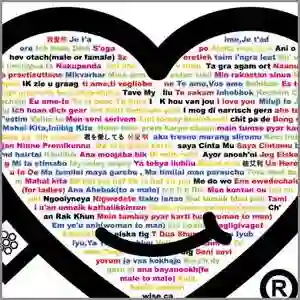

In this paper, we introduce LDGen, a novel method for integrating large language models (LLMs) into existing text-to-image diffusion models while minimizing computational demands. Traditional text encoders, such as CLIP and T5, exhibit limitations in multilingual processing, hindering image generation across diverse languages. We address these challenges by leveraging the advanced capabilities of LLMs. Our approach employs a language representation strategy that applies hierarchical caption optimization and human instruction techniques to derive precise semantic information. Subsequently, we incorporate a lightweight adapter and a cross-modal refiner to facilitate efficient feature alignment and interaction between LLMs and image features. LDGen reduces training time and enables zero-shot multilingual image generation. Experimental results indicate that our method surpasses baseline models in both prompt adherence and image aesthetic quality, while seamlessly supporting multiple languages. Project page: https://zrealli.github.io/LDGen.
