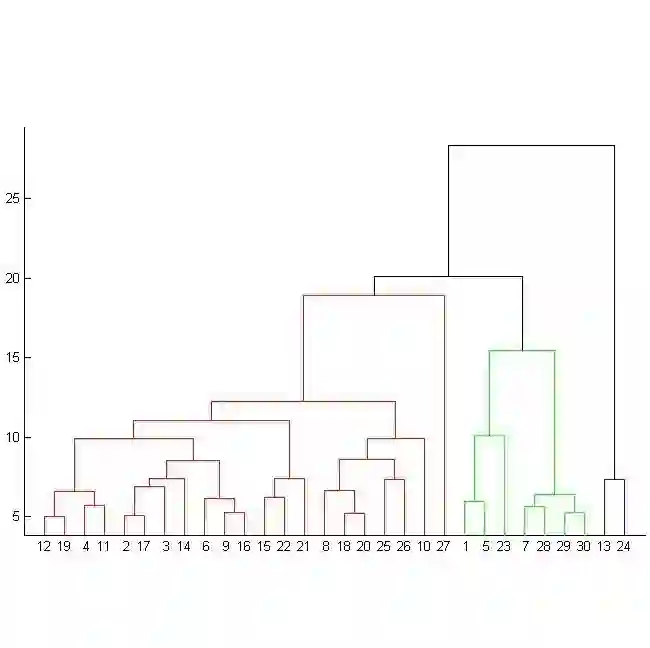

In many real-world scenarios, such as meetings, an unknown number of speakers are present and their utterances often overlap. We address these multi-speaker challenges with a novel attention-based encoder-decoder method augmented with special speaker class tokens obtained by speaker clustering. During inference, we select multiple recognition hypotheses conditioned on the predicted speaker cluster tokens and merge them by agglomerative hierarchical clustering (AHC) based on the normalized edit distance. The clustered hypotheses yield multi-speaker transcriptions, with the appropriate number of speakers determined by AHC. Experiments on the LibriMix dataset show that the proposed method is particularly effective in complex 3-mix environments, achieving a 55% relative error reduction on clean data and a 36% relative error reduction on noisy data compared with conventional serialized output training.
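The hypothesis-merging step can be illustrated with a minimal sketch. This is not the authors' implementation: the abstract does not state the linkage criterion, the stopping threshold, or how the edit distance is normalized, so the average linkage, the `threshold=0.5` default, the word-level tokenization, and the normalization by the longer sequence are all assumptions, and the function names are hypothetical.

```python
from itertools import combinations


def edit_distance(a, b):
    """Levenshtein distance between two sequences (strings or token lists)."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,              # deletion
                            curr[j - 1] + 1,          # insertion
                            prev[j - 1] + (x != y)))  # substitution or match
        prev = curr
    return prev[-1]


def normalized_distance(a, b):
    """Edit distance scaled to [0, 1].

    Assumption: normalize by the length of the longer sequence.
    """
    longest = max(len(a), len(b))
    return edit_distance(a, b) / longest if longest else 0.0


def cluster_hypotheses(hyps, threshold=0.5):
    """Agglomerative clustering of hypotheses; returns lists of indices.

    Assumptions: average linkage, and merging stops once the closest pair
    of clusters is farther apart than `threshold`.
    """
    tokens = [h.split() for h in hyps]
    clusters = [[i] for i in range(len(hyps))]

    def linkage(c1, c2):
        dists = [normalized_distance(tokens[i], tokens[j])
                 for i in c1 for j in c2]
        return sum(dists) / len(dists)

    while len(clusters) > 1:
        # Find the closest pair of clusters (p < q by construction).
        p, q = min(combinations(range(len(clusters)), 2),
                   key=lambda pq: linkage(clusters[pq[0]], clusters[pq[1]]))
        if linkage(clusters[p], clusters[q]) > threshold:
            break
        clusters[p] = clusters[p] + clusters[q]
        del clusters[q]
    return clusters


if __name__ == "__main__":
    # Toy hypotheses, as if decoded under four different speaker tokens.
    hyps = [
        "the cat sat on the mat",
        "the cat sat on a mat",
        "hello world how are you",
        "hello world how are you today",
    ]
    clusters = cluster_hypotheses(hyps)
    print(clusters)
    print("estimated number of speakers:", len(clusters))
```

In this sketch the number of clusters left when merging stops plays the role of the estimated speaker count, so near-duplicate hypotheses of the same utterance collapse into one cluster while hypotheses for different speakers stay apart.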
