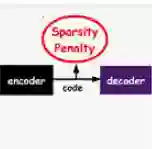

The locally competitive algorithm (LCA) can solve sparse coding problems across a wide range of use cases. Recently, convolution-based LCA approaches have been shown to be highly effective at enhancing the robustness of image recognition in vision pipelines. To further maximize representational sparsity, LCA can be combined with hard-thresholding. While this combination often yields very good solutions satisfying an $\ell_0$ sparsity criterion, it has two significant drawbacks in practice: (i) LCA is very inefficient, typically requiring hundreds of optimization cycles to converge; (ii) hard-thresholding makes the loss function non-convex, which can lead to suboptimal minima. To address these issues, we propose the Locally Competitive Algorithm with State Warm-up via Predictive Priming (WARP-LCA), which uses a predictor network to provide a suitable initial guess of the LCA state from the current input. Our approach significantly improves both convergence speed and solution quality while maintaining, and even enhancing, the overall strengths of LCA. We demonstrate that WARP-LCA converges orders of magnitude faster and reaches better minima than conventional LCA. Moreover, the learned representations are sparser and show superior reconstruction and denoising quality, as well as greater robustness when used in deep recognition pipelines. Furthermore, we apply WARP-LCA to image denoising tasks, showcasing its robustness and practical effectiveness. Our findings confirm that naive use of LCA with hard-thresholding results in suboptimal minima, whereas initializing LCA with a predictive guess yields better outcomes. This research advances the field of biologically inspired deep learning by providing a novel approach to convolutional sparse coding.
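To make the warm-start idea concrete, here is a minimal sketch. It is not the authors' implementation. It assumes the standard LCA dynamics $\dot{u} = \frac{1}{\tau}\left(\Phi^\top x - u - (\Phi^\top\Phi - I)\,a\right)$ with the hard-threshold activation $a_j = T_\lambda(u_j) = u_j$ if $|u_j| > \lambda$, else $0$. These dynamics are discretised with an explicit Euler step of size `eta` (playing the role of $dt/\tau$) and run on a small dense dictionary rather than a convolutional one. The only difference between conventional LCA and the warm-started variant is the initial state: `u0=None` starts from zero, while passing `u0` stands in for the guess a predictor network would supply. The function names, the stopping tolerance, and the fixed hand-picked `u0` used in the usage example are hypothetical illustrations, not a trained predictor.

```python
import math
from typing import List, Optional, Tuple

Vector = List[float]
Matrix = List[List[float]]  # phi[i][j] = component i of dictionary atom j


def hard_threshold(u: Vector, lam: float) -> Vector:
    """T_lambda(u): keep u_j unchanged if |u_j| > lam, otherwise set it to 0."""
    return [uj if abs(uj) > lam else 0.0 for uj in u]


def lca(x: Vector, phi: Matrix, lam: float, eta: float = 0.1,
        max_steps: int = 1000, tol: float = 1e-6,
        u0: Optional[Vector] = None) -> Tuple[Vector, int]:
    """Euler-discretised LCA with hard thresholding.

    Update: u <- u + eta * (b - u - (G - I) a), with a = T_lambda(u),
    b = Phi^T x and G = Phi^T Phi.
    u0=None is the conventional cold start (u = 0); a non-None u0 is a
    warm start (hypothetical stand-in for a predictor network's output).
    Returns the sparse code a and the number of steps until the largest
    state update falls below tol (or max_steps).
    """
    n_pix, n_atoms = len(phi), len(phi[0])
    # Feedforward drive b = Phi^T x
    b = [sum(phi[i][j] * x[i] for i in range(n_pix)) for j in range(n_atoms)]
    # Gram matrix G = Phi^T Phi; its off-diagonal entries drive lateral inhibition
    gram = [[sum(phi[i][j] * phi[i][k] for i in range(n_pix))
             for k in range(n_atoms)] for j in range(n_atoms)]
    u = list(u0) if u0 is not None else [0.0] * n_atoms
    for step in range(1, max_steps + 1):
        a = hard_threshold(u, lam)
        # (G - I) a: active atoms suppress atoms that overlap with them
        inhib = [sum(gram[j][k] * a[k] for k in range(n_atoms)) - a[j]
                 for j in range(n_atoms)]
        du = [eta * (b[j] - u[j] - inhib[j]) for j in range(n_atoms)]
        u = [u[j] + du[j] for j in range(n_atoms)]
        if max(abs(d) for d in du) < tol:
            return hard_threshold(u, lam), step
    return hard_threshold(u, lam), max_steps


if __name__ == "__main__":
    # Overcomplete 2-D dictionary: two axis atoms plus a diagonal atom.
    c = math.sqrt(0.5)
    phi = [[1.0, 0.0, c],
           [0.0, 1.0, c]]
    x = [c, c]  # signal equal to the diagonal atom
    a_cold, n_cold = lca(x, phi, lam=0.5)
    # Hypothetical "predicted" initial state close to the converged one
    a_warm, n_warm = lca(x, phi, lam=0.5, u0=[0.0, 0.0, 0.95])
    print("cold start:", a_cold, "steps:", n_cold)
    print("warm start:", a_warm, "steps:", n_warm)
```

In this toy case both runs settle on the one-atom code (only the diagonal atom active), and the warm start simply needs fewer iterations. The example illustrates only the speed-up mechanism. It does not attempt to reproduce the paper's claim about reaching better minima, which depends on the trained predictor and on the non-convex landscape of real convolutional problems.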
