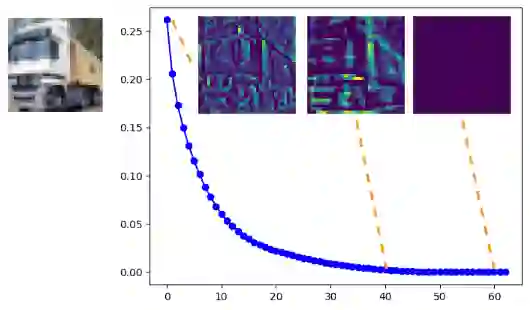

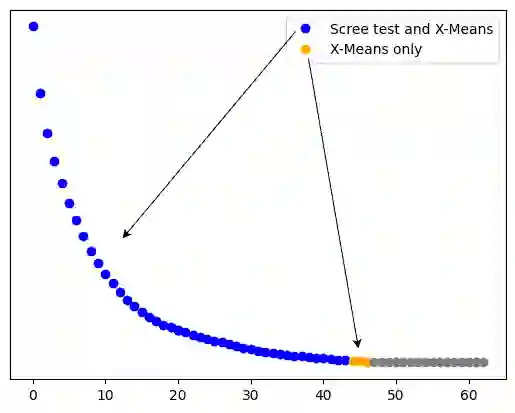

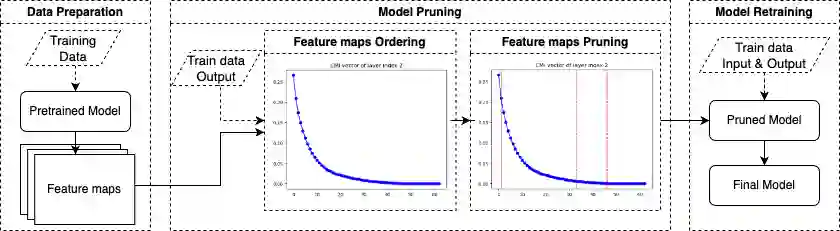

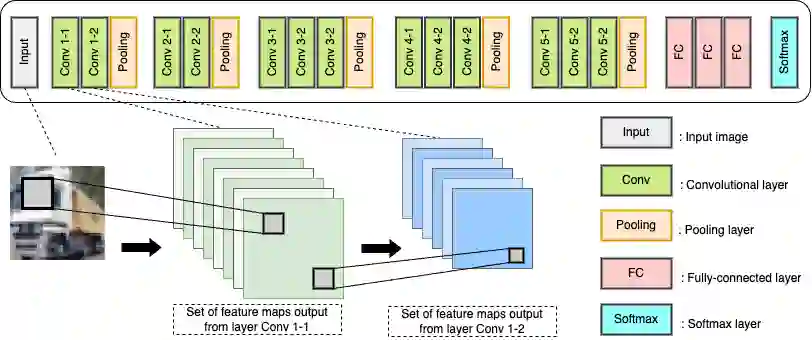

Convolutional Neural Networks (CNNs) achieve high performance in image classification tasks but are challenging to deploy on resource-limited hardware because of their large model sizes. To address this issue, we leverage Mutual Information, a metric that offers insight into how deep learning models retain and process information by measuring the information shared between network layers and either the input features or the output labels. In this study, we propose a structured filter-pruning approach for CNNs that identifies and selectively retains the most informative features in each layer. Our approach evaluates the layers successively, ranking the feature maps of each layer by their Conditional Mutual Information (CMI) values, which are computed using a matrix-based Rényi α-order entropy numerical method. We propose several formulations of CMI to capture correlation among features across different layers. We then develop various strategies to determine the CMI cutoff point below which features are pruned as unimportant. This approach allows parallel pruning in both forward and backward directions and significantly reduces model size while preserving accuracy. Tested on the VGG16 architecture with the CIFAR-10 dataset, the proposed method reduces the number of filters by more than a third, with only a 0.32% drop in test accuracy.
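To make the CMI-based ranking more concrete, the following is a minimal sketch, not the formulation used in this study. It assumes the standard matrix-based Rényi α-order entropy, $S_\alpha(A) = \frac{1}{1-\alpha}\log_2 \operatorname{tr}(A^\alpha)$, computed on a trace-normalized Gram matrix $A$, with joint entropies obtained from normalized Hadamard products and $I(X;Y\mid Z) = S_\alpha(X,Z) + S_\alpha(Y,Z) - S_\alpha(X,Y,Z) - S_\alpha(Z)$. The Gaussian kernel, the bandwidth `sigma`, the value `alpha=1.01`, the greedy selection order, and all function names are illustrative assumptions.

```python
import numpy as np

def gram_matrix(x, sigma=1.0):
    """Trace-normalized Gaussian Gram matrix for n samples (assumed kernel choice)."""
    x = np.asarray(x, dtype=float)
    x = x.reshape(x.shape[0], -1)          # flatten each sample to a vector
    sq = np.sum(x ** 2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * x @ x.T, 0.0)
    k = np.exp(-d2 / (2.0 * sigma ** 2))
    return k / np.trace(k)

def renyi_entropy(a, alpha=1.01):
    """Matrix-based Renyi alpha-order entropy (in bits) of a trace-1 PSD matrix."""
    eig = np.clip(np.linalg.eigvalsh(a), 0.0, None)  # drop tiny negative eigenvalues
    return np.log2(np.sum(eig ** alpha)) / (1.0 - alpha)

def joint(*mats):
    """Joint Gram matrix: Hadamard product of the inputs, renormalized to trace 1."""
    h = mats[0]
    for m in mats[1:]:
        h = h * m
    return h / np.trace(h)

def cmi(x, y, z, alpha=1.01):
    """I(X; Y | Z) = S(X,Z) + S(Y,Z) - S(X,Y,Z) - S(Z), all on Gram matrices."""
    return (renyi_entropy(joint(x, z), alpha)
            + renyi_entropy(joint(y, z), alpha)
            - renyi_entropy(joint(x, y, z), alpha)
            - renyi_entropy(z, alpha))

def rank_feature_maps(feats, labels, alpha=1.01, sigma=1.0):
    """Greedy ordering of channels, most informative first (hypothetical strategy).

    feats:  (n, c, h, w) activations of one layer for n samples.
    labels: (n, k) one-hot labels.
    Each step picks the channel with the largest CMI with the labels,
    conditioned on the channels already selected.
    """
    n, c = feats.shape[:2]
    y = gram_matrix(labels, sigma)
    maps = [gram_matrix(feats[:, j], sigma) for j in range(c)]
    # Gram matrix of a constant variable: conditioning on it reduces CMI to plain MI.
    selected = np.full((n, n), 1.0 / n)
    order, remaining = [], list(range(c))
    while remaining:
        scores = [cmi(maps[j], y, selected, alpha) for j in remaining]
        best = remaining[int(np.argmax(scores))]
        order.append(best)
        remaining.remove(best)
        selected = joint(selected, maps[best])
    return order
```

Given such an ordering, a cutoff rule (for example, keeping channels until the CMI score falls below a threshold) would decide which filters to prune; the specific cutoff strategies developed in this study are not reproduced here.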
