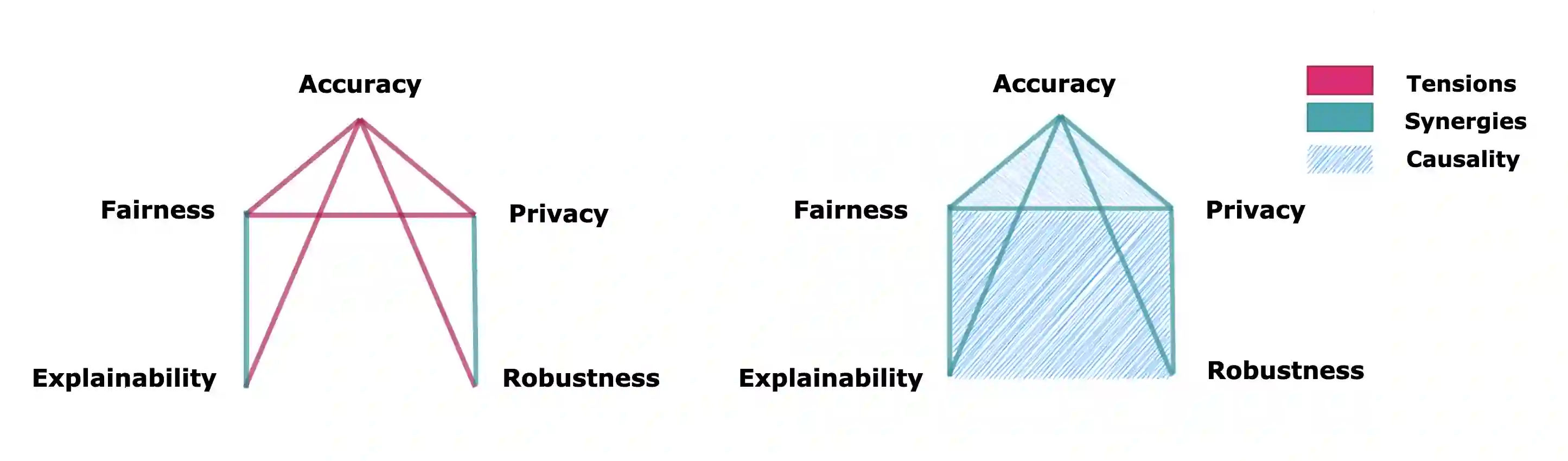

Ensuring trustworthiness in machine learning (ML) systems is crucial as they become increasingly embedded in high-stakes domains. This paper advocates for integrating causal methods into machine learning to navigate the trade-offs among key principles of trustworthy ML, including fairness, privacy, robustness, accuracy, and explainability. While these objectives should ideally be satisfied simultaneously, they are often addressed in isolation, leading to conflicts and suboptimal solutions. Drawing on existing applications of causality in ML that successfully align goals such as fairness and accuracy or privacy and robustness, this paper argues that a causal approach is essential for balancing multiple competing objectives in both trustworthy ML and foundation models. Beyond highlighting these trade-offs, we examine how causality can be practically integrated into ML and foundation models, offering solutions to enhance their reliability and interpretability. Finally, we discuss the challenges, limitations, and opportunities in adopting causal frameworks, paving the way for more accountable and ethically sound AI systems.
