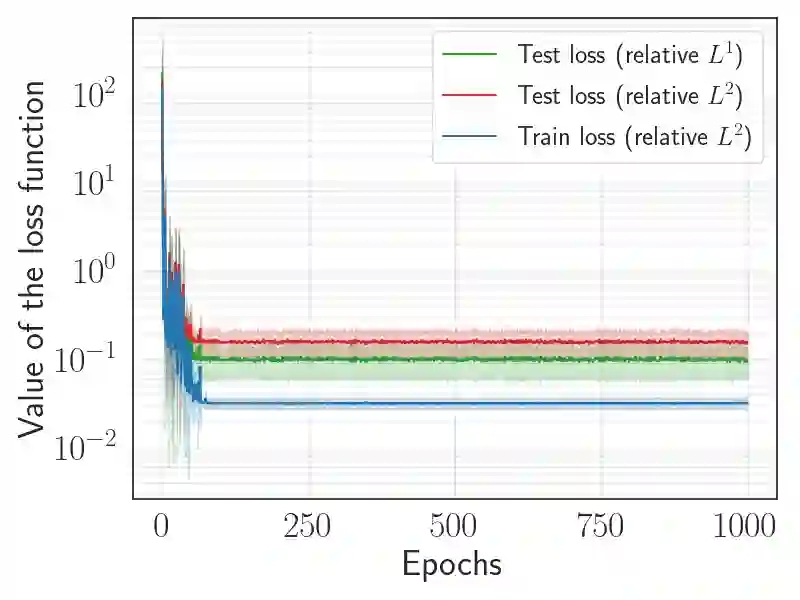

Neural Operators (NOs) are a powerful deep learning framework designed to learn the solution operators that arise from partial differential equations. This study investigates the ability of NOs to capture the stiff spatio-temporal dynamics of the FitzHugh-Nagumo model, which describes excitable cells. A key contribution of this work is evaluating translation invariance using a novel training strategy. NOs are trained using an applied current with varying spatial locations and intensities at a fixed time, and the test set introduces a more challenging out-of-distribution scenario in which the applied current is translated in both time and space. This approach significantly reduces the computational cost of dataset generation. Moreover, we benchmark seven NO architectures: Convolutional Neural Operators (CNOs), Deep Operator Networks (DONs), DONs with a CNN encoder (DONs-CNN), Proper Orthogonal Decomposition DONs (POD-DONs), Fourier Neural Operators (FNOs), Tucker Tensorized FNOs (TFNOs), and Localized Neural Operators (LocalNOs). We evaluate these models based on training and test accuracy, efficiency, and inference speed. Our results reveal that CNOs perform well on translated test dynamics. However, they incur higher training costs, even though their performance on the training set is similar to that of the other architectures considered. In contrast, FNOs achieve the lowest training error but have the highest inference time. On the translated dynamics, FNOs and their variants provide less accurate predictions. Finally, DONs and their variants are highly efficient in both training and inference; however, they do not generalize well to the test set. These findings highlight the current capabilities and limitations of NOs in capturing complex ionic model dynamics and provide a comprehensive benchmark that includes their application to scenarios involving translated dynamics.
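The train/test split described above (stimuli varied in location and intensity at a fixed onset time for training, then translated in space and time for testing) can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration and is not taken from the study: a 1D domain, the classic FitzHugh-Nagumo parameters `a=0.7`, `b=0.8`, `eps=0.08`, a Gaussian stimulus shape, an explicit Euler solver, and the hypothetical helper names `applied_current`, `simulate_fhn`, and `make_dataset`.

```python
import numpy as np

V_REST, W_REST = -1.2, -0.625  # approximate resting state for a=0.7, b=0.8

def applied_current(x, t, center, amplitude, t_on, width=0.05, duration=1.0):
    """Hypothetical stimulus: Gaussian bump in space, active for `duration`."""
    if t_on <= t < t_on + duration:
        return amplitude * np.exp(-((x - center) ** 2) / (2.0 * width**2))
    return np.zeros_like(x)

def simulate_fhn(center, amplitude, t_on, nx=64, nt=2000, dt=0.01,
                 diff=1e-3, a=0.7, b=0.8, eps=0.08, save_every=20):
    """Explicit-Euler solve of an assumed 1D FHN system on [0, 1]."""
    x = np.linspace(0.0, 1.0, nx)
    dx = x[1] - x[0]
    v = np.full(nx, V_REST)
    w = np.full(nx, W_REST)
    snapshots = []
    for n in range(nt):
        if n % save_every == 0:
            snapshots.append(v.copy())
        padded = np.pad(v, 1, mode="edge")  # repeats end values: zero-flux ends
        lap = (padded[2:] - 2.0 * v + padded[:-2]) / dx**2
        i_app = applied_current(x, n * dt, center, amplitude, t_on)
        dv = diff * lap + v - v**3 / 3.0 - w + i_app
        dw = eps * (v + a - b * w)
        v = v + dt * dv
        w = w + dt * dw
    return np.stack(snapshots)  # shape (number of saved steps, nx)

def make_dataset(n_samples, rng, center_range, t_shift_max=0.0,
                 amp_range=(0.5, 1.0)):
    """Sample stimulus parameters and simulate one trajectory per sample."""
    params, fields = [], []
    for _ in range(n_samples):
        center = rng.uniform(*center_range)
        amplitude = rng.uniform(*amp_range)
        t_on = t_shift_max * rng.random()  # always 0.0 when t_shift_max == 0
        params.append((center, amplitude, t_on))
        fields.append(simulate_fhn(center, amplitude, t_on))
    return np.array(params), np.stack(fields)

rng = np.random.default_rng(0)
# Training: fixed onset time, varying location and intensity.
train_params, train_v = make_dataset(4, rng, center_range=(0.2, 0.5))
# Test: stimulus translated in space (new center range) and in time (onset > 0).
test_params, test_v = make_dataset(4, rng, center_range=(0.5, 0.8), t_shift_max=5.0)
```

Because every training simulation uses the same onset time, only the spatial location and intensity need to be sampled when generating training data; the time-shifted cases appear only in the held-out test set.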
