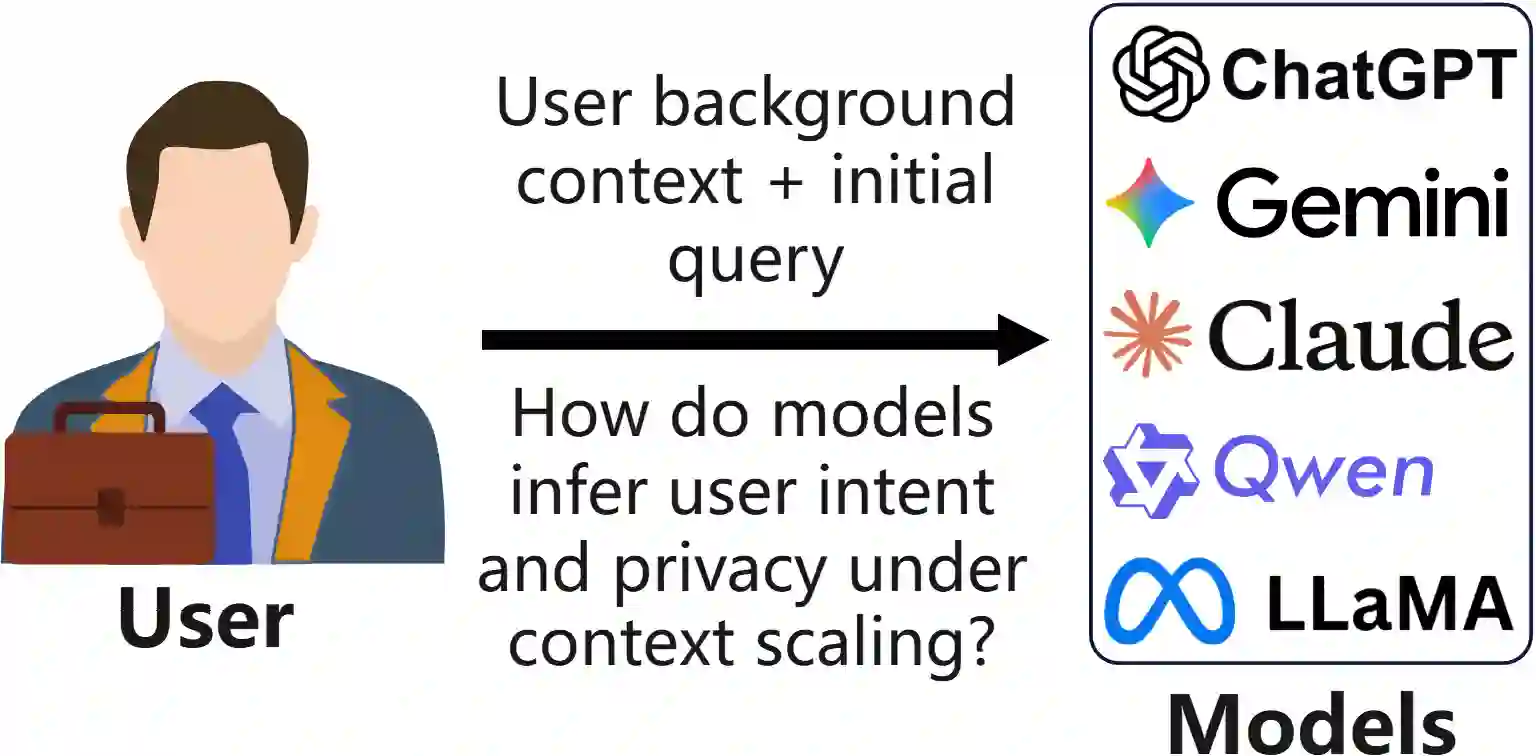

Large language models (LLMs) are increasingly deployed in privacy-critical and personalization-oriented scenarios, yet the role of context length in shaping privacy leakage and personalization effectiveness remains largely unexplored. We introduce a large-scale benchmark, PAPerBench, to systematically study how increasing context length influences both personalization quality and privacy protection in LLMs. The benchmark comprises approximately 29,000 instances with context lengths ranging from 1K to 256K tokens, yielding a total of 377K evaluation questions. It jointly evaluates personalization performance and privacy risks across diverse scenarios, enabling controlled analysis of long-context model behavior. Extensive evaluations across state-of-the-art LLMs reveal consistent performance degradation in both personalization and privacy as context length increases. We further provide a theoretical analysis of attention dilution under context scaling, explaining this behavior as an inherent limitation of soft attention in fixed-capacity Transformers. The empirical and theoretical findings together suggest a general scaling gap in current models: long context, less focus. We release the benchmark to support reproducible evaluation and future research on scalable privacy and personalization. Code and data are available at https://github.com/SafeRL-Lab/PAPerBench.
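The intuition behind attention dilution can be illustrated with a toy calculation. The sketch below is not the paper's analysis; it assumes a single softmax attention row in which a few relevant tokens score a fixed `logit_gap` above every other token, and it reports how much attention mass those tokens retain as the context grows. The function name, its parameters, and the chosen gap are hypothetical, introduced only for illustration.

```python
import math


def relevant_attention_mass(context_len: int, logit_gap: float, num_relevant: int = 1) -> float:
    """Softmax attention mass on the relevant tokens of one attention row.

    Toy assumption (not taken from the paper): the `num_relevant` tokens all
    share one logit, every other token shares a logit that is lower by
    `logit_gap`, and nothing else about the model is represented.
    """
    if not 1 <= num_relevant <= context_len:
        raise ValueError("num_relevant must be between 1 and context_len")
    # Softmax is shift-invariant, so set the distractor logit to 0.
    boosted = num_relevant * math.exp(logit_gap)
    distractors = context_len - num_relevant  # each contributes exp(0) = 1
    return boosted / (boosted + distractors)


if __name__ == "__main__":
    # Context lengths spanning roughly the 1K to 256K range in the abstract.
    for n in (1_000, 8_000, 64_000, 256_000):
        print(f"{n:>7} tokens: {relevant_attention_mass(n, logit_gap=5.0):.4f}")
```

Under these assumptions the retained mass is $e^{\Delta} / (e^{\Delta} + n - 1)$ for one relevant token, so with a bounded gap $\Delta$ it shrinks toward zero as the context length $n$ grows. Real models can learn larger gaps, so this shows only the direction of the effect and not its size.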
