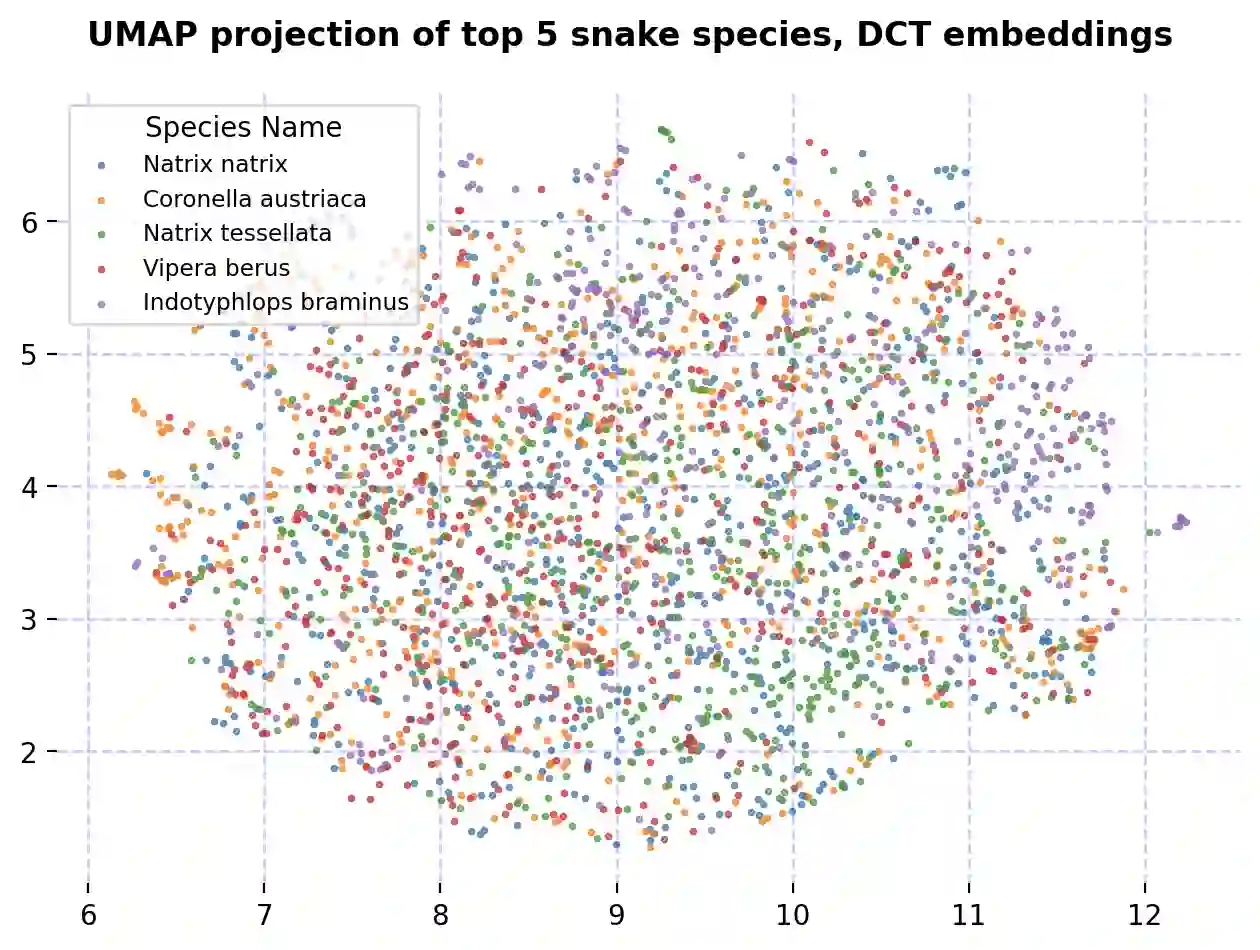

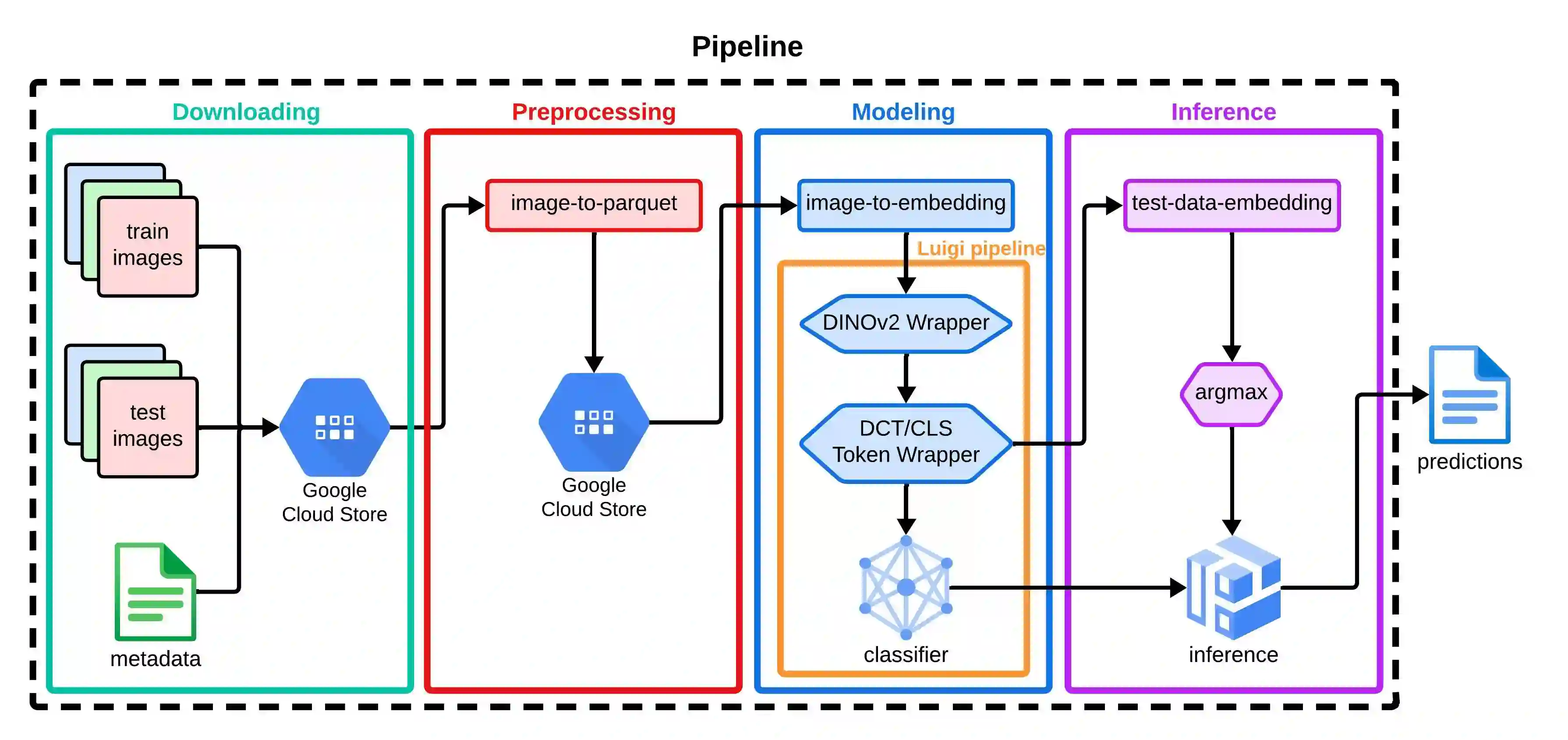

We present our approach to the SnakeCLEF 2024 competition, which asks participants to predict snake species from images. Our dataset contains 182,261 images, and species show high variability within themselves and strong visual similarity to one another. To address this, we use Meta's DINOv2 vision transformer as a feature extractor. We perform exploratory analysis of the resulting embeddings to understand their structure, then train a linear classifier on them to predict species. Although our final score of 39.69 is modest, the results suggest that DINOv2 embeddings are promising for snake identification. All code for this project is available at https://github.com/dsgt-kaggle-clef/snakeclef-2024.
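
The final step, a linear classifier trained on frozen embeddings, can be sketched as multinomial logistic regression. This is a minimal NumPy sketch under stated assumptions, not the authors' implementation (the repository above contains that). The embeddings are assumed to be 768-dimensional, which is what the DINOv2 ViT-B/14 variant produces. Synthetic Gaussian clusters stand in for real snake-image embeddings. The learning rate, epoch count, weight decay, and L2 normalization are illustrative choices, not values reported in the paper. In practice, the rows of `X` would come from a frozen DINOv2 backbone, for example one loaded with `torch.hub.load("facebookresearch/dinov2", "dinov2_vitb14")`.

```python
import numpy as np


def train_linear_classifier(X, y, num_classes, lr=0.5, epochs=200, weight_decay=1e-4):
    """Fit a linear softmax (multinomial logistic regression) classifier.

    X: (n_samples, dim) array of image embeddings, e.g. DINOv2 CLS tokens.
    y: (n_samples,) integer class labels in [0, num_classes).
    Returns weights W of shape (dim, num_classes) and bias b of shape (num_classes,).
    """
    n, dim = X.shape
    W = np.zeros((dim, num_classes))
    b = np.zeros(num_classes)
    Y = np.eye(num_classes)[y]  # one-hot targets, shape (n, num_classes)
    for _ in range(epochs):
        logits = X @ W + b
        logits -= logits.max(axis=1, keepdims=True)  # subtract row max for numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)  # softmax over classes
        grad = (probs - Y) / n  # gradient of mean cross-entropy w.r.t. logits
        W -= lr * (X.T @ grad + weight_decay * W)  # L2-regularized weight update
        b -= lr * grad.sum(axis=0)
    return W, b


def predict(X, W, b):
    """Return the highest-scoring class index for each embedding."""
    return np.argmax(X @ W + b, axis=1)


# Synthetic stand-in for DINOv2 embeddings (assumption: 768-dim, 5 toy "species").
rng = np.random.default_rng(0)
dim, num_classes, per_class = 768, 5, 40
centers = rng.normal(size=(num_classes, dim))
X = np.concatenate([c + 0.5 * rng.normal(size=(per_class, dim)) for c in centers])
y = np.repeat(np.arange(num_classes), per_class)
X /= np.linalg.norm(X, axis=1, keepdims=True)  # L2-normalize each embedding (illustrative choice)

W, b = train_linear_classifier(X, y, num_classes)
acc = (predict(X, W, b) == y).mean()
print(f"training accuracy: {acc:.2f}")
```

The backbone stays frozen, so only the `dim × num_classes` weight matrix and the bias are learned. This keeps training inexpensive even at the scale of 182,261 images, because each image needs to be embedded only once.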

翻译:本文介绍了我们为SnakeCLEF 2024竞赛提出的从图像中预测蛇类物种的方法。为应对包含182,261张图像的数据集中物种高度变异性和视觉相似性的挑战,我们探索并采用Meta的DINOv2视觉Transformer模型进行特征提取。通过对嵌入表示进行探索性分析以理解其结构特征,并在嵌入表示上训练线性分类器进行物种预测。尽管最终得分为39.69,但我们的结果表明DINOv2嵌入表示在蛇类识别中具有应用潜力。本项目所有代码已公开于https://github.com/dsgt-kaggle-clef/snakeclef-2024。