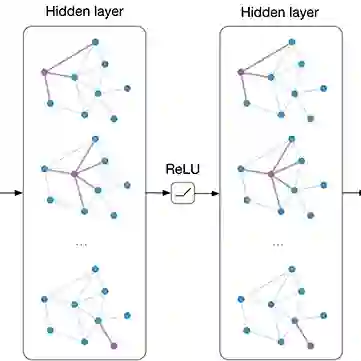

Current state-of-the-art (SOTA) methods in 3D Human Pose Estimation (HPE) are primarily based on Transformers. However, existing Transformer-based 3D HPE backbones often face a trade-off between accuracy and computational efficiency. To resolve this dilemma, we leverage recent advances in state space models and use Mamba for high-quality, efficient long-range modeling. Nonetheless, Mamba still struggles to precisely exploit the local dependencies between joints. To address this issue, we propose a new attention-free hybrid spatiotemporal architecture named Hybrid Mamba-GCN (Pose Magic). This architecture introduces local enhancement with a graph convolutional network (GCN) that captures relationships between neighboring joints, producing new representations that complement Mamba's outputs. By adaptively fusing the representations from Mamba and GCN, Pose Magic demonstrates superior capability in learning the underlying 3D structure. To meet the requirements of real-time inference, we also provide a fully causal version. Extensive experiments show that Pose Magic achieves new SOTA results ($\downarrow 0.9$ mm) while saving $74.1\%$ of FLOPs. In addition, Pose Magic exhibits optimal motion consistency and the ability to generalize to unseen sequence lengths.
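
To make the two-branch idea concrete, the following is a minimal NumPy sketch, not the authors' implementation. It assumes a pose sequence shaped `(frames, joints, channels)`. The local branch is a single graph convolution over a skeleton adjacency. The global branch is a hypothetical stand-in (a causal exponential moving average over time) rather than a real Mamba selective scan. The fusion gate, a softmax over two learned logits per joint and frame, is likewise an assumption, since the abstract does not specify how the fusion weights are computed. All function names and the `decay` parameter are illustrative.

```python
import numpy as np

def normalized_adjacency(edges, num_joints):
    """Symmetrically normalized adjacency with self-loops: D^-1/2 (A + I) D^-1/2."""
    a = np.eye(num_joints)
    for i, j in edges:
        a[i, j] = a[j, i] = 1.0
    d_inv_sqrt = 1.0 / np.sqrt(a.sum(axis=1))
    return a * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def gcn_branch(x, a_hat, w):
    """Local branch: mix each joint with its skeleton neighbors, then ReLU.

    x: (frames, joints, channels), a_hat: (joints, joints), w: (channels, channels)
    """
    return np.maximum(a_hat @ x @ w, 0.0)

def global_branch_placeholder(x, decay=0.9):
    """ASSUMPTION: stand-in for the Mamba branch, not a selective state space scan.

    A causal linear recurrence h_t = decay * h_{t-1} + (1 - decay) * x_t,
    so the output at frame t depends only on frames 0..t.
    """
    h = np.zeros_like(x[0])
    out = np.empty_like(x)
    for t in range(x.shape[0]):
        h = decay * h + (1.0 - decay) * x[t]
        out[t] = h
    return out

def adaptive_fusion(y_global, y_local, w_gate):
    """ASSUMED gate: softmax over two logits per (frame, joint) weights the branches.

    w_gate: (2 * channels, 2)
    """
    logits = np.concatenate([y_global, y_local], axis=-1) @ w_gate
    logits -= logits.max(axis=-1, keepdims=True)  # numerical stability
    alpha = np.exp(logits)
    alpha /= alpha.sum(axis=-1, keepdims=True)
    return alpha[..., :1] * y_global + alpha[..., 1:] * y_local

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    edges = [(0, 1), (1, 2), (2, 3)]      # toy 4-joint chain, not a real skeleton
    x = rng.standard_normal((5, 4, 8))    # 5 frames, 4 joints, 8 channels
    a_hat = normalized_adjacency(edges, 4)
    y_local = gcn_branch(x, a_hat, rng.standard_normal((8, 8)))
    y_global = global_branch_placeholder(x)
    fused = adaptive_fusion(y_global, y_local, rng.standard_normal((16, 2)))
    print(fused.shape)
```

Because the placeholder recurrence only looks backward in time, the sketch also illustrates what a causal variant requires: changing a future frame leaves all earlier outputs unchanged.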