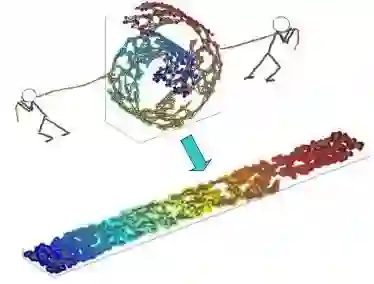

The conventional wisdom of manifold learning is based on nonlinear dimensionality reduction techniques such as Isomap and locally linear embedding (LLE). We challenge this paradigm by exploiting the blessing of dimensionality. Our intuition is simple: it is easier to untangle a low-dimensional manifold in a higher-dimensional space because of its vastness, as guaranteed by the Whitney embedding theorem. A new insight of this work is to introduce class labels as context variables in the lifted higher-dimensional space (so that supervised learning becomes unsupervised learning). We rigorously show that manifold untangling leads to linearly separable classifiers in the lifted space. To correct the inevitable overfitting, we consider the dual process of manifold untangling, namely tangling or aliasing, which is important for generalization. Using context as the bonding element, we construct a pair of manifold untangling and tangling operators, known as the tangling-untangling cycle (TUC). The untangling operator maps context-independent representations (CIR) in a low-dimensional space to context-dependent representations (CDR) in a high-dimensional space by introducing context as hidden variables. The tangling operator maps CDR back to CIR by a simple integral transformation for invariance and generalization. We also present hierarchical extensions of TUC based on the Cartesian product and fractal geometry. Despite its conceptual simplicity, TUC admits a biologically plausible and energy-efficient implementation based on the time-locking behavior of polychronization neural groups (PNG) and the sleep-wake cycle (SWC). The TUC-based theory applies to the computational modeling of various cognitive functions by hippocampal-neocortical systems.
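The lifting idea can be illustrated with a toy example. The sketch below is not the paper's construction: it assumes two concentric circles as the tangled data, a one-hot encoding of the class label as the context variable, and coordinate projection as a sample-level stand-in for integrating over context. The names `make_circles`, `untangle`, and `tangle` are hypothetical and chosen only for this illustration.

```python
import numpy as np


def make_circles(n_per_class, radii=(1.0, 2.0), seed=0):
    """Sample points on concentric circles; class k lies on radius radii[k]."""
    rng = np.random.default_rng(seed)
    points, labels = [], []
    for label, r in enumerate(radii):
        theta = rng.uniform(0.0, 2.0 * np.pi, n_per_class)
        points.append(np.column_stack([r * np.cos(theta), r * np.sin(theta)]))
        labels.append(np.full(n_per_class, label))
    return np.vstack(points), np.concatenate(labels)


def untangle(X, y, n_classes):
    """Lift CIR to CDR by appending a one-hot context vector (assumed encoding)."""
    context = np.eye(n_classes)[y]
    return np.hstack([X, context])


def tangle(Z, data_dim):
    """Map CDR back to CIR by dropping the context coordinates.

    Assumption: for samples, discarding the context axes plays the role of
    marginalizing (integrating) a joint density over the context variable.
    """
    return Z[:, :data_dim]


X, y = make_circles(100)            # 2-D data, not linearly separable
Z = untangle(X, y, n_classes=2)     # 4-D lifted representation

# In the lifted space a single hyperplane separates the classes:
# the score is (context_1 - context_0), which is -1 for class 0 and +1 for class 1.
w = np.array([0.0, 0.0, -1.0, 1.0])
pred = (Z @ w > 0).astype(int)

X_back = tangle(Z, data_dim=2)      # context removed, original points recovered

print("lifted shape:", Z.shape)
print("linearly separable in lifted space:", bool(np.all(pred == y)))
print("tangling recovers CIR:", bool(np.allclose(X_back, X)))
```

Because the context coordinates here are the labels themselves, separability in the lifted space is trivial and available only when labels are known. This is consistent with the abstract's remark that untangling alone overfits and must be paired with a tangling step.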
