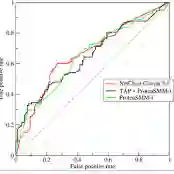

Deep Click-Through Rate (CTR) prediction models play an important role in modern industrial recommendation scenarios. However, their high memory overhead and computational cost limit deployment in resource-constrained environments. Low-rank approximation is an effective compression method for computer vision and natural language processing models, but its application to CTR prediction models has been less explored. Because memory and computing resources are limited, compressing CTR prediction models confronts three fundamental challenges: (1) how to reduce model size to fit edge devices, (2) how to speed up CTR prediction model inference, and (3) how to retain the capabilities of the original model after compression. Previous low-rank compression research mostly uses tensor decomposition, which can achieve a high parameter compression ratio but brings AUC degradation and additional computing overhead. To address these challenges, we propose a unified low-rank decomposition framework for compressing CTR prediction models. We find that even with the most classic matrix decomposition method, SVD, our framework can achieve better performance than the original model. To further improve the effectiveness of our framework, we locally compress the output features instead of compressing the model weights. Our unified low-rank compression framework can be applied to embedding tables and MLP layers in various CTR prediction models. Extensive experiments on two academic datasets and one real industrial benchmark demonstrate that, with 3-5x model size reduction, our compressed models achieve both faster inference and higher AUC than the uncompressed original models. Our code is at https://github.com/yuhao318/Atomic_Feature_Mimicking.
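To make the two ideas in the abstract concrete, the following is a minimal NumPy sketch, not the authors' implementation. It contrasts factorizing a layer's weight matrix with truncated SVD against choosing the low-rank factors from the layer's output features. The function names `svd_factorize` and `feature_factorize`, the use of PCA on the output features, and the calibration batch `X` are all assumptions made for illustration; the abstract does not specify these details.

```python
import numpy as np


def svd_factorize(W, rank):
    """Weight-side compression: approximate W (out_dim x in_dim) as A @ B.

    A has shape (out_dim, rank) and B has shape (rank, in_dim), so one dense
    layer becomes two thinner ones.
    """
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    A = U[:, :rank] * s[:rank]  # scale the kept columns by their singular values
    B = Vt[:rank, :]
    return A, B


def feature_factorize(W, b, X, rank):
    """Feature-side compression (assumed PCA variant, for illustration only).

    Instead of approximating W directly, project the layer's output features
    y = W x + b onto their top `rank` principal directions, estimated from a
    hypothetical calibration batch X of shape (n, in_dim).
    """
    Y = X @ W.T + b                        # output features, shape (n, out_dim)
    mean = Y.mean(axis=0)
    cov = np.cov(Y, rowvar=False)          # np.cov centers the data itself
    _, eigvecs = np.linalg.eigh(cov)       # eigenvalues come back in ascending order
    U = eigvecs[:, ::-1][:, :rank]         # top-`rank` principal directions
    # y ≈ U U^T (y - mean) + mean = U (U^T W x + U^T (b - mean)) + mean
    W1 = U.T @ W                           # first thin layer, (rank, in_dim)
    b1 = U.T @ (b - mean)
    W2 = U                                 # second thin layer, (out_dim, rank)
    b2 = mean
    return W1, b1, W2, b2
```

In both cases a layer with `out_dim * in_dim` weights is replaced by two layers with `rank * (out_dim + in_dim)` weights, which reduces both parameters and multiply-adds only when `rank` is smaller than `out_dim * in_dim / (out_dim + in_dim)`. The compressed layer would be evaluated as `(x @ W1.T + b1) @ W2.T + b2`.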
