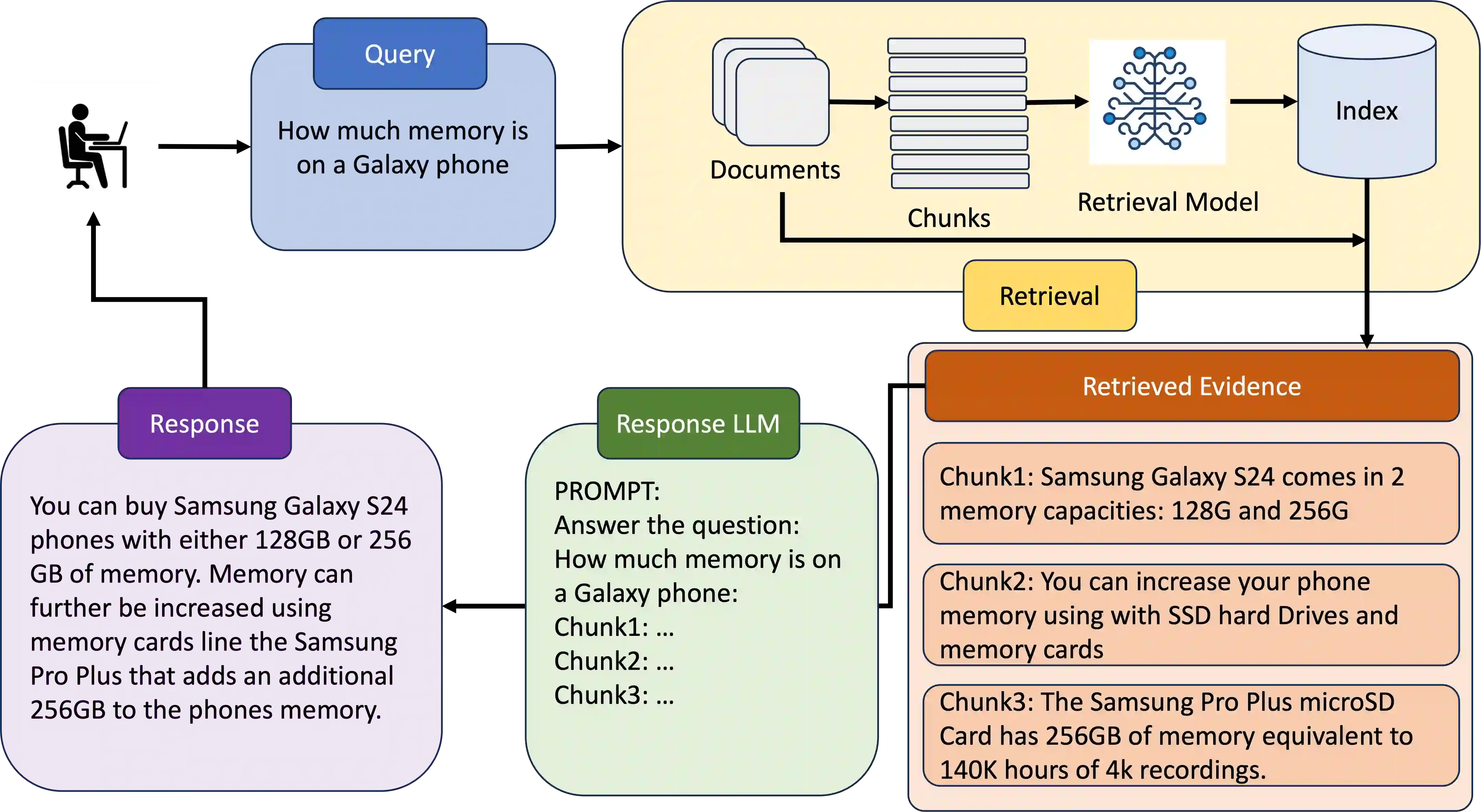

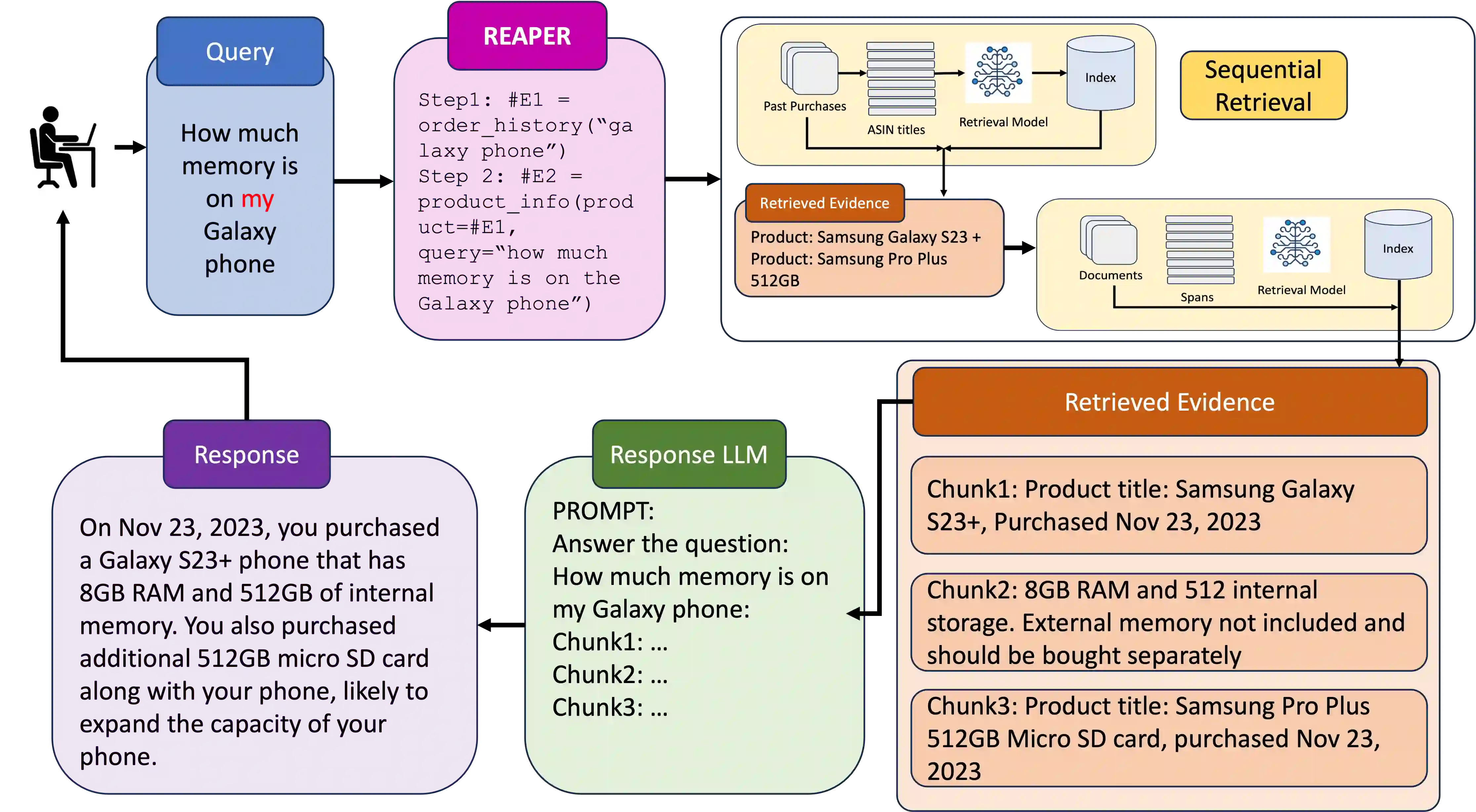

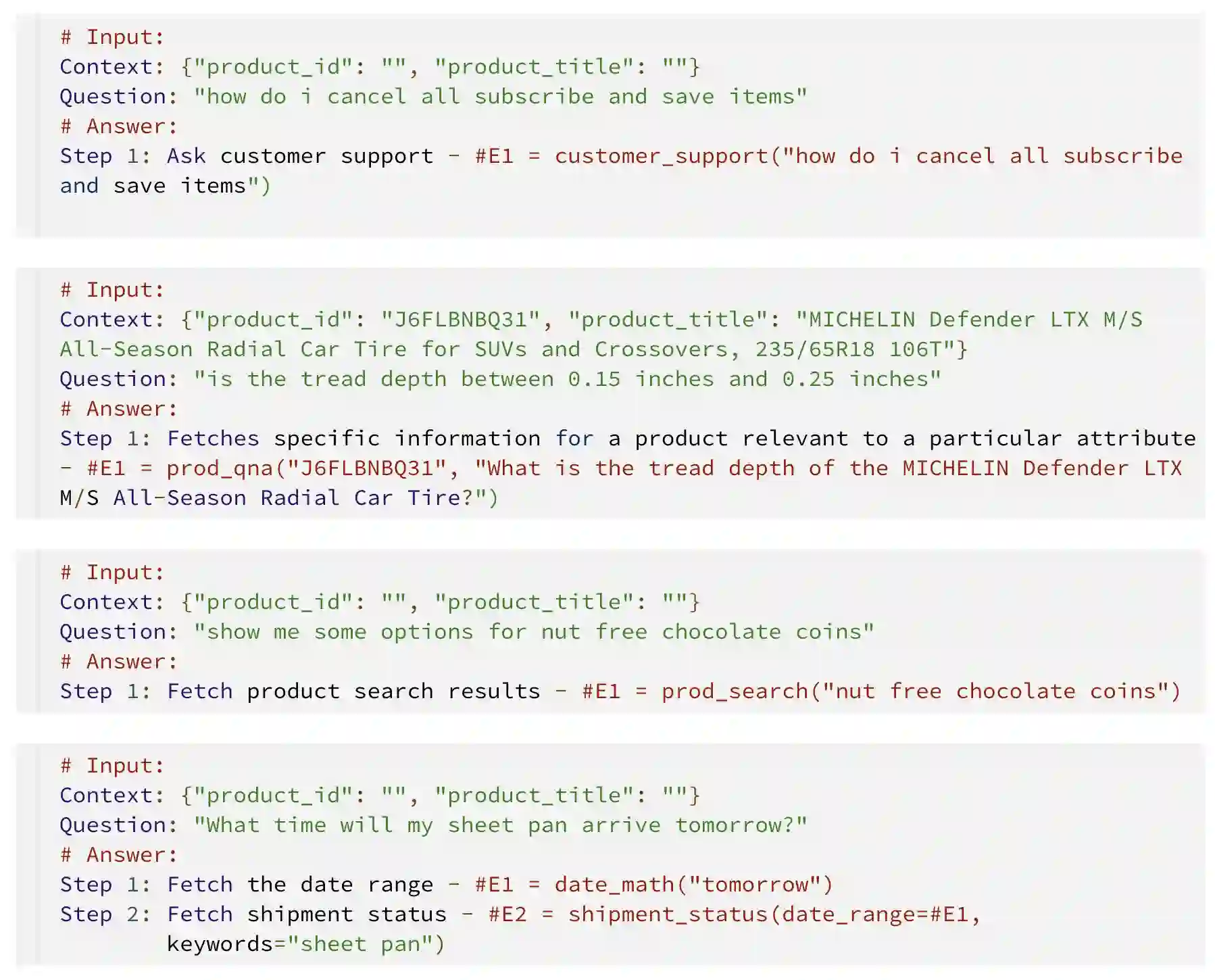

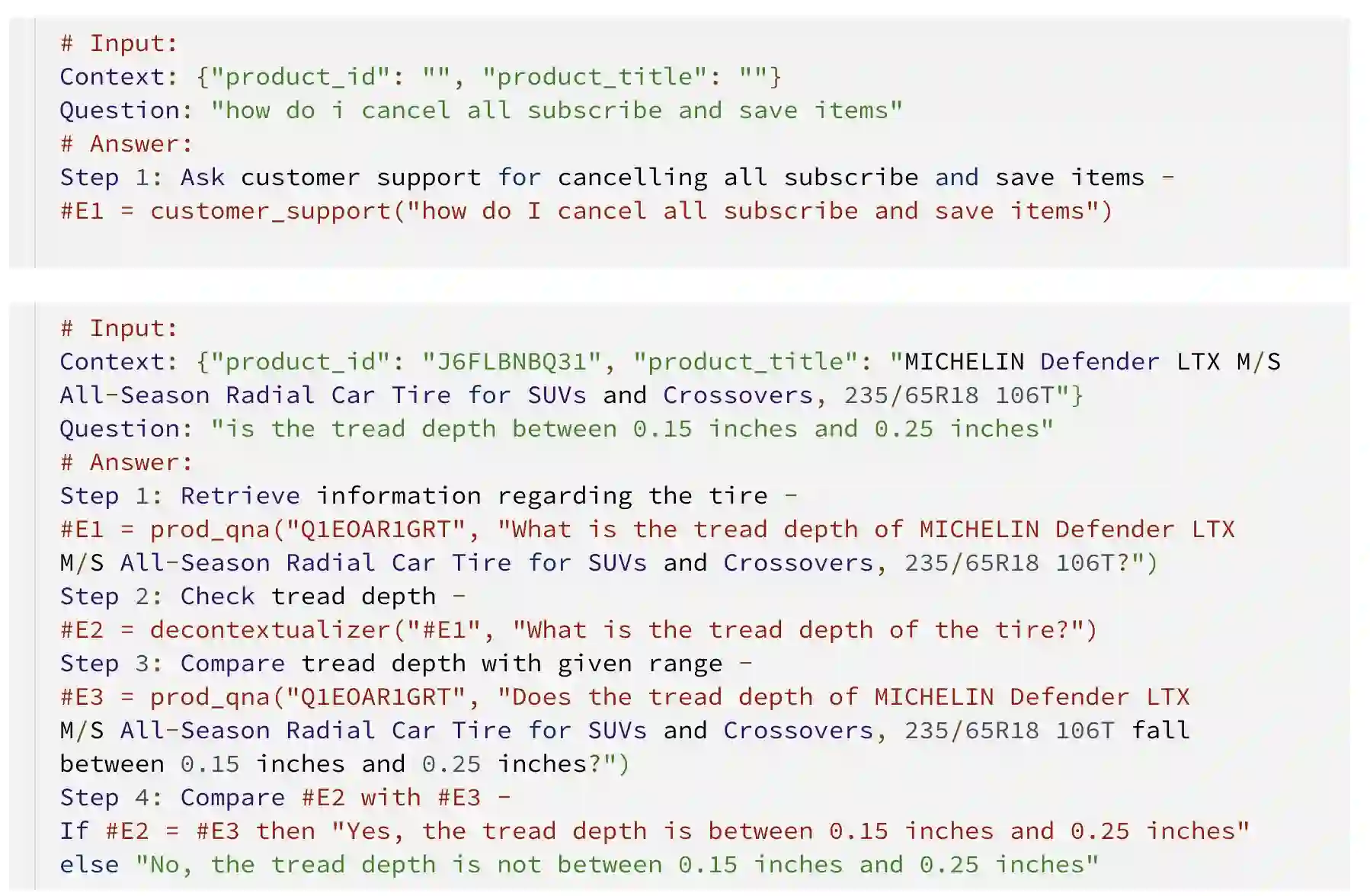

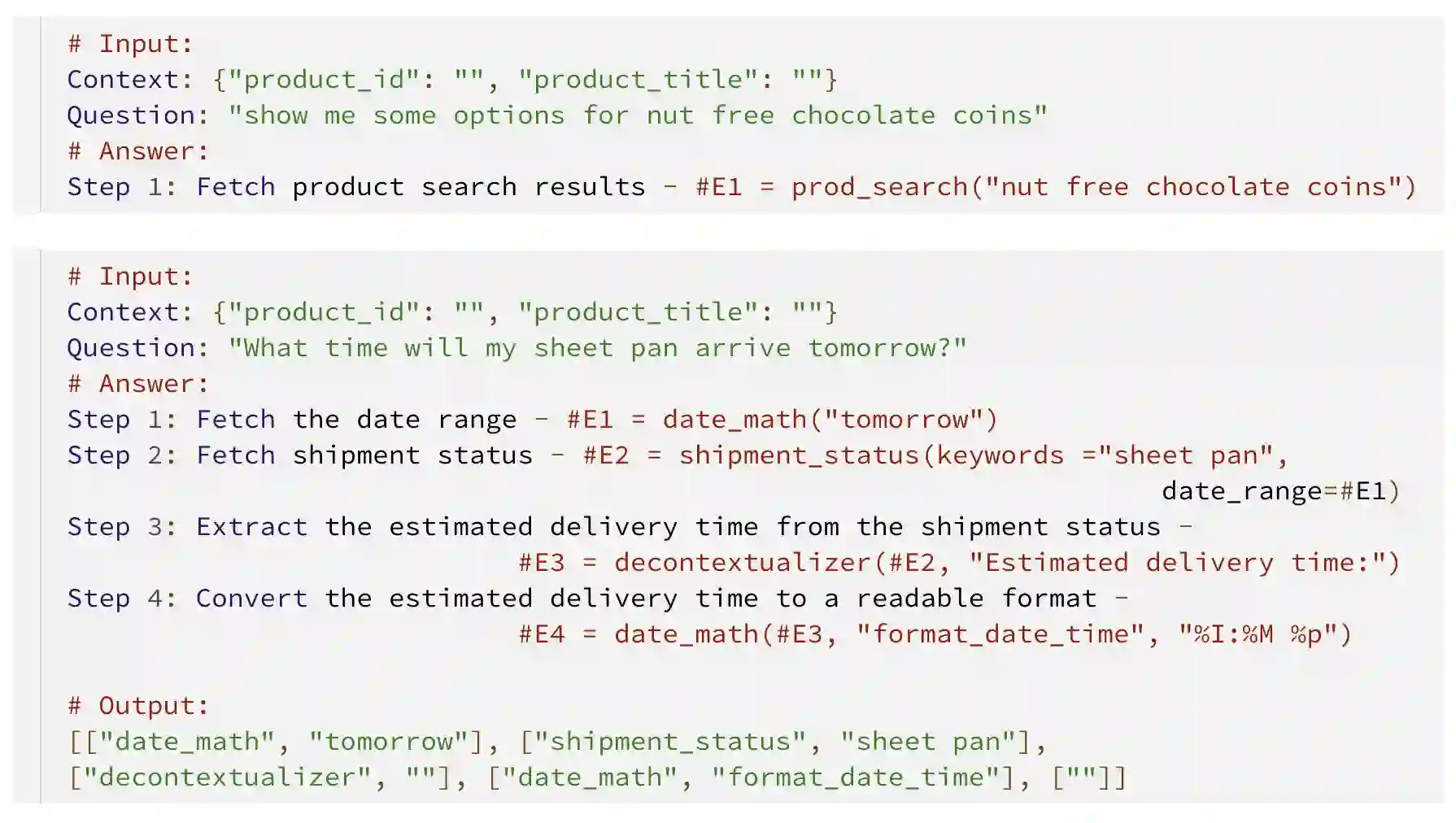

Complex dialog systems often use retrieved evidence to facilitate factual responses. Such Retrieval-Augmented Generation (RAG) systems retrieve from massive heterogeneous data stores that are usually architected as multiple indexes or APIs rather than a single monolithic source. For a given query, relevant evidence needs to be retrieved from one or a small subset of the possible retrieval sources. Complex queries can even require multi-step retrieval. For example, a conversational agent on a retail site answering customer questions about past orders needs to retrieve the appropriate customer order first, and then the evidence relevant to the customer's question in the context of the ordered product. Most RAG agents handle such Chain-of-Thought (CoT) tasks by interleaving reasoning and retrieval steps. However, each reasoning step adds directly to the latency of the system. For large models this latency cost is significant, on the order of multiple seconds. Multi-agent systems may instead classify the query to a single agent associated with a retrieval source, though this means that a (small) classification model dictates the performance of a large language model. In this work we present REAPER (REAsoning-based PlannER), an LLM-based planner that generates retrieval plans in conversational systems. We show significant latency gains over agent-based systems, and the planner scales easily to new and unseen use cases compared with classification-based planning. Though our method can be applied to any RAG system, we show our results in the context of a conversational shopping assistant.
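To make the contrast with interleaved reasoning concrete, the following is a minimal Python sketch of the plan-then-execute idea for the past-order example: the plan is produced once, and the retrieval steps then run without further reasoning calls. It is an illustration under stated assumptions, not REAPER's actual plan format. The `Step` structure, the `$name` reference convention, the `order_lookup` and `product_qa` sources, and the hard-coded `make_plan` (standing in for a single LLM planner call) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    tool: str             # hypothetical retrieval source name
    args: Dict[str, str]  # literal values, or "$name" references to earlier outputs
    output: str           # key under which this step's result is stored


# Hypothetical stand-ins for two retrieval sources (an order API and a product index).
def order_lookup(customer_id: str) -> str:
    return {"c42": "espresso-machine-x1"}[customer_id]


def product_qa(product_id: str, question: str) -> str:
    return f"evidence for '{question}' about {product_id}"


TOOLS: Dict[str, Callable[..., str]] = {
    "order_lookup": order_lookup,
    "product_qa": product_qa,
}


def make_plan(question: str, customer_id: str) -> List[Step]:
    """Stand-in for the single planner call; hard-coded here for illustration."""
    return [
        Step("order_lookup", {"customer_id": customer_id}, "product"),
        Step("product_qa", {"product_id": "$product", "question": question}, "evidence"),
    ]


def execute(plan: List[Step]) -> Dict[str, str]:
    """Run the steps in order, substituting "$name" references with earlier results."""
    results: Dict[str, str] = {}
    for step in plan:
        resolved = {
            key: results[value[1:]] if value.startswith("$") else value
            for key, value in step.args.items()
        }
        results[step.output] = TOOLS[step.tool](**resolved)
    return results


if __name__ == "__main__":
    print(execute(make_plan("How do I descale it?", "c42")))
```

In this sketch the second step depends on the first only through the `$product` reference, so the executor needs no model call between retrievals; that is the latency property the abstract attributes to planning up front.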
