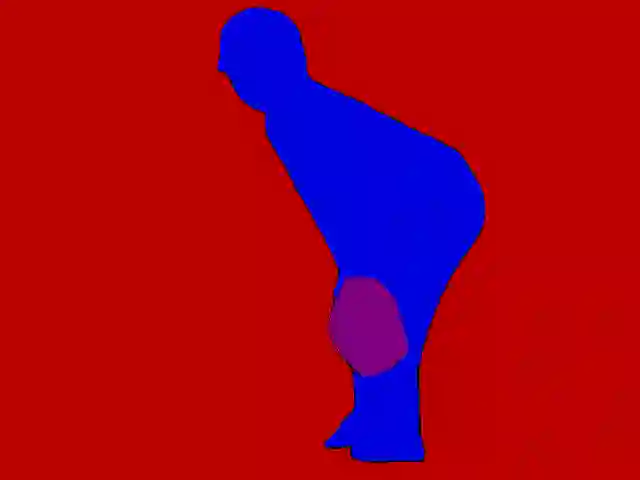

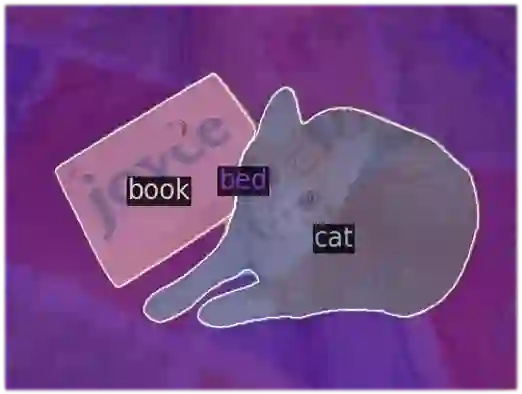

In recent years, multimodal large language models (MLLMs) have made significant strides by training on vast, high-quality image-text datasets, which gives them a good general understanding of images. However, fine-grained or spatially dense information, such as masks, is inherently difficult to convey explicitly in text. This limits the ability of MLLMs to answer questions that require understanding detailed or localized visual elements. Drawing inspiration from Retrieval-Augmented Generation (RAG), this paper proposes a new visual prompt approach that integrates fine-grained external knowledge, obtained from specialized vision models (e.g., instance segmentation or OCR models), into MLLMs. This is a promising yet underexplored direction for improving MLLM performance. Our approach differs from concurrent works, which convert external knowledge into additional text prompts and therefore require the model to learn the correspondence between visual content and text coordinates indirectly. Instead, we propose embedding the fine-grained knowledge directly into a spatial embedding map that serves as a visual prompt. This design can be incorporated easily into various MLLMs, such as LLaVA and Mipha, and considerably improves their visual understanding performance. Through rigorous experiments, we demonstrate that our method improves MLLM performance across nine benchmarks and strengthens their fine-grained, context-aware capabilities.
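To make the idea of a spatial embedding map more concrete, here is a minimal sketch in Python with NumPy. It is not the paper's implementation: the abstract does not specify how the map is built or fused. The function names `build_spatial_prompt` and `fuse_with_patches`, the 0.5 coverage threshold, and fusion by element-wise addition are all assumptions made for illustration. The sketch assumes each instance from a segmentation model comes with a pixel-level mask and a label embedding of the same width as the image patch features.

```python
import numpy as np


def build_spatial_prompt(masks, label_embeddings, grid_size, dim):
    """Build a (grid_size, grid_size, dim) map of label embeddings.

    masks: list of (H, W) boolean arrays, one per detected instance.
    label_embeddings: list of (dim,) vectors, one per instance (hypothetical;
        e.g. an embedding of the class name or OCR text).
    A patch receives an instance's embedding when the mask covers more than
    half of that patch (the 0.5 threshold is an assumption).
    """
    prompt = np.zeros((grid_size, grid_size, dim), dtype=np.float32)
    for mask, emb in zip(masks, label_embeddings):
        h, w = mask.shape
        ph, pw = h // grid_size, w // grid_size
        # Crop to a multiple of the patch size, then average within each patch
        # to get the fraction of the patch covered by the mask.
        cropped = mask[: ph * grid_size, : pw * grid_size]
        coverage = cropped.reshape(grid_size, ph, grid_size, pw).mean(axis=(1, 3))
        # Later instances overwrite earlier ones where they overlap.
        prompt[coverage > 0.5] = emb
    return prompt


def fuse_with_patches(patch_features, prompt):
    """Add the spatial prompt to flattened patch features (assumed fusion)."""
    dim = prompt.shape[-1]
    return patch_features + prompt.reshape(-1, dim)


# Toy example: an 8x8 image, a 2x2 patch grid, one instance in the top-left.
mask = np.zeros((8, 8), dtype=bool)
mask[:4, :4] = True
embedding = np.array([1.0, 2.0, 3.0], dtype=np.float32)

prompt = build_spatial_prompt([mask], [embedding], grid_size=2, dim=3)
patches = np.zeros((4, 3), dtype=np.float32)  # stand-in for vision encoder output
fused = fuse_with_patches(patches, prompt)
```

In this toy case only the first patch carries the instance embedding, so the knowledge stays spatially aligned with the image region it describes rather than being passed as coordinates in a text prompt.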
