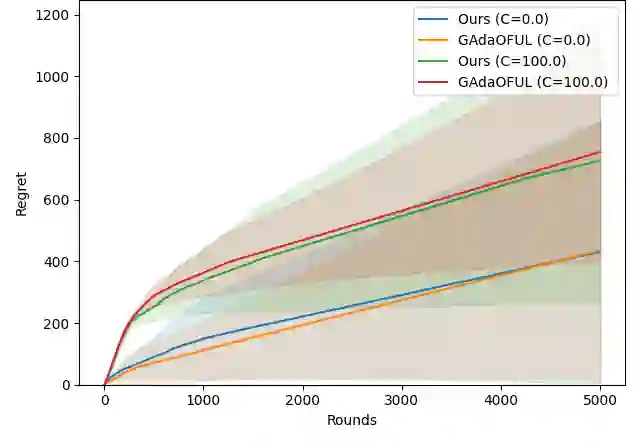

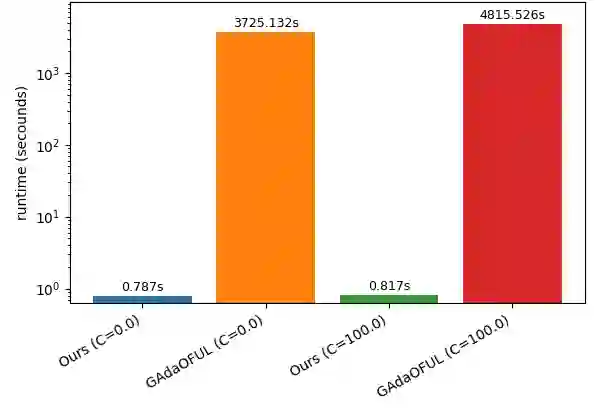

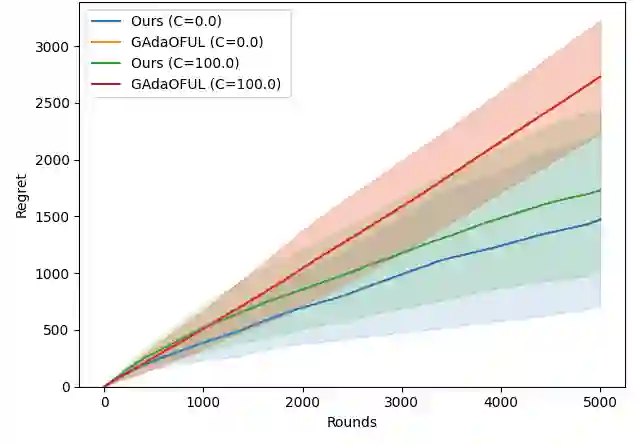

We study linear contextual bandits under adversarial corruption and heavy-tailed noise with finite $(1+\epsilon)$-th moments for some $\epsilon \in (0,1]$. Existing work that addresses both adversarial corruption and heavy-tailed noise relies on a finite-variance (i.e., finite second-moment) assumption and is computationally inefficient. We propose a computationally efficient algorithm based on online mirror descent that is robust to both adversarial corruption and heavy-tailed noise. Whereas the existing algorithm incurs $\mathcal{O}(t\log T)$ computational cost per round, our algorithm reduces this to $\mathcal{O}(1)$. We establish an additive regret bound consisting of two terms: one depending on the $(1+\epsilon)$-th moment bound of the noise and one depending on the total amount of corruption. In particular, when $\epsilon = 1$, our result recovers existing guarantees under finite-variance assumptions, and when no corruption is present, it matches the best-known rates for linear contextual bandits with heavy-tailed noise. Moreover, the algorithm guarantees sublinear regret without prior knowledge of either the noise moment bound or the total amount of corruption.
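To make the per-round cost claim concrete, the following is a minimal illustrative sketch and not the algorithm proposed in this work. It assumes a Huber-type loss and an unprojected mirror-descent step with a quadratic (Mahalanobis) regularizer; the names `OnlineRobustEstimator`, `huber_grad`, `heavy_tailed_noise`, and the parameters `reg`, `tau`, and `alpha` are hypothetical. The point is only that each update touches a fixed amount of state, so its cost depends on the dimension but not on the number of past rounds, and that clipping the residual bounds the influence of any single heavy-tailed or corrupted reward.

```python
import random
from typing import List


def huber_grad(residual: float, tau: float) -> float:
    """Derivative of the Huber loss in the residual: identity on [-tau, tau], clipped outside."""
    return max(-tau, min(tau, residual))


def heavy_tailed_noise(rng: random.Random, alpha: float = 1.5) -> float:
    """Symmetric Lomax-type noise: E|noise|^p is finite only for p < alpha.

    With alpha = 1.5 the variance is infinite, but the (1 + eps)-th moment
    is finite for every eps < 0.5. (Illustrative choice, not from the paper.)
    """
    magnitude = rng.paretovariate(alpha) - 1.0
    return magnitude if rng.random() < 0.5 else -magnitude


class OnlineRobustEstimator:
    """Hypothetical one-pass estimator of a linear reward parameter.

    Each update is a mirror-descent-style step theta += H^{-1} x * clip(residual),
    where H = reg * I + sum of x x^T. The inverse is maintained by a rank-one
    Sherman-Morrison update, so one round costs O(dim^2) regardless of how many
    rounds came before.
    """

    def __init__(self, dim: int, reg: float = 1.0, tau: float = 1.0) -> None:
        self.theta: List[float] = [0.0] * dim
        self.h_inv: List[List[float]] = [
            [1.0 / reg if i == j else 0.0 for j in range(dim)] for i in range(dim)
        ]
        self.tau = tau

    def _h_inv_times(self, x: List[float]) -> List[float]:
        return [sum(row[j] * x[j] for j in range(len(x))) for row in self.h_inv]

    def update(self, x: List[float], reward: float) -> None:
        d = len(x)
        # Sherman-Morrison: (H + x x^T)^{-1} = H^{-1} - (H^{-1}x)(H^{-1}x)^T / (1 + x^T H^{-1} x)
        hx = self._h_inv_times(x)
        denom = 1.0 + sum(x[i] * hx[i] for i in range(d))
        for i in range(d):
            for j in range(d):
                self.h_inv[i][j] -= hx[i] * hx[j] / denom
        # Clipped residual limits how far one extreme reward can move the estimate.
        residual = reward - sum(t * xi for t, xi in zip(self.theta, x))
        g = huber_grad(residual, self.tau)
        step = self._h_inv_times(x)
        for i in range(d):
            self.theta[i] += g * step[i]
```

In this sketch, taking `tau` very large disables the clipping and the update reduces to recursive ridge regression; a finite `tau` trades some efficiency on clean data for bounded sensitivity to outliers. Rewards for experimentation could be simulated as the inner product of a context with a fixed parameter plus `heavy_tailed_noise(rng)`.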
