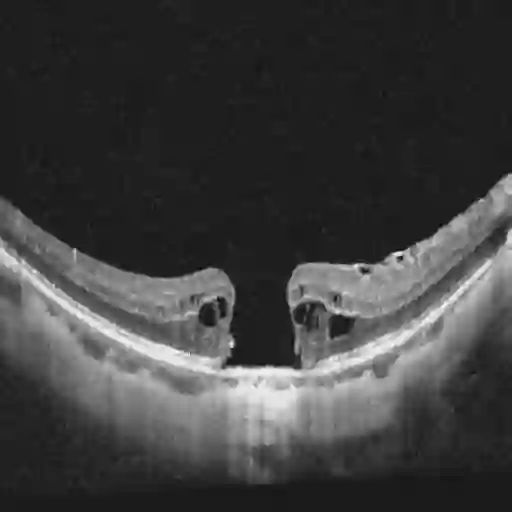

Ophthalmology relies heavily on detailed image analysis for diagnosis and treatment planning. While large vision-language models (LVLMs) have shown promise in understanding complex visual information, their performance on ophthalmology images remains underexplored. We introduce LMOD, a dataset and benchmark for evaluating LVLMs on ophthalmology images, covering anatomical understanding, diagnostic analysis, and demographic extraction. LMOD includes 21,993 images spanning optical coherence tomography, scanning laser ophthalmoscopy, eye photos, surgical scenes, and color fundus photographs. We benchmark 13 state-of-the-art LVLMs and find that they are far from perfect at comprehending ophthalmology images. The models struggle with diagnostic analysis and demographic extraction, and they reveal weaknesses in spatial reasoning, handling out-of-domain queries, and safeguards for handling biomarkers in ophthalmology images.
