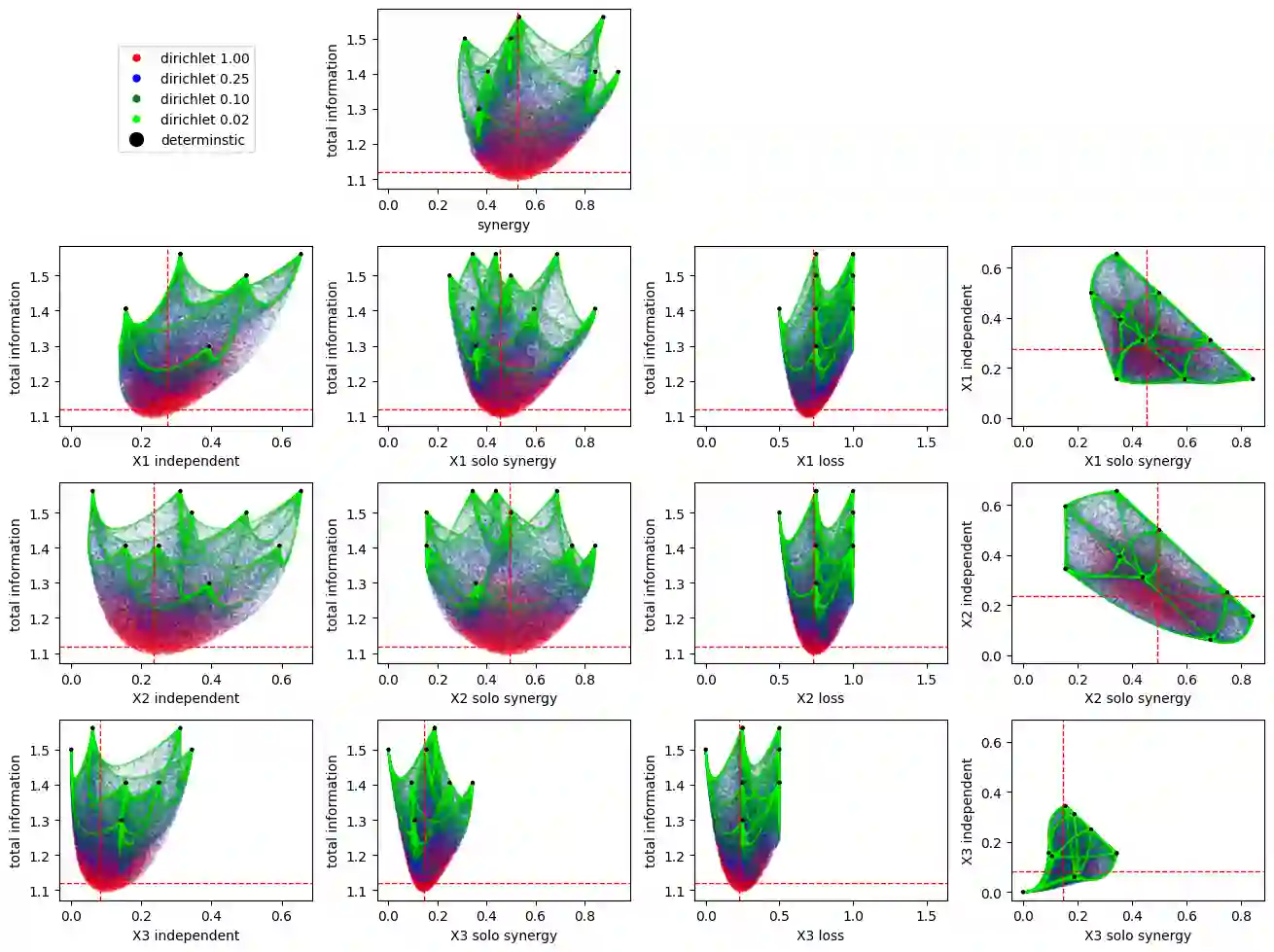

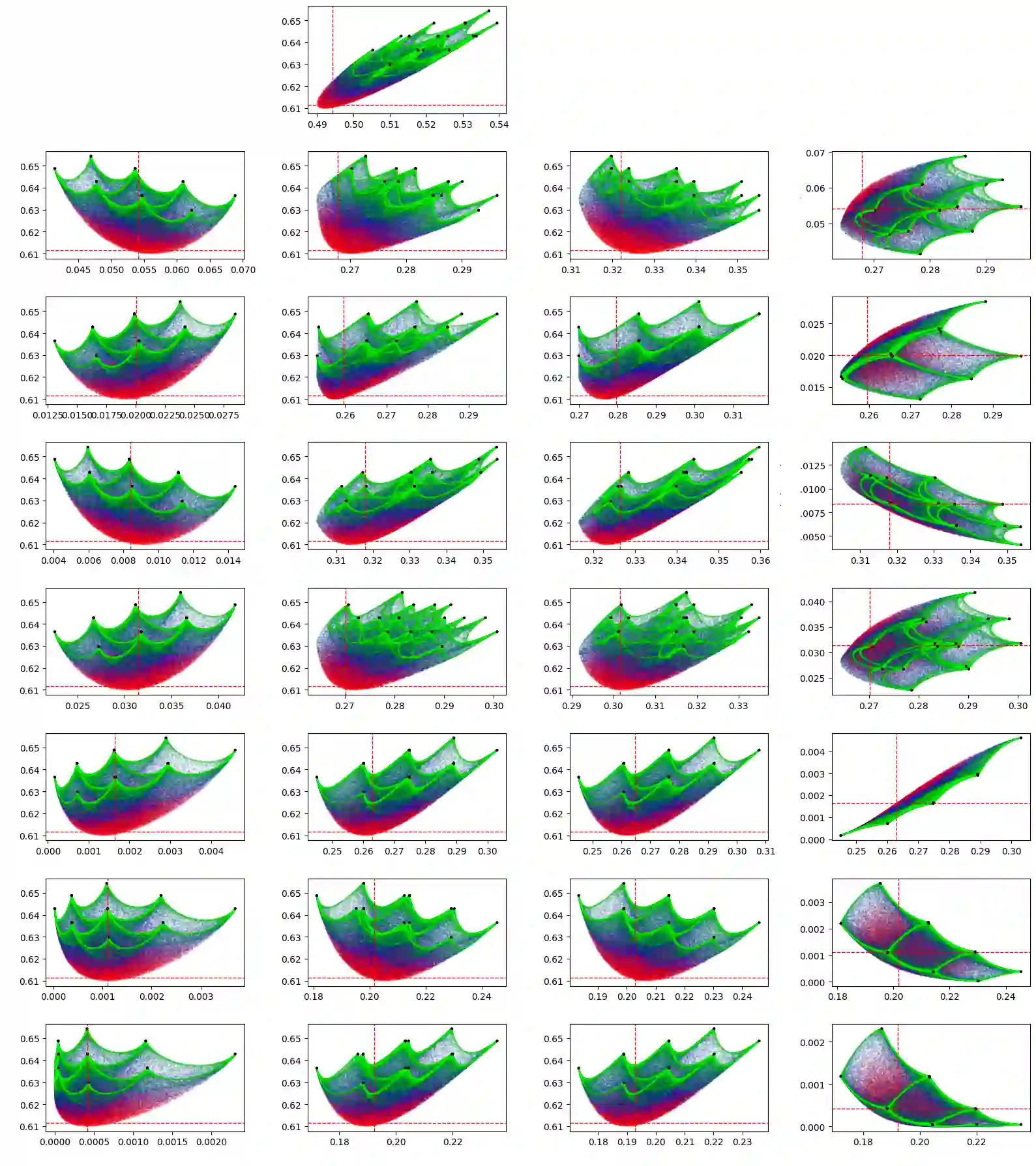

A central challenge in analyzing multivariate interactions within complex systems is to decompose how multiple inputs jointly determine an output. Existing approaches generally operate on observed probability distributions and can conflate a system's intrinsic functional logic with statistical artifacts of limited data. As a result, distinct systems can yield identical observations, rendering information decomposition fundamentally underdetermined and obscuring true higher-order interactions. We introduce Functional Information Decomposition (FID), a computational and theoretical framework that defines informational components with respect to a system's complete input-output mapping. FID thereby addresses a core cross-scale inference problem: determining how information carried by individual components combines to shape system-level behavior. When the mapping is fully specified, FID provides a unique decomposition into independent and synergistic contributions. Crucially, given only partial observations, FID characterizes the entire space of consistent decompositions by sampling compatible functions, making inferential limits explicit. A complementary geometric perspective clarifies the structural origin of informational components. We demonstrate FID's interdisciplinary utility on canonical logical functions, Conway's Game of Life, and gene-expression-based prediction of cancer drug response, and provide an open-source implementation. By separating functional architecture from observational distribution, FID offers a principled foundation for analyzing multivariate dependence in both fully and partially observed complex systems.
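The abstract does not state FID's formulas, so the sketch below is only a hedged illustration of the underdetermination problem it describes, not FID itself. It assumes two binary inputs with a uniform input distribution and substitutes the classical "whole-minus-sum" quantity I(X1,X2;Y) - I(X1;Y) - I(X2;Y) for FID's synergistic component. The helper names (`compatible_functions`, `whole_minus_sum`, `mutual_information`) are hypothetical. Where FID is described as sampling compatible functions, this sketch enumerates all of them, which is feasible only because a two-input truth table has four rows.

```python
import itertools
import math
from collections import Counter

# The four input patterns of a 2-input Boolean function, in a fixed order.
INPUTS = list(itertools.product([0, 1], repeat=2))


def entropy(counts):
    """Shannon entropy (bits) of the empirical distribution in a Counter."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values() if c > 0)


def mutual_information(table, idx):
    """I(X_idx ; Y) in bits, ASSUMING inputs are uniform over INPUTS.

    `table` maps each input tuple to an output bit; `idx` is a tuple of
    input positions such as (0,), (1,) or (0, 1).
    """
    h_x = entropy(Counter(tuple(x[i] for i in idx) for x in INPUTS))
    h_y = entropy(Counter(table[x] for x in INPUTS))
    h_xy = entropy(Counter((tuple(x[i] for i in idx), table[x]) for x in INPUTS))
    return h_x + h_y - h_xy  # I(X;Y) = H(X) + H(Y) - H(X,Y)


def whole_minus_sum(table):
    """Stand-in synergy proxy, NOT FID's definition: joint MI minus single-input MIs."""
    return (mutual_information(table, (0, 1))
            - mutual_information(table, (0,))
            - mutual_information(table, (1,)))


def compatible_functions(observed):
    """Yield every complete truth table that agrees with the partial `observed` dict."""
    unknown = [x for x in INPUTS if x not in observed]
    for bits in itertools.product([0, 1], repeat=len(unknown)):
        table = dict(observed)
        table.update(zip(unknown, bits))
        yield table


if __name__ == "__main__":
    # Hypothetical partial observation: only inputs (0,0) and (1,1) were seen.
    observed = {(0, 0): 0, (1, 1): 0}
    for table in compatible_functions(observed):
        outputs = [table[x] for x in INPUTS]
        print(outputs, round(whole_minus_sum(table), 4))
```

With only the rows (0,0) and (1,1) observed, the four compatible functions range from a constant (proxy value 0) through two AND-like functions (about 0.19 bits) to XOR (1 bit), so identical observations admit very different interaction structures; a fully specified table, by contrast, yields a single value.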

翻译:分析复杂系统中多元相互作用的一个核心挑战在于分解多个输入如何共同决定一个输出。现有方法通常基于观测到的概率分布进行操作,可能将系统内在的功能逻辑与有限数据产生的统计假象相混淆。因此,不同的系统可能产生相同的观测结果,导致信息分解从根本上具有不确定性,并掩盖了真实的高阶相互作用。我们提出了功能信息分解(FID),这是一个兼具计算与理论性质的框架,它依据系统的完整输入-输出映射来定义信息组分,从而解决了一个核心的跨尺度推断问题:确定由各个组分携带的信息如何组合以塑造系统层面的行为。当映射被完全指定时,FID能够提供一种将信息分解为独立贡献与协同贡献的唯一分解。至关重要的是,在仅给定部分观测数据的情况下,FID通过采样兼容的函数来刻画所有一致分解所构成的空间,从而明确推断的极限。一个互补的几何视角阐明了信息组分的结构起源。我们在经典逻辑函数、康威生命游戏以及基于基因表达的癌症药物反应预测上展示了FID的跨学科实用性,并提供了开源实现。通过将功能架构与观测分布分离开来,FID为分析完全观测与部分观测的复杂系统中的多元依赖性提供了一个原理性的基础。