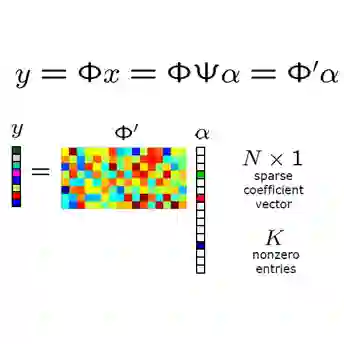

This work addresses the fundamental linear inverse problem in compressive sensing (CS) by introducing a new type of regularizing generative prior. The proposed method draws on classical dictionary-based CS and, in particular, sparse Bayesian learning (SBL) to impose strong regularization toward sparse solutions. At the same time, by leveraging conditional Gaussianity, it retains the adaptability of generative models to training data. Unlike most state-of-the-art generative models, however, it can learn from only a few compressed and noisy data samples and requires no optimization algorithm to solve the inverse problem. Additionally, similar to Dirichlet prior networks, our model parameterizes a conjugate prior, which enables its use for uncertainty quantification. We support our approach theoretically through variational inference and validate it empirically on different types of compressible signals.
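To make the role of conditional Gaussianity concrete, the following is a minimal sketch of the classical SBL-style setting, not the authors' model. It assumes the measurement model y = A D s + n with a zero-mean Gaussian prior on s whose per-coefficient variances `gamma` are given. Under that assumption the posterior mean is available in closed form, which is one way to read the claim that no iterative optimization is needed at inference time. The function name, the identity dictionary, the problem sizes, and the oracle choice of `gamma` are all illustrative assumptions; how the proposed method obtains such variances is not described in this abstract.

```python
import numpy as np

def conditional_gaussian_posterior_mean(y, A, D, gamma, noise_var):
    """Posterior mean of x = D s given y = A D s + n,
    with s ~ N(0, diag(gamma)) and n ~ N(0, noise_var * I).
    (Hypothetical helper for illustration only.)"""
    B = A @ D                                    # effective sensing matrix, shape (m, k)
    # Covariance of y: B diag(gamma) B^T + noise_var * I
    C_y = (B * gamma) @ B.T + noise_var * np.eye(B.shape[0])
    # Standard Gaussian conditioning: E[s | y] = diag(gamma) B^T C_y^{-1} y
    s_mean = gamma * (B.T @ np.linalg.solve(C_y, y))
    return D @ s_mean, s_mean

# Toy problem (all sizes and values are assumptions for this sketch)
rng = np.random.default_rng(0)
n, m, k = 64, 32, 4                              # signal dim, measurements, sparsity
D = np.eye(n)                                    # identity dictionary for simplicity
A = rng.standard_normal((m, n)) / np.sqrt(m)     # random Gaussian measurement matrix
support = rng.choice(n, size=k, replace=False)
s_true = np.zeros(n)
s_true[support] = rng.standard_normal(k)
noise_var = 1e-4
y = A @ D @ s_true + np.sqrt(noise_var) * rng.standard_normal(m)

# Oracle variances: large on the true support, near zero elsewhere.
# In practice these would have to be estimated; here they are assumed known.
gamma = np.full(n, 1e-8)
gamma[support] = 1.0

x_hat, s_hat = conditional_gaussian_posterior_mean(y, A, D, gamma, noise_var)
rel_err = np.linalg.norm(x_hat - D @ s_true) / np.linalg.norm(D @ s_true)
print(f"relative reconstruction error: {rel_err:.3e}")
```

Small entries in `gamma` shrink the corresponding coefficients toward zero, which is how a variance vector encodes sparsity in this setting. The reconstruction itself reduces to a single linear solve.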
