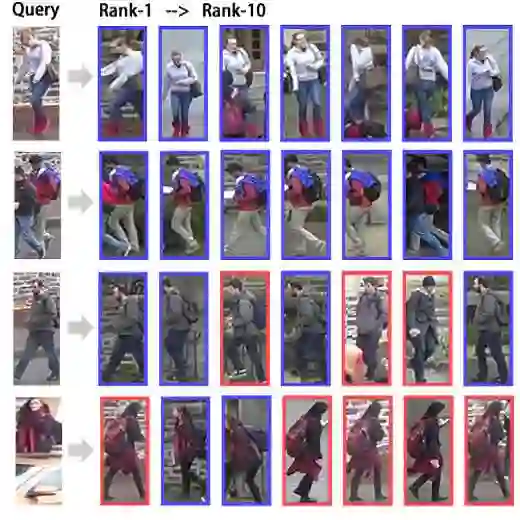

Aerial-Ground person re-identification (AG-ReID) is an emerging task that aims to match pedestrian images captured from drastically different viewpoints, typically unmanned aerial vehicles (UAVs) and ground-based surveillance cameras. The task is challenging because of extreme viewpoint discrepancies, occlusions, and the domain gap between aerial and ground imagery. Prior works have made progress by learning cross-view representations, but they remain limited in handling severe pose variations and spatial misalignment. To address these issues, we propose a Geometric and Semantic Alignment Network (GSAlign) tailored for AG-ReID. GSAlign introduces two key components that jointly tackle geometric distortion and semantic misalignment in aerial-ground matching: a Learnable Thin Plate Spline (LTPS) module and a Dynamic Alignment Module (DAM). The LTPS module adaptively warps pedestrian features based on a set of learned keypoints, compensating for geometric variations caused by extreme viewpoint changes. In parallel, the DAM estimates visibility-aware representation masks that highlight visible body regions at the semantic level, thereby alleviating the negative impact of occlusions and partial observations on cross-view correspondence. A comprehensive evaluation on the CARGO dataset under four matching protocols demonstrates the effectiveness of GSAlign, which improves mAP by +18.8% and Rank-1 accuracy by +16.8% over previous state-of-the-art methods in the aerial-ground setting.
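To make the thin plate spline idea behind the LTPS module more concrete, the following is a minimal sketch of a classical 2D thin plate spline fit in NumPy. It is not the GSAlign implementation: the function names (`tps_kernel`, `fit_tps`, `apply_tps`) and the control points are hypothetical, the keypoints here are fixed by hand instead of learned, and the warp is applied to point coordinates instead of a feature map.

```python
import numpy as np


def tps_kernel(r2):
    """Radial basis U(r) = r^2 log r, evaluated from squared distances (U(0) = 0)."""
    out = np.zeros_like(r2, dtype=float)
    nz = r2 > 0
    out[nz] = 0.5 * r2[nz] * np.log(r2[nz])  # r^2 log r = 0.5 * r^2 * log(r^2)
    return out


def fit_tps(src, dst):
    """Solve for TPS parameters that map control points src (n, 2) onto dst (n, 2).

    Returns an (n + 3, 2) array: n radial weights followed by 3 affine rows.
    Assumes the control points are distinct and not all collinear.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    n = src.shape[0]
    d2 = np.sum((src[:, None, :] - src[None, :, :]) ** 2, axis=-1)
    K = tps_kernel(d2)
    P = np.hstack([np.ones((n, 1)), src])  # homogeneous coordinates [1, x, y]
    L = np.zeros((n + 3, n + 3))
    L[:n, :n] = K
    L[:n, n:] = P
    L[n:, :n] = P.T
    rhs = np.zeros((n + 3, 2))
    rhs[:n] = dst
    return np.linalg.solve(L, rhs)


def apply_tps(params, src, pts):
    """Warp arbitrary points pts (m, 2) with parameters fitted on control points src."""
    src = np.asarray(src, dtype=float)
    pts = np.asarray(pts, dtype=float)
    n = src.shape[0]
    d2 = np.sum((pts[:, None, :] - src[None, :, :]) ** 2, axis=-1)
    U = tps_kernel(d2)
    P = np.hstack([np.ones((pts.shape[0], 1)), pts])
    return U @ params[:n] + P @ params[n:]


# Hypothetical control points: four corners and the centre of a unit square.
src = np.array([[0, 0], [1, 0], [0, 1], [1, 1], [0.5, 0.5]], dtype=float)
dst = src.copy()
dst[4] = [0.6, 0.4]  # displace the centre keypoint only

params = fit_tps(src, dst)
warped = apply_tps(params, src, src)  # control points land on their targets
```

In a learnable variant, the target keypoints would presumably be predicted by the network and the fitted warp would define a sampling grid over the feature map; both steps are outside the scope of this sketch.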
