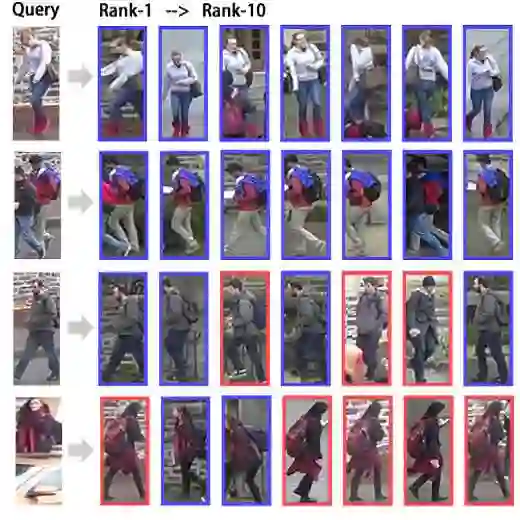

Unlike conventional person re-identification (ReID), clothes-changing ReID (CC-ReID) must cope with the substantial appearance variations introduced by clothing changes. In this work, we propose Quality-Aware Dual-Branch Matching (QA-ReID), which jointly leverages RGB-based features and parsing-based representations to model both global appearance and clothing-invariant structural cues. These heterogeneous features are adaptively fused through a multi-modal attention module. At the matching stage, we further design Quality-Aware Query Adaptive Convolution (QAConv-QA), which incorporates pixel-level importance weighting and bidirectional consistency constraints to improve robustness to clothing variations. Extensive experiments demonstrate that QA-ReID achieves state-of-the-art performance on multiple benchmarks, including PRCC, LTCC, and VC-Clothes, and significantly outperforms existing approaches in cross-clothing scenarios.
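
The abstract does not give the exact formulation of the fusion module or of QAConv-QA, so the following is only a minimal NumPy sketch of the two ideas under stated assumptions, not the authors' implementation. It assumes each image yields per-location feature matrices of shape `(N, C)` for the RGB and parsing branches, that fusion is a per-location softmax gate over the two branches, and that matching averages quality-weighted best-match similarities in both directions (query to gallery and gallery to query). The names `fuse_branches`, `quality_weighted_match`, `gate_w`, `q_quality`, and `g_quality` are hypothetical.

```python
import numpy as np


def l2_normalize(x, axis=-1, eps=1e-12):
    """Scale vectors along `axis` to (approximately) unit L2 norm."""
    return x / (np.linalg.norm(x, axis=axis, keepdims=True) + eps)


def softmax(x, axis=-1):
    """Numerically stable softmax along `axis`."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def fuse_branches(rgb_feat, parsing_feat, gate_w):
    """Attention-style fusion of two branches (assumed form).

    rgb_feat, parsing_feat: (N, C) per-location features.
    gate_w: (2C, 2) hypothetical learned projection producing one
            logit per branch at every location.
    """
    logits = np.concatenate([rgb_feat, parsing_feat], axis=-1) @ gate_w  # (N, 2)
    alpha = softmax(logits, axis=-1)  # branch weights sum to 1 per location
    return alpha[:, :1] * rgb_feat + alpha[:, 1:] * parsing_feat


def quality_weighted_match(query, gallery, q_quality, g_quality):
    """Bidirectional, quality-weighted local matching score (assumed form).

    query: (Nq, C), gallery: (Ng, C) per-location features.
    q_quality: (Nq,), g_quality: (Ng,) positive per-location importance.
    """
    sim = l2_normalize(query) @ l2_normalize(gallery).T  # cosine similarities, (Nq, Ng)
    wq = q_quality / q_quality.sum()  # normalize importance to sum to 1
    wg = g_quality / g_quality.sum()
    q2g = (wq * sim.max(axis=1)).sum()  # best gallery match per query location
    g2q = (wg * sim.max(axis=0)).sum()  # best query match per gallery location
    return 0.5 * (q2g + g2q)  # average of the two directions


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    rgb = rng.normal(size=(6, 4))
    parsing = rng.normal(size=(6, 4))
    gate_w = rng.normal(size=(8, 2))
    fused = fuse_branches(rgb, parsing, gate_w)
    quality = rng.uniform(0.1, 1.0, size=6)
    print(fused.shape, quality_weighted_match(fused, fused, quality, quality))
```

Averaging the two directions makes the score symmetric in its arguments, which is one simple way to read "bidirectional consistency"; the paper may instead enforce it as a constraint or a loss term.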
