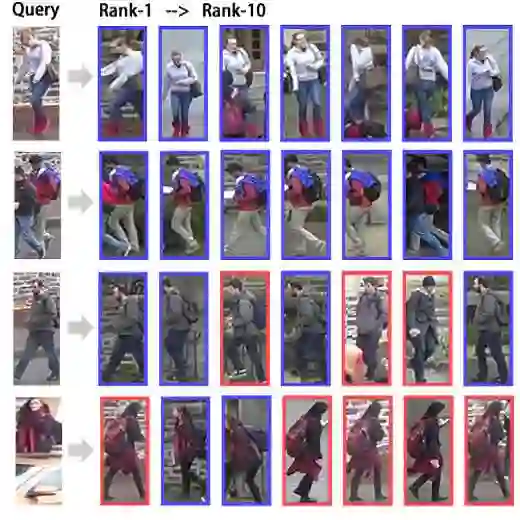

Generalizable image-based person re-identification (Re-ID) aims to recognize individuals across cameras in unseen domains without retraining. While many existing approaches address the domain gap through complex architectures, recent findings indicate that better generalization can be achieved by training on stylistically diverse single-camera data. Although such data is easy to collect, it lacks complexity because it contains minimal cross-view variation. We propose ReText, a novel method trained on a mixture of multi-camera Re-ID data and single-camera data, where the latter is complemented by textual descriptions to enrich semantic cues. During training, ReText jointly optimizes three tasks: (1) Re-ID on multi-camera data, and, on single-camera data, (2) image-text matching and (3) text-guided image reconstruction. Experiments demonstrate that ReText achieves strong generalization and significantly outperforms state-of-the-art methods on cross-domain Re-ID benchmarks. To the best of our knowledge, this is the first work to explore multimodal joint learning on a mixture of multi-camera and single-camera data in image-based person Re-ID.
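The abstract does not state which loss functions or weights ReText uses. As a minimal sketch, assuming softmax cross-entropy for identity classification on multi-camera data, a symmetric InfoNCE contrastive loss for image-text matching, and pixel-wise MSE for text-guided reconstruction on single-camera data, the three tasks could be combined as a weighted sum. All function names, the temperature of 0.07, and the weights `w_itm` and `w_rec` are hypothetical illustrations, not the paper's actual formulation.

```python
import math
from typing import List, Sequence

# Hypothetical sketch of a three-task joint objective in the spirit of ReText.
# Loss choices, temperature and weights are assumptions, not the paper's method.


def cross_entropy(logits: Sequence[float], target: int) -> float:
    """Numerically stable softmax cross-entropy for a single sample."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity, guarded against zero-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / max(norm, 1e-12)


def reid_loss(logits: List[List[float]], ids: List[int]) -> float:
    """Task (1): identity classification on a multi-camera batch."""
    return sum(cross_entropy(z, y) for z, y in zip(logits, ids)) / len(ids)


def itm_loss(img: List[List[float]], txt: List[List[float]],
             temperature: float = 0.07) -> float:
    """Task (2): symmetric InfoNCE, assuming image k is paired with caption k."""
    n = len(img)
    sim = [[cosine(i, t) / temperature for t in txt] for i in img]
    i2t = sum(cross_entropy(sim[k], k) for k in range(n)) / n
    t2i = sum(cross_entropy([sim[j][k] for j in range(n)], k) for k in range(n)) / n
    return 0.5 * (i2t + t2i)


def rec_loss(recon: Sequence[float], pixels: Sequence[float]) -> float:
    """Task (3): pixel-wise MSE between a text-guided reconstruction and the input."""
    return sum((r - p) ** 2 for r, p in zip(recon, pixels)) / len(pixels)


def joint_loss(reid_logits, reid_ids, img_emb, txt_emb, recon, pixels,
               w_itm: float = 1.0, w_rec: float = 1.0) -> float:
    """Weighted sum of the three task losses (weights are hypothetical)."""
    return (reid_loss(reid_logits, reid_ids)
            + w_itm * itm_loss(img_emb, txt_emb)
            + w_rec * rec_loss(recon, pixels))


if __name__ == "__main__":
    # Toy inputs: 2 multi-camera samples over 3 identities, and
    # 2 single-camera images with 2-D embeddings and their captions.
    total = joint_loss(
        reid_logits=[[2.0, 0.1, -1.0], [0.0, 1.5, 0.2]],
        reid_ids=[0, 1],
        img_emb=[[1.0, 0.0], [0.0, 1.0]],
        txt_emb=[[0.9, 0.1], [0.1, 0.9]],
        recon=[0.5, 0.4, 0.6],
        pixels=[0.5, 0.5, 0.5],
    )
    print(f"joint loss: {total:.4f}")
```

Keeping the two single-camera losses as separately weighted terms makes it easy to ablate either auxiliary task by setting its weight to zero.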