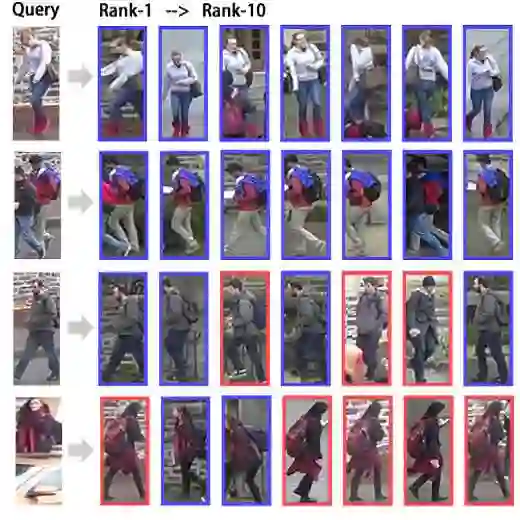

Text-to-image person re-identification (TIReID) aims to retrieve person images from a large gallery given free-form textual descriptions. TIReID is challenging for two reasons: the substantial modality gap between visual appearance and textual expression, and the need to model fine-grained correspondences that distinguish individuals with similar attributes such as clothing color, texture, or outfit style. To address these issues, we propose DiCo (Disentangled Concept Representation), a novel framework that achieves hierarchical and disentangled cross-modal alignment. DiCo introduces a shared slot-based representation in which each slot acts as a part-level anchor across modalities and is further decomposed into multiple concept blocks. This design disentangles complementary attributes (e.g., color, texture, shape) while maintaining consistent part-level correspondence between image and text. Extensive experiments on CUHK-PEDES, ICFG-PEDES, and RSTPReid demonstrate that our framework achieves performance competitive with state-of-the-art methods, while also enhancing interpretability through explicit slot- and block-level representations that support more fine-grained retrieval results.
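
To make the slot and concept-block idea concrete, the following is a minimal illustrative sketch, not the DiCo implementation. It assumes that each slot is a fixed-length vector, that concept blocks are equal-sized contiguous chunks of that vector, and that image and text are compared block by block with cosine similarity and then averaged. The abstract does not specify any of these choices, and the names `split_into_blocks`, `block_similarities`, and `match_score` are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def split_into_blocks(slot: Vector, num_blocks: int) -> List[Vector]:
    """Split one slot vector into equal-sized contiguous concept blocks.

    Assumption: blocks are plain chunks of the slot vector.
    """
    if num_blocks <= 0 or len(slot) % num_blocks != 0:
        raise ValueError("slot dimension must be divisible by num_blocks")
    size = len(slot) // num_blocks
    return [slot[i * size:(i + 1) * size] for i in range(num_blocks)]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def block_similarities(
    image_slots: List[Vector], text_slots: List[Vector], num_blocks: int
) -> List[List[float]]:
    """Return sims[k][b], the similarity of concept block b within slot k.

    Slot k of the image is compared only with slot k of the text, which is
    how this sketch models a shared part-level anchor.
    """
    if len(image_slots) != len(text_slots):
        raise ValueError("image and text must use the same number of slots")
    sims = []
    for img_slot, txt_slot in zip(image_slots, text_slots):
        img_blocks = split_into_blocks(img_slot, num_blocks)
        txt_blocks = split_into_blocks(txt_slot, num_blocks)
        sims.append([cosine(i, t) for i, t in zip(img_blocks, txt_blocks)])
    return sims


def match_score(
    image_slots: List[Vector], text_slots: List[Vector], num_blocks: int
) -> float:
    """Average all block-level similarities into one retrieval score.

    Assumption: a plain mean; the actual aggregation is not given.
    """
    sims = block_similarities(image_slots, text_slots, num_blocks)
    flat = [s for row in sims for s in row]
    return sum(flat) / len(flat)


# Toy example: 2 slots, each of dimension 4, split into 2 concept blocks.
image_slots = [[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 1.0, 0.0]]
text_slots = [[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 0.0, 1.0]]
print(block_similarities(image_slots, text_slots, num_blocks=2))
print(match_score(image_slots, text_slots, num_blocks=2))
```

In this toy example the per-block similarity table shows which slot and which concept block disagree between image and text (here, the second block of the second slot), which is the kind of slot- and block-level inspection the abstract associates with interpretability.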
