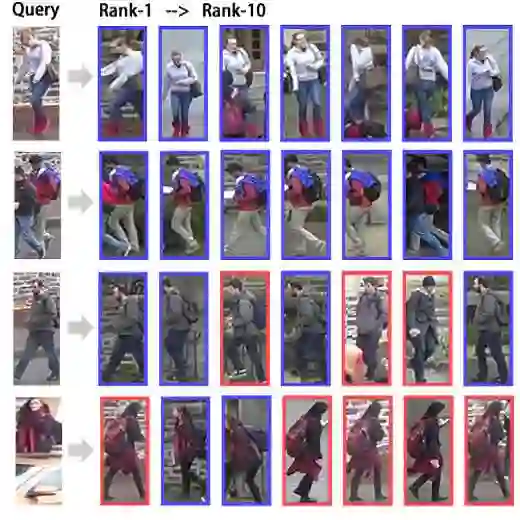

Both fine-grained discriminative details and global semantic features help address person re-identification challenges such as occlusion and pose variation. Vision foundation models (\textit{e.g.}, DINO) excel at mining local textures, whereas vision-language models (\textit{e.g.}, CLIP) capture strong global semantic differences. Existing methods predominantly rely on a single paradigm, neglecting the potential benefits of integrating the two. In this paper, we analyze the complementary roles of these two architectures and propose a framework that combines their strengths through a \textbf{D}ual-\textbf{R}egularized Bidirectional \textbf{Transformer} (\textbf{DRFormer}). The dual-regularization mechanism encourages diverse feature extraction and better balances the contributions of the two models. Extensive experiments on five benchmarks show that our method effectively harmonizes local and global representations, achieving competitive performance against state-of-the-art methods.