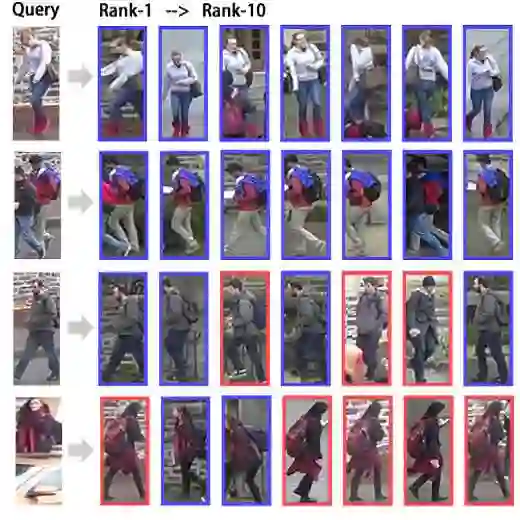

Person Re-Identification (ReID) remains a challenging problem in computer vision. This work reviews several training paradigms, evaluates the robustness of state-of-the-art ReID models in cross-domain applications, and examines the role of foundation models in improving generalization through richer, more transferable visual representations. We compare three training paradigms: supervised, self-supervised, and language-aligned models. The study aims to answer the following questions: Can supervised models generalize in cross-domain scenarios? How do foundation models such as SigLIP2 perform on ReID tasks? What are the weaknesses of current supervised and foundation models for ReID? We have conducted the analysis across 11 models and 9 datasets. Our results show a clear split: supervised models dominate their training domain but crumble on cross-domain data. Language-aligned models, however, show surprising cross-domain robustness on ReID tasks, even though they are not explicitly trained for them. Code and data are available at: https://github.com/moiiai-tech/object-reid-benchmark.
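The abstract does not spell out the evaluation protocol. The sketch below is a minimal illustration that assumes the common ReID retrieval setup: each model maps images to embedding vectors, and each query is matched against a gallery by cosine similarity. Rank-1 accuracy and mean average precision (mAP) are then computed from the ranked gallery. The function names (`cosine`, `evaluate_reid`) and the toy data are hypothetical. The sketch omits details that standard benchmark protocols add, such as excluding same-camera gallery matches. In a cross-domain test, the embeddings would come from a model trained on a different dataset than the one supplying the query and gallery images.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two embedding vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def evaluate_reid(
    query_feats: List[Sequence[float]],
    query_ids: List[int],
    gallery_feats: List[Sequence[float]],
    gallery_ids: List[int],
) -> Tuple[float, float]:
    """Hypothetical helper: return (rank-1 accuracy, mAP) for query-to-gallery retrieval.

    Assumption: no camera-based filtering, unlike standard benchmark protocols.
    """
    rank1_hits = 0
    ap_sum = 0.0
    for qf, qid in zip(query_feats, query_ids):
        # Rank gallery entries by descending similarity to this query.
        order = sorted(
            range(len(gallery_feats)),
            key=lambda j: cosine(qf, gallery_feats[j]),
            reverse=True,
        )
        matches = [gallery_ids[j] == qid for j in order]
        # Rank-1: the top-ranked gallery entry shares the query identity.
        if matches and matches[0]:
            rank1_hits += 1
        # Average precision: mean of precision@k at each correct-match position k.
        hits = 0
        precisions = []
        for k, is_match in enumerate(matches, start=1):
            if is_match:
                hits += 1
                precisions.append(hits / k)
        ap_sum += sum(precisions) / len(precisions) if precisions else 0.0
    n = len(query_ids)
    return rank1_hits / n, ap_sum / n


if __name__ == "__main__":
    # Toy 2-D embeddings: the second query's nearest neighbour has the wrong identity.
    queries = [[1.0, 0.0], [0.6, 0.8]]
    q_ids = [1, 2]
    gallery = [[0.9, 0.1], [0.1, 0.9], [0.7, 0.7]]
    g_ids = [1, 2, 1]
    r1, m_ap = evaluate_reid(queries, q_ids, gallery, g_ids)
    print(f"rank-1={r1:.2f}  mAP={m_ap:.2f}")  # rank-1=0.50  mAP=0.75
```

In the toy example, the first query retrieves both identity-1 images first (AP = 1.0). The second query ranks an identity-1 image above its true match (AP = 0.5), which gives rank-1 = 0.5 and mAP = 0.75. A model that "crumbles" cross-domain would show this kind of drop on unseen datasets.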
