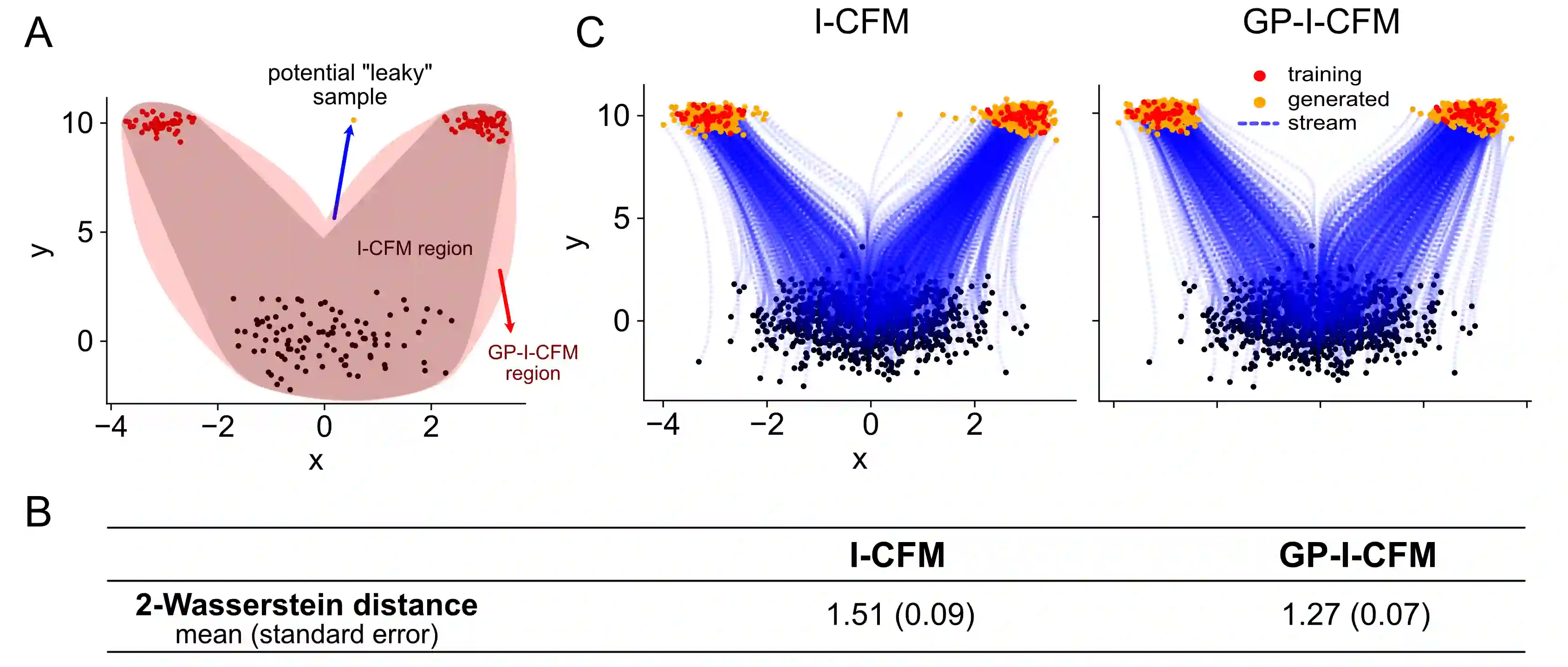

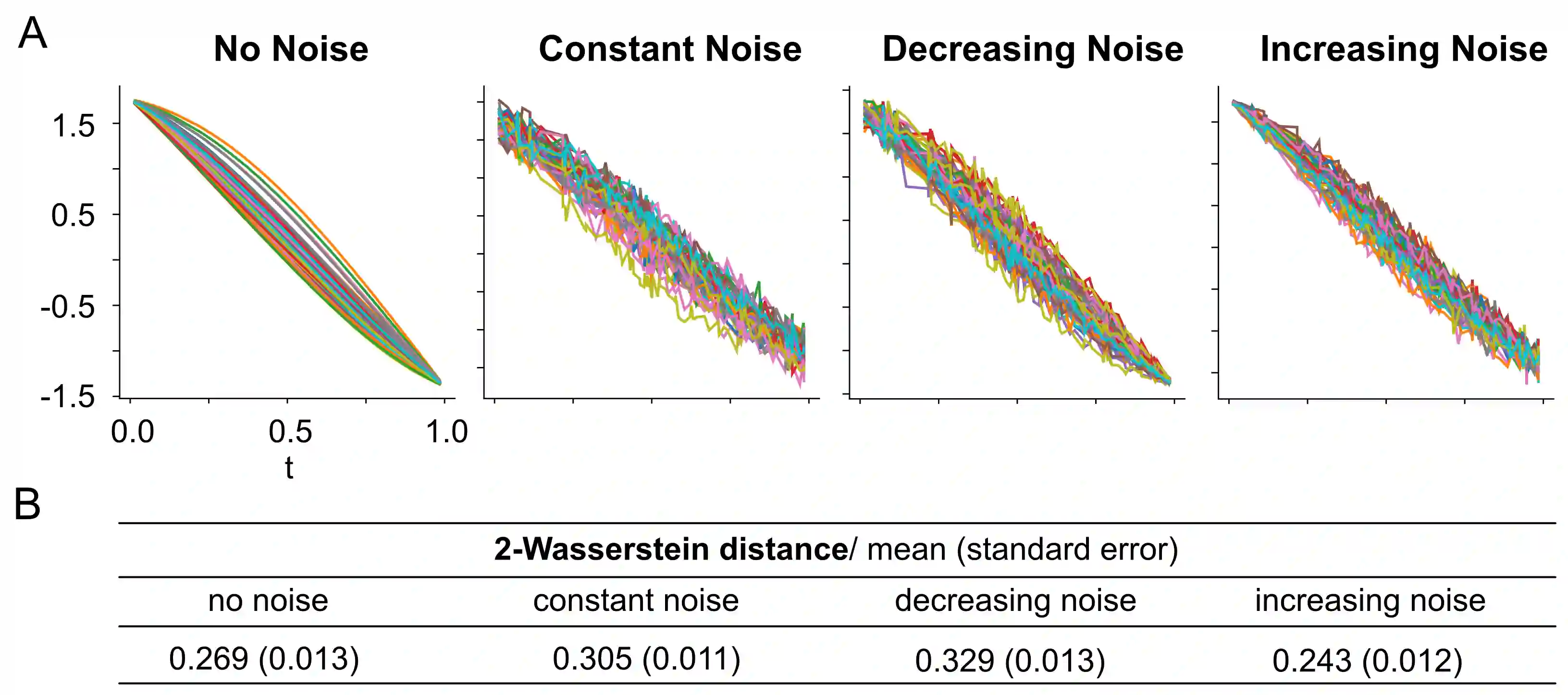

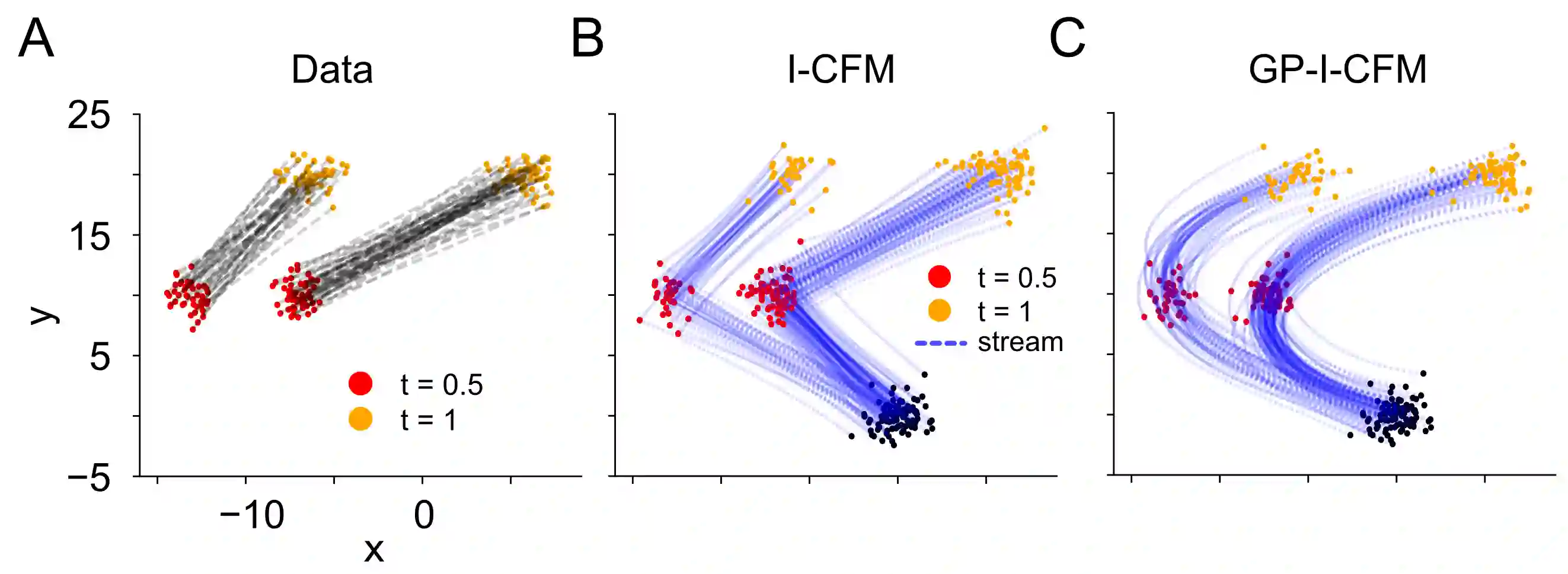

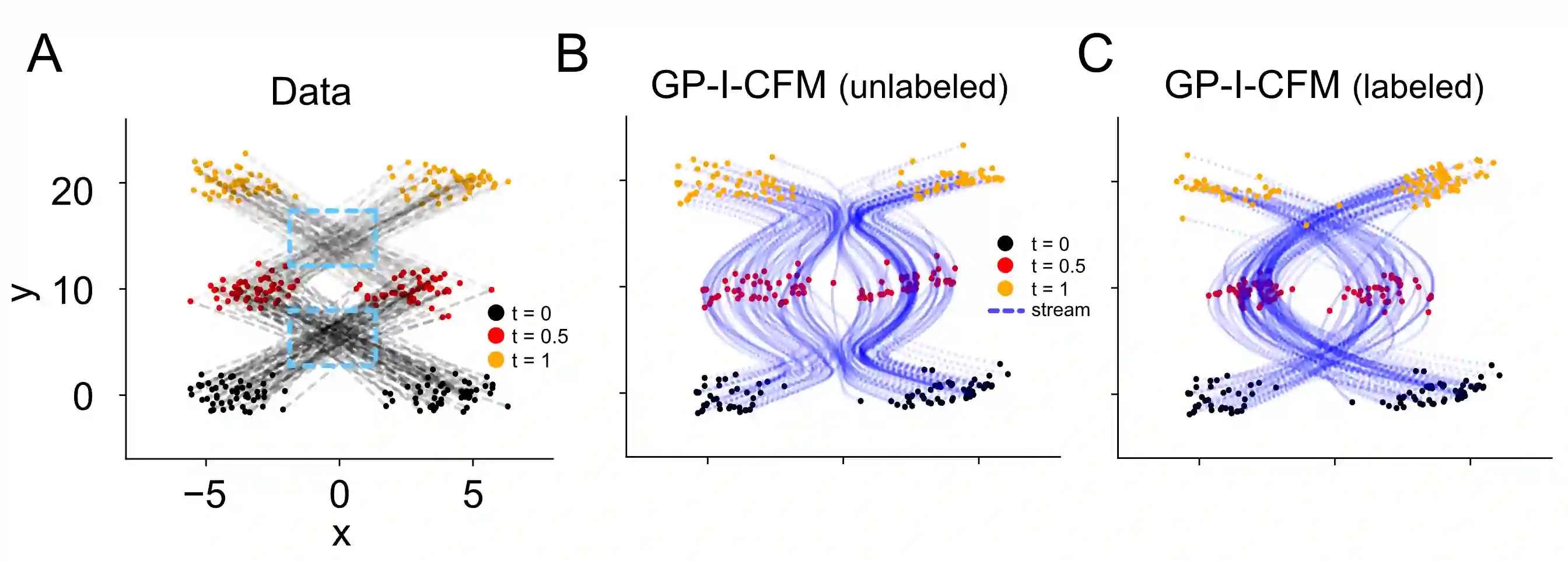

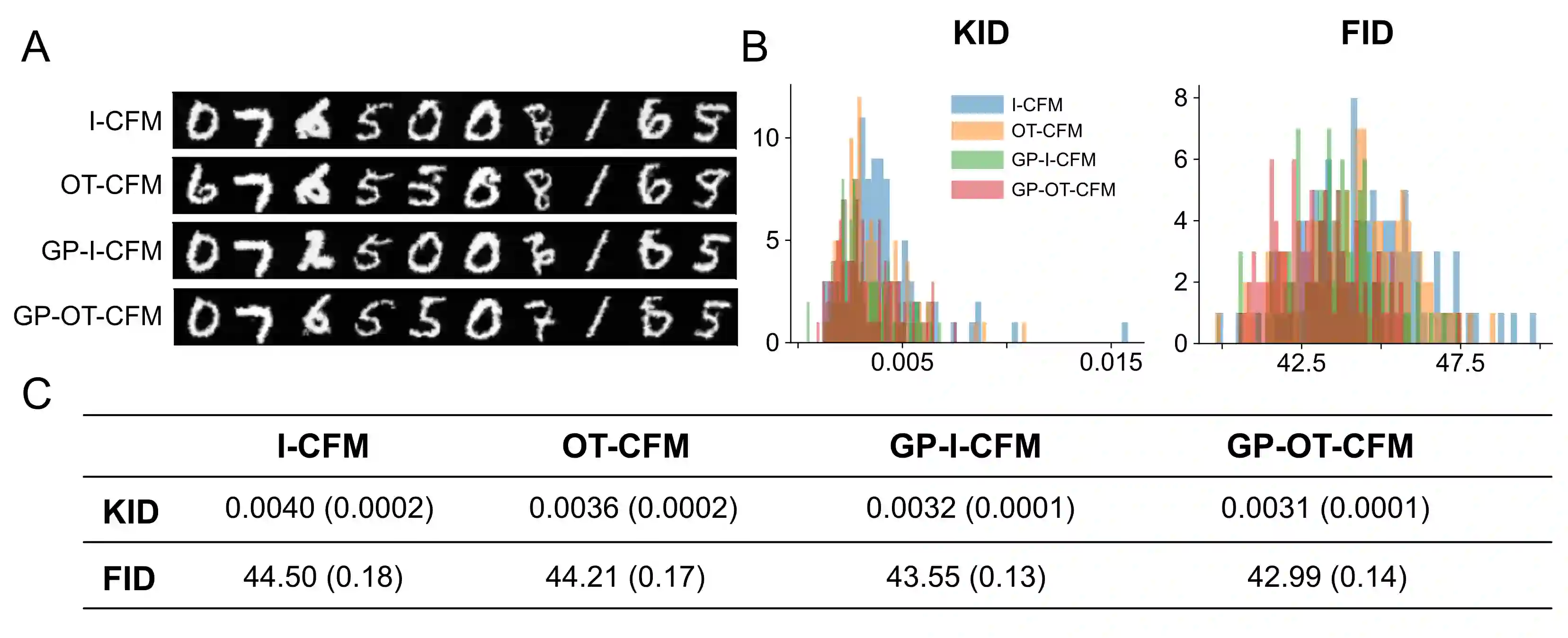

Flow matching (FM) is a family of training algorithms for fitting continuous normalizing flows (CNFs). A standard approach to FM, called conditional flow matching (CFM), exploits the fact that the marginal vector field of a CNF can be learned by least-squares regression onto the so-called conditional vector field, which is specified given one or both endpoints of the flow path. We show that viewing CFM training from a Bayesian decision-theoretic perspective on parameter estimation opens the door to generalizations of CFM algorithms. We propose one such extension: a CFM algorithm based on conditional probability paths defined given what we refer to as "streams", instances of latent stochastic paths that connect pairs of noise and observed data. Further, we advocate modeling these latent streams using Gaussian processes (GPs). The unique distributional properties of GPs, in particular the fact that the velocity of a GP is itself a GP, allow samples to be drawn from the resulting stream-augmented conditional probability path without simulating the actual streams, so the "simulation-free" nature of CFM training is preserved. We show that this generalization of CFM can substantially reduce the variance of the estimated marginal vector field at a moderate computational cost, thereby improving the quality of the generated samples under common metrics. Additionally, we show that placing a GP on the streams allows multiple related training data points (e.g., time series) to be linked flexibly and additional prior information to be incorporated. We empirically validate our claims through both simulations and applications to two handwritten image datasets.
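To make the simulation-free claim concrete, the following is a minimal sketch of how a training pair (position, velocity) could be drawn at a single time t without simulating a whole stream. It is not the authors' implementation. It assumes, purely for illustration, a squared-exponential kernel, an independent GP per coordinate, a linear-interpolation mean between the noise sample `x0` and the data point `x1`, and a stream pinned to both endpoints; the names `stream_cov` and `sample_xt_vt` and the hyperparameters `sigma`, `ell`, and `jitter` are hypothetical. Because differentiation is a linear operation, the stream value and its velocity at time t are jointly Gaussian given the endpoints, so the pair can be sampled directly from a 2x2 covariance.

```python
import math
import random


def stream_cov(t, sigma=0.5, ell=0.5, jitter=1e-8):
    """Covariance (var_s, cov_sv, var_v) of (s(t), s'(t)) for a zero-mean
    squared-exponential GP conditioned on s(0) = s(1) = 0.
    Kernel choice and hyperparameters are illustrative assumptions."""
    def k(d):
        return sigma ** 2 * math.exp(-d * d / (2 * ell ** 2))

    # Gram matrix of the two endpoints, [[a, b], [b, a]], and its inverse.
    a, b = k(0.0) + jitter, k(1.0)
    det = a * a - b * b
    inv = [[a / det, -b / det], [-b / det, a / det]]

    d = [t - 0.0, t - 1.0]
    cs = [k(di) for di in d]                   # cov(s(t),  s(endpoint))
    cv = [-k(di) * di / ell ** 2 for di in d]  # cov(s'(t), s(endpoint)) = dk/dt

    def quad(u, w):
        return sum(u[i] * inv[i][j] * w[j] for i in range(2) for j in range(2))

    # Standard Gaussian conditioning: prior covariance minus explained part.
    var_s = sigma ** 2 - quad(cs, cs)
    cov_sv = 0.0 - quad(cs, cv)                # prior cov(s(t), s'(t)) is 0
    var_v = sigma ** 2 / ell ** 2 - quad(cv, cv)
    return var_s, cov_sv, var_v


def sample_xt_vt(x0, x1, t, rng, **gp):
    """Draw (x_t, v_t) for one noise/data pair; coordinates are independent."""
    var_s, cov_sv, var_v = stream_cov(t, **gp)
    # Guarded 2x2 Cholesky factor (the variance of s(t) vanishes at t = 0, 1).
    l11 = math.sqrt(max(var_s, 0.0))
    l21 = cov_sv / l11 if l11 > 0.0 else 0.0
    l22 = math.sqrt(max(var_v - l21 * l21, 0.0))
    x_t, v_t = [], []
    for a, b in zip(x0, x1):
        z1, z2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        x_t.append((1 - t) * a + t * b + l11 * z1)   # mean: linear interpolation
        v_t.append((b - a) + l21 * z1 + l22 * z2)    # mean: straight-line velocity
    return x_t, v_t
```

In a training loop, `v_t` would serve as the least-squares regression target for a vector-field model evaluated at `(x_t, t)`. Under these assumptions, setting `sigma=0.0` collapses the stream onto the straight line between `x0` and `x1`, so the pair reduces to the linear-interpolation point and the constant velocity `x1 - x0`.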