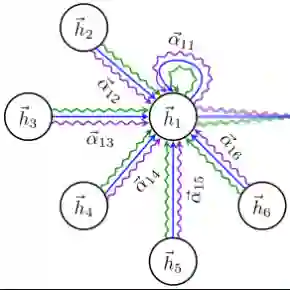

Image classification is a computer vision task in which a model analyzes an image and assigns it a label. Vision Transformers (ViTs) improve on this task by using self-attention to capture complex patterns and long-range relationships between image patches. However, a key challenge for ViTs is efficiently incorporating multi-scale feature representations, which CNNs obtain inherently through their hierarchical structure. In this paper, we introduce the Scale-Aware Graph Attention Vision Transformer (SAG-ViT), a novel framework that addresses this challenge by integrating multi-scale features. Using EfficientNet as a backbone, the model extracts multi-scale feature maps, which are divided into patches to preserve semantic information. These patches are organized into a graph based on spatial and feature similarities, and a Graph Attention Network (GAT) refines the node embeddings. Finally, a Transformer encoder captures long-range dependencies and complex interactions. SAG-ViT is evaluated on benchmark datasets, demonstrating its effectiveness in enhancing image classification performance.
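To make the patch-to-graph step more concrete, here is a minimal sketch in plain Python. It is not the SAG-ViT implementation: the function names (`extract_patches`, `build_patch_graph`), the non-overlapping `k x k` patching, the 8-neighbourhood adjacency rule, and the cosine-similarity threshold are all assumptions chosen for illustration, since the abstract only states that the graph is built from spatial and feature similarities. The backbone, GAT, and Transformer encoder stages are omitted.

```python
import math


def extract_patches(feature_map, k):
    """Split an H x W x C feature map (nested lists) into non-overlapping k x k patches.

    Returns a dict mapping each patch's grid position (row, col) to its
    flattened feature vector. Assumption: patches do not overlap.
    """
    height, width = len(feature_map), len(feature_map[0])
    patches = {}
    for r in range(0, height - k + 1, k):
        for c in range(0, width - k + 1, k):
            vec = [v
                   for i in range(r, r + k)
                   for j in range(c, c + k)
                   for v in feature_map[i][j]]
            patches[(r // k, c // k)] = vec
    return patches


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def build_patch_graph(patches, sim_threshold=0.5):
    """Build undirected edges between patches.

    Assumption: two patches are linked when they are grid neighbours
    (8-neighbourhood) AND their cosine similarity reaches sim_threshold.
    """
    edges = set()
    for (r, c), vec in patches.items():
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                nb = (r + dr, c + dc)
                if nb == (r, c) or nb not in patches:
                    continue
                if cosine(vec, patches[nb]) >= sim_threshold:
                    # Sort the endpoints so each undirected edge is stored once.
                    edges.add(tuple(sorted([(r, c), nb])))
    return edges


# Toy 4 x 4 feature map with 2 channels: the left half and the right half
# carry orthogonal features.
feature_map = [[[1.0, 0.0] if j < 2 else [0.0, 1.0] for j in range(4)]
               for i in range(4)]
patches = extract_patches(feature_map, k=2)
edges = build_patch_graph(patches, sim_threshold=0.5)
print(sorted(edges))
```

In this toy input, the two left-hand patches are linked to each other and the two right-hand patches are linked to each other, while no edge crosses between the halves because their features are orthogonal. In a full pipeline the resulting node vectors and edge list would be passed to a graph attention layer, and the refined node embeddings to a Transformer encoder.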
