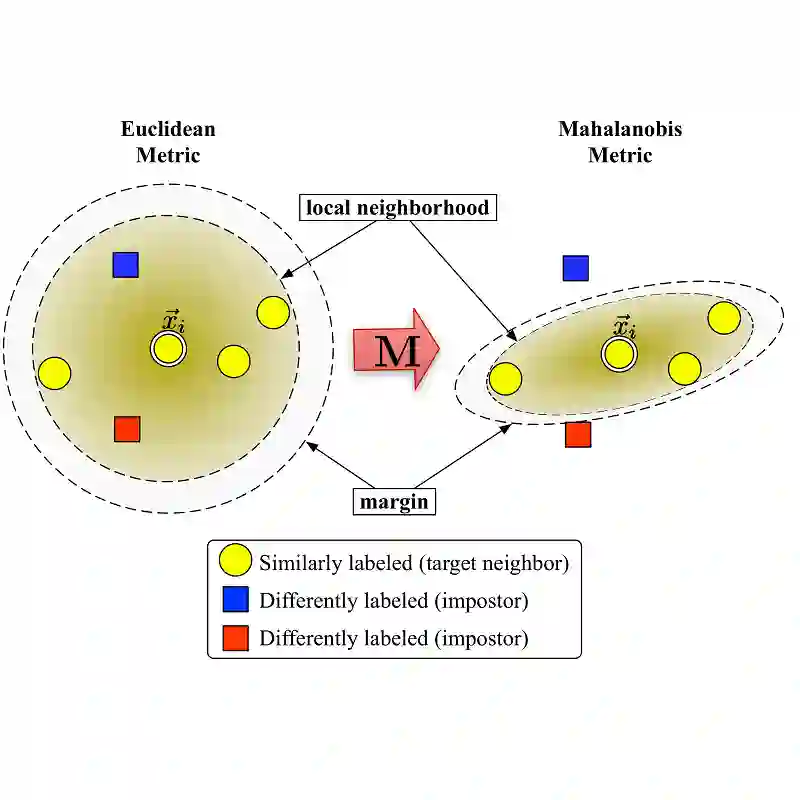

Multi-modal deep metric learning is crucial for effectively capturing diverse representations in tasks such as face verification, fine-grained object recognition, and product search. Traditional approaches to metric learning, whether distance-based or margin-based, primarily emphasize class separation and often overlook the intra-class distribution that is essential for multi-modal feature learning. In this context, we propose a novel loss function called Density-Aware Adaptive Margin Loss (DAAL), which preserves the density distribution of embeddings while encouraging the formation of adaptive sub-clusters within each class. By employing an adaptive line strategy, DAAL not only enhances intra-class variance but also ensures robust inter-class separation, facilitating effective multi-modal representation. Comprehensive experiments on benchmark fine-grained datasets demonstrate the superior performance of DAAL, underscoring its potential in advancing retrieval applications and multi-modal deep metric learning.
