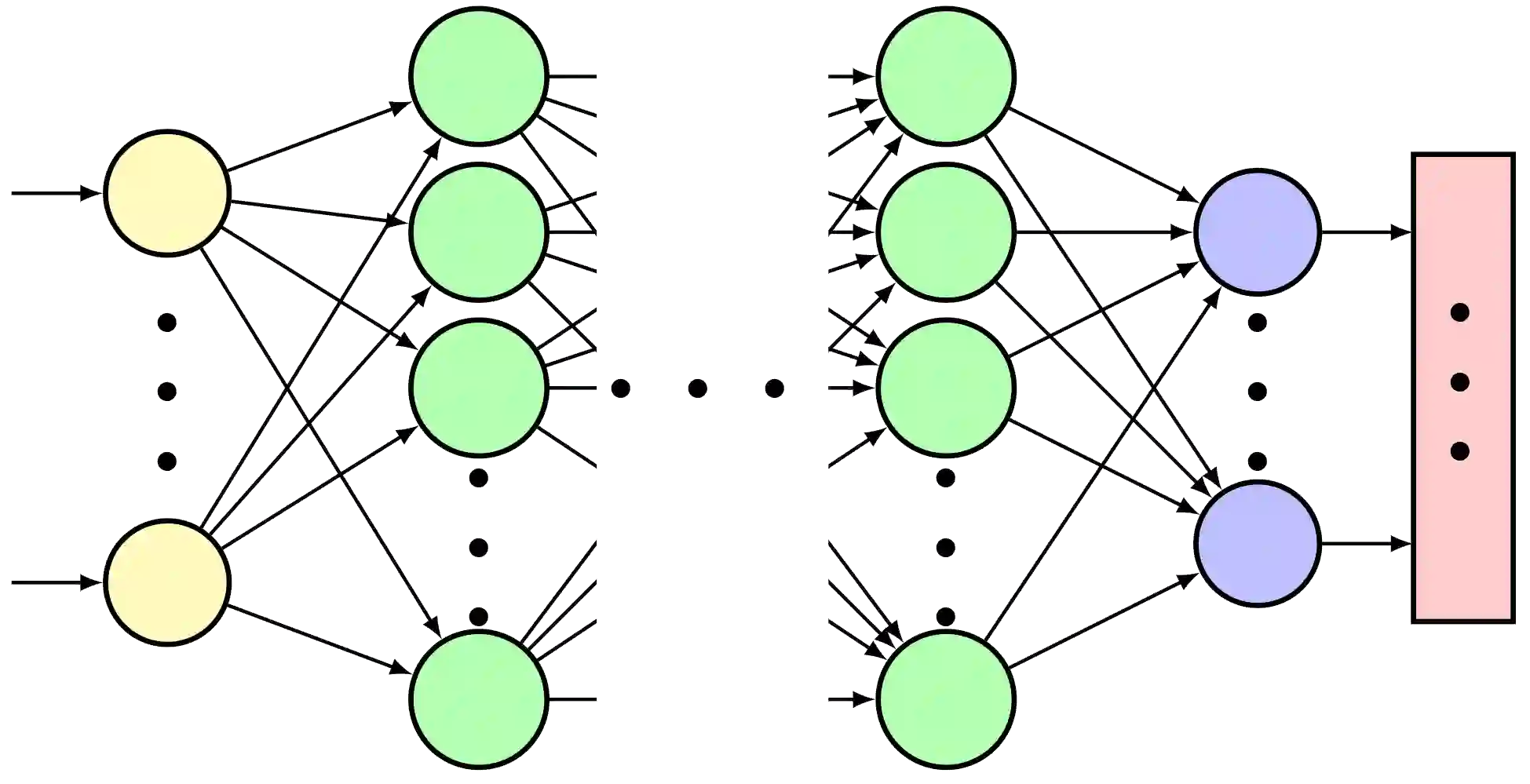

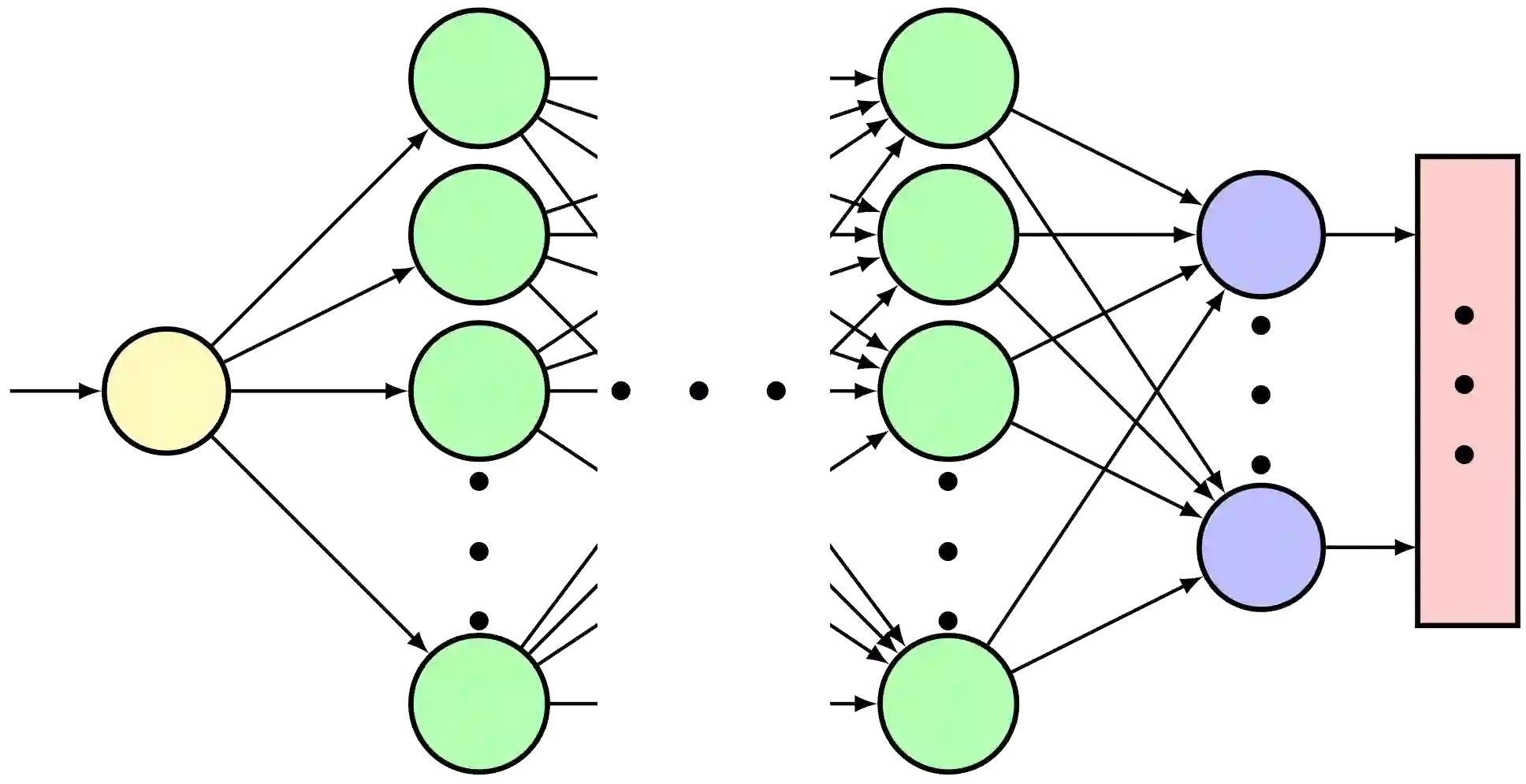

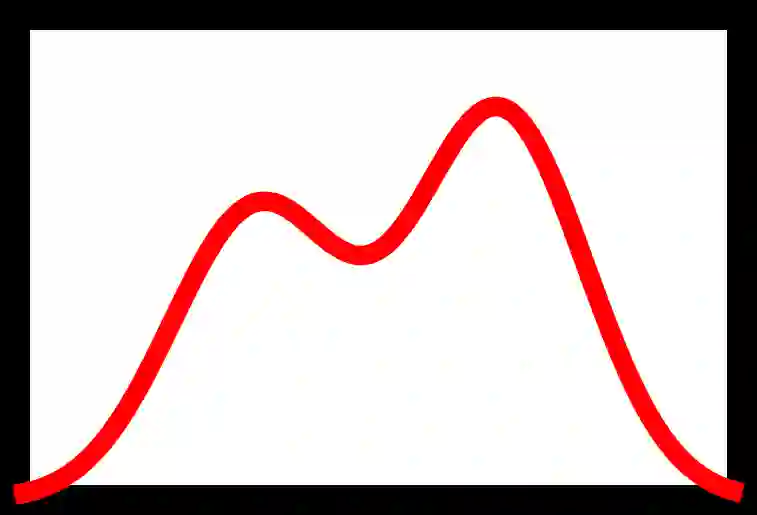

We introduce a deep operator network (DeepONet) framework that incorporates generalised variational inference (GVI) based on Rényi's $\alpha$-divergence to learn complex operators while quantifying uncertainty. Using Bayesian neural networks as the building blocks of the branch and trunk networks endows DeepONet with uncertainty quantification. Replacing the Kullback-Leibler divergence (KLD) commonly used in standard variational inference with Rényi's $\alpha$-divergence mitigates the prior-misspecification issues that are prevalent in variational Bayesian DeepONets, and makes the framework more flexible and robust. We demonstrate that modifying the variational objective in this way yields lower mean squared error and lower negative log-likelihood on the test set. We validate the framework on a range of mechanics problems, including the gravity pendulum, advection-diffusion, and diffusion-reaction systems, where it outperforms both deterministic and standard KLD-based VI DeepONets in predictive accuracy and uncertainty quantification. The hyperparameter $\alpha$, which controls the degree of robustness, can be tuned to optimise performance for a specific problem. Our findings underscore the potential of $\alpha$-VI DeepONet to advance data-driven operator learning and its applications in engineering and scientific domains.
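To make the role of $\alpha$ concrete, the sketch below evaluates Rényi's $\alpha$-divergence between two univariate Gaussians in closed form and compares it with the KLD, which it recovers in the limit $\alpha \to 1$. This is only an illustration of the divergence itself, not the training objective used in this work: the function names `kl_gauss` and `renyi_gauss`, the Gaussian choice, and the numerical values are all assumptions made for the example.

```python
import numpy as np


def kl_gauss(mu_q, sig_q, mu_p, sig_p):
    """KL(q || p) for univariate Gaussians q = N(mu_q, sig_q^2), p = N(mu_p, sig_p^2)."""
    return (np.log(sig_p / sig_q)
            + (sig_q**2 + (mu_q - mu_p)**2) / (2.0 * sig_p**2)
            - 0.5)


def renyi_gauss(alpha, mu_q, sig_q, mu_p, sig_p):
    """Renyi divergence D_alpha(q || p) between two univariate Gaussians.

    Hypothetical helper for illustration. Valid for alpha > 0; alpha = 1 is
    handled as the KL limit. The divergence is finite only when the mixed
    variance alpha * sig_p^2 + (1 - alpha) * sig_q^2 is positive.
    """
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    if abs(alpha - 1.0) < 1e-8:
        return kl_gauss(mu_q, sig_q, mu_p, sig_p)
    var_mix = alpha * sig_p**2 + (1.0 - alpha) * sig_q**2
    if var_mix <= 0:
        raise ValueError("divergence is not finite for this alpha")
    return (np.log(sig_p / sig_q)
            + np.log(sig_p**2 / var_mix) / (2.0 * (alpha - 1.0))
            + alpha * (mu_q - mu_p)**2 / (2.0 * var_mix))


# Illustrative values only: a narrow approximate posterior q against a broad prior p.
mu_q, sig_q, mu_p, sig_p = 0.5, 1.0, 0.0, 2.0
kl_value = kl_gauss(mu_q, sig_q, mu_p, sig_p)
for alpha in (0.5, 0.999, 2.0):
    d = renyi_gauss(alpha, mu_q, sig_q, mu_p, sig_p)
    print(f"alpha={alpha}: D_alpha={d:.4f}  (KL={kl_value:.4f})")
```

Because the divergence is non-decreasing in $\alpha$, values of $\alpha$ below 1 penalise the mismatch between the two distributions less than the KLD does, and values above 1 penalise it more. This is one way to read the statement that $\alpha$ controls the degree of robustness.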
