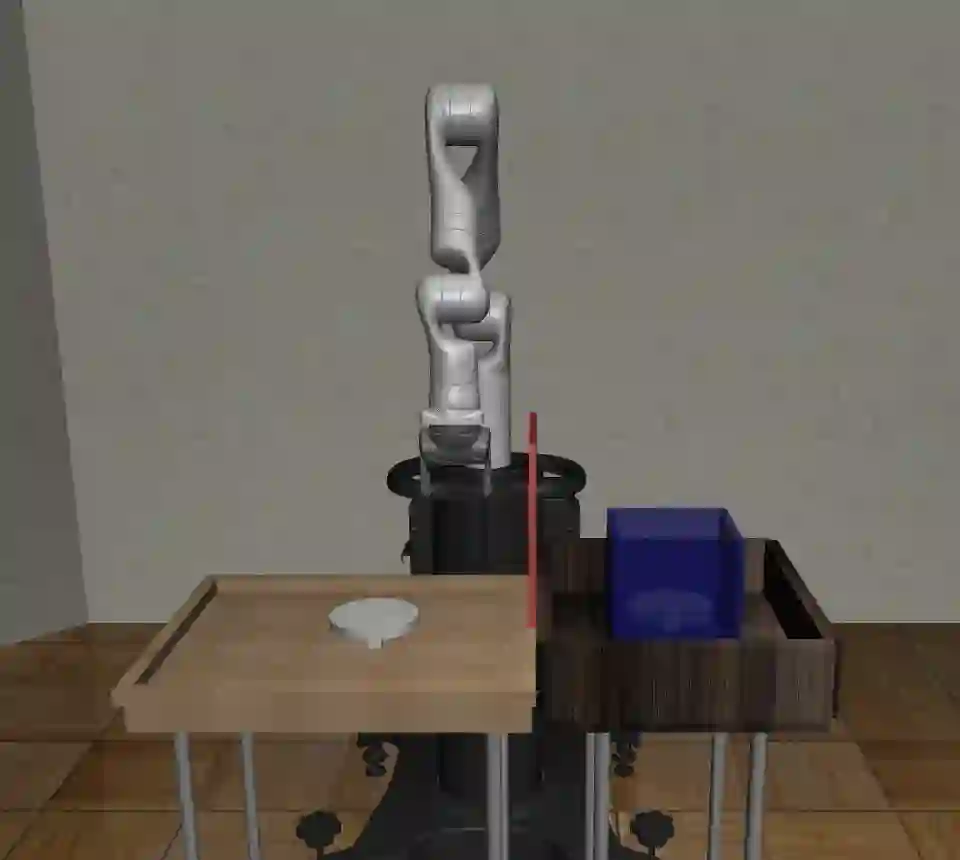

Offline reinforcement learning (RL) is a promising direction that allows RL agents to pre-train on large datasets, avoiding repeated, expensive data collection. Advancing the field requires large-scale datasets. Compositional RL is particularly appealing for generating them, since 1) it permits creating many tasks from few components, 2) the task structure may enable trained agents to solve new tasks by combining relevant learned components, and 3) the compositional dimensions provide a notion of task relatedness. This paper provides four offline RL datasets for simulated robotic manipulation, created using the $256$ tasks from CompoSuite [Mendez et al., 2022a]. Each dataset is collected from an agent with a different degree of performance and consists of $256$ million transitions. We provide training and evaluation settings for assessing an agent's ability to learn compositional task policies. Our benchmarking experiments show that current offline RL methods can learn the training tasks to some extent and that compositional methods outperform non-compositional methods. Yet current methods are unable to extract the compositional structure to generalize to unseen tasks, highlighting a need for future research in offline compositional RL.
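
To make the counting argument concrete, the following minimal sketch enumerates tasks as combinations of components. It assumes, purely for illustration, four compositional axes with four elements each; the abstract states only the total of $256$ tasks, and the axis and element names below are hypothetical placeholders. The per-task transition count likewise assumes an even split of each dataset across tasks, and `shared_components` is one crude, illustrative reading of "task relatedness", not a measure defined by the paper.

```python
from itertools import product

# Hypothetical axes and element names, chosen for illustration only.
AXES = {
    "robot": ["robot_a", "robot_b", "robot_c", "robot_d"],
    "object": ["object_a", "object_b", "object_c", "object_d"],
    "obstacle": ["obstacle_a", "obstacle_b", "obstacle_c", "obstacle_d"],
    "objective": ["objective_a", "objective_b", "objective_c", "objective_d"],
}


def enumerate_tasks(axes):
    """Return every task, i.e. every choice of one element per axis."""
    names = list(axes)
    return [
        dict(zip(names, combo))
        for combo in product(*(axes[name] for name in names))
    ]


def shared_components(task_a, task_b):
    """Count the axes on which two tasks use the same element."""
    return sum(task_a[axis] == task_b[axis] for axis in task_a)


tasks = enumerate_tasks(AXES)
num_tasks = len(tasks)  # 4 * 4 * 4 * 4 combinations

# Assumption: each 256-million-transition dataset is split evenly across tasks.
transitions_per_task = 256_000_000 // num_tasks

print(num_tasks, transitions_per_task)
print(shared_components(tasks[0], tasks[1]))
```

Under these assumptions, sixteen components suffice to define every task, and two tasks that differ on a single axis share three of their four components.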
