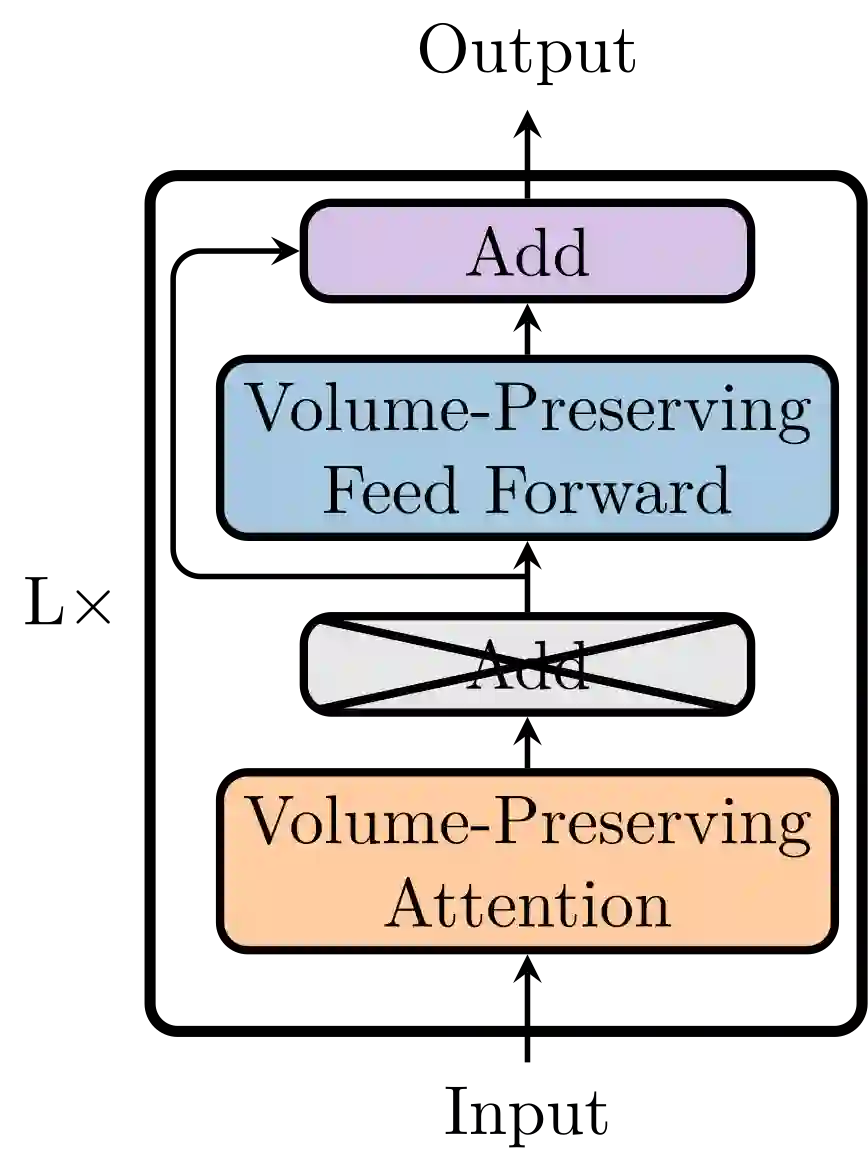

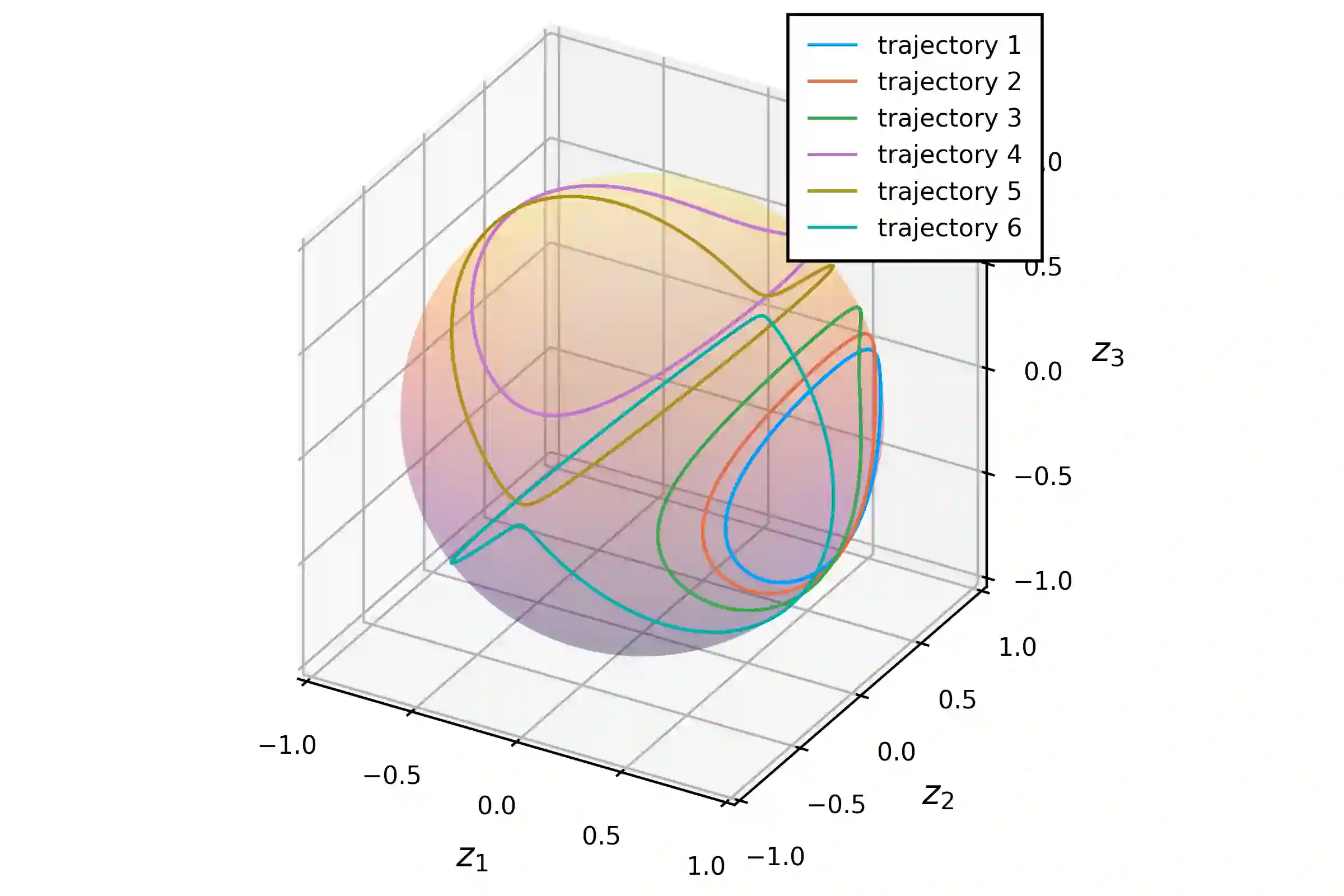

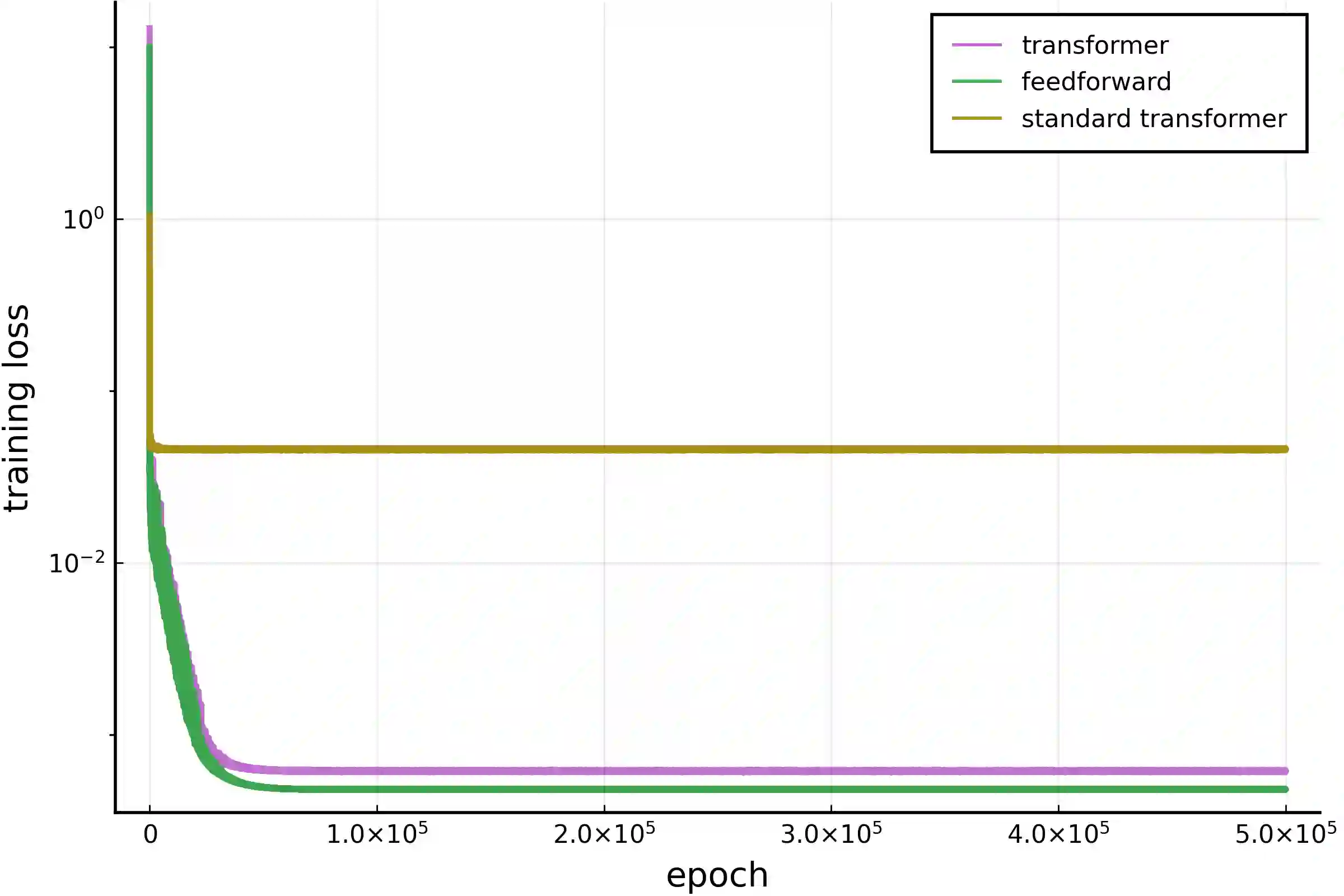

Two of the many trends in neural network research of the past few years have been (i) the learning of dynamical systems, especially with recurrent neural networks such as long short-term memory networks (LSTMs), and (ii) the introduction of transformer neural networks for natural language processing (NLP) tasks. Both trends have gained enormous traction, particularly the second: transformer networks now dominate the field of NLP. Although some work has been done at the intersection of these two trends, those efforts were largely limited to using the vanilla transformer directly, without adapting its architecture to the setting of a physical system. In this work we use a transformer-inspired neural network to learn a dynamical system and, for the first time, imbue it with structure-preserving properties to improve long-term stability. This is shown to be of great advantage when applying the neural network to real-world problems.
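Why structure preservation matters for long-term stability can be seen in a classical textbook case. This sketch is not the transformer-based method of this work. It integrates a harmonic oscillator, H = (q² + p²)/2, with explicit Euler, which is not structure-preserving, and with symplectic Euler, which is (in particular, it preserves phase-space volume). The function names and parameter values (step size h = 0.1, 10,000 steps) are illustrative assumptions.

```python
def explicit_euler(q, p, h):
    # Not structure-preserving: the update matrix [[1, h], [-h, 1]] has
    # determinant 1 + h**2, so phase-space volume grows every step and the
    # energy is multiplied by exactly (1 + h**2) per step for this system.
    return q + h * p, p - h * q


def symplectic_euler(q, p, h):
    # Structure-preserving: update p first, then q using the new p.
    # The update matrix [[1 - h**2, h], [-h, 1]] has determinant exactly 1.
    p_new = p - h * q
    return q + h * p_new, p_new


def energy(q, p):
    # Hamiltonian of the unit harmonic oscillator.
    return 0.5 * (q * q + p * p)


def simulate(step, q0=1.0, p0=0.0, h=0.1, n_steps=10_000):
    # Roll out `step` for n_steps and record the energy after every step.
    q, p = q0, p0
    energies = [energy(q, p)]
    for _ in range(n_steps):
        q, p = step(q, p, h)
        energies.append(energy(q, p))
    return energies


if __name__ == "__main__":
    euler = simulate(explicit_euler)
    sympl = simulate(symplectic_euler)
    print(f"explicit Euler, final energy:   {euler[-1]:.3e}")
    print(f"symplectic Euler, energy range: [{min(sympl):.3f}, {max(sympl):.3f}]")
```

Explicit Euler lets the energy grow by a factor of (1 + h²)¹⁰⁰⁰⁰, roughly 10⁴³, whereas symplectic Euler exactly conserves the nearby quantity q² + p² − h·qp, so its energy stays within a few percent of the initial value of 0.5 for the whole run.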

翻译:过去几年神经网络研究的两大趋势分别是:(i) 学习动力系统,特别是使用长短期记忆网络(LSTM)等循环神经网络;(ii) 引入Transformer神经网络用于自然语言处理(NLP)任务。这两大趋势都产生了巨大影响,尤其是后者:Transformer网络如今主导了NLP领域。尽管已有一些研究尝试结合这两大趋势,但相关工作大多局限于直接使用标准Transformer,未对其架构进行针对物理系统场景的调整。本研究采用受Transformer启发的神经网络来学习动力系统,并首次为其赋予保结构特性以提升长期稳定性。实验表明,将本神经网络应用于实际场景时可获得显著优势。