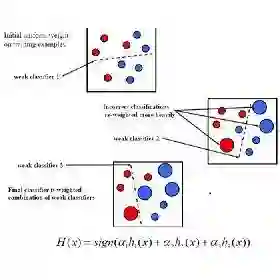

AdaBoost sequentially fits so-called weak learners to minimize an exponential loss, which penalizes misclassified data points more severely than other loss functions like cross-entropy. Paradoxically, AdaBoost generalizes well in practice as the number of weak learners grows. In the present work, we introduce Penalized Exponential Loss (PENEX), a new formulation of the multi-class exponential loss that is theoretically grounded and, in contrast to the existing formulation, amenable to optimization via first-order methods, making it a practical objective for training neural networks. We demonstrate that PENEX effectively increases margins of data points, which can be translated into a generalization bound. Empirically, across computer vision and language tasks, PENEX improves neural network generalization in low-data regimes, often matching or outperforming established regularizers at comparable computational cost. Our results highlight the potential of the exponential loss beyond its application in AdaBoost.
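The claim that the exponential loss penalizes misclassified points more severely than cross-entropy can be illustrated with a minimal sketch. It assumes the simplest binary setting, where both losses are written as functions of a margin `m = y * f(x)` with `y` in {-1, +1}. This is not the multi-class PENEX formulation introduced in this work, and the function names and margin values are illustrative only.

```python
import math


def exponential_loss(margin: float) -> float:
    """Binary exponential loss exp(-m), the loss AdaBoost minimizes."""
    return math.exp(-margin)


def logistic_loss(margin: float) -> float:
    """Binary cross-entropy written as a function of the margin: log(1 + exp(-m))."""
    return math.log1p(math.exp(-margin))


# Negative margins correspond to misclassified points (illustrative values).
margins = [-4.0, -2.0, 0.0, 2.0]
for m in margins:
    print(
        f"margin={m:+.1f}  "
        f"exponential={exponential_loss(m):8.3f}  "
        f"cross-entropy={logistic_loss(m):6.3f}"
    )
```

For strongly negative margins the exponential loss grows exponentially, while the cross-entropy grows only about linearly in `-m`, which is why a single badly misclassified point weighs far more heavily under the exponential loss.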
