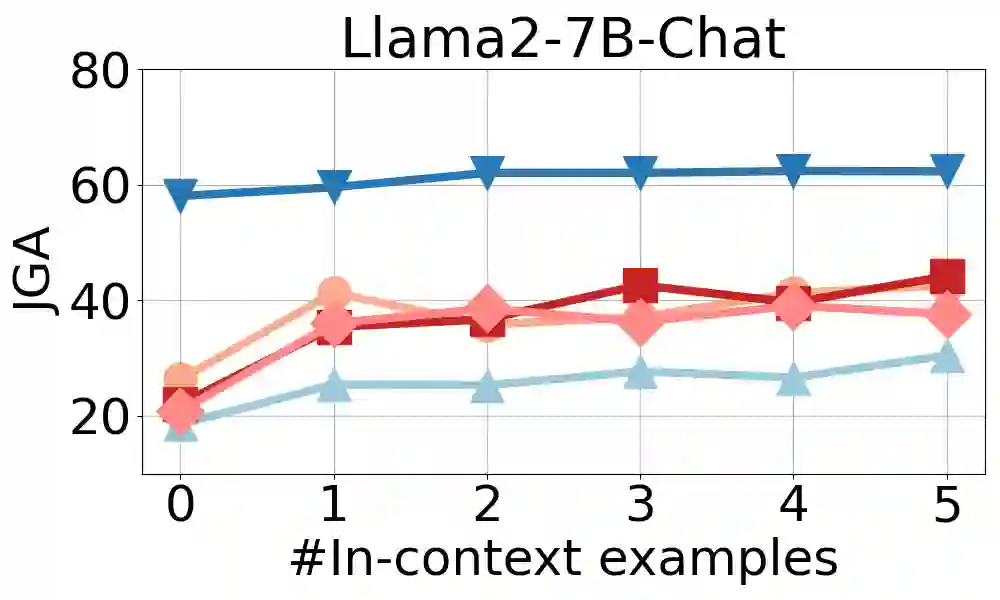

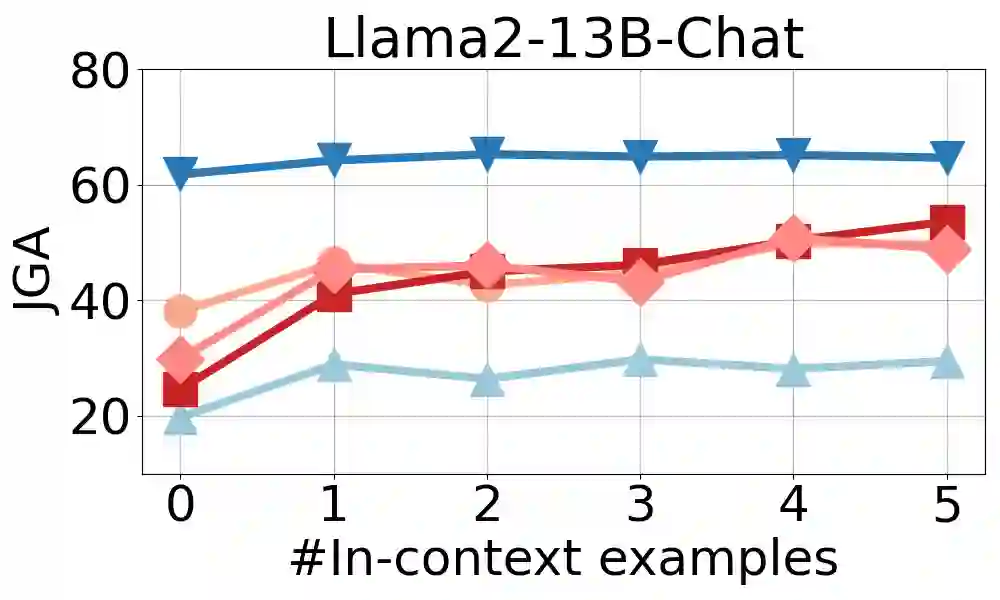

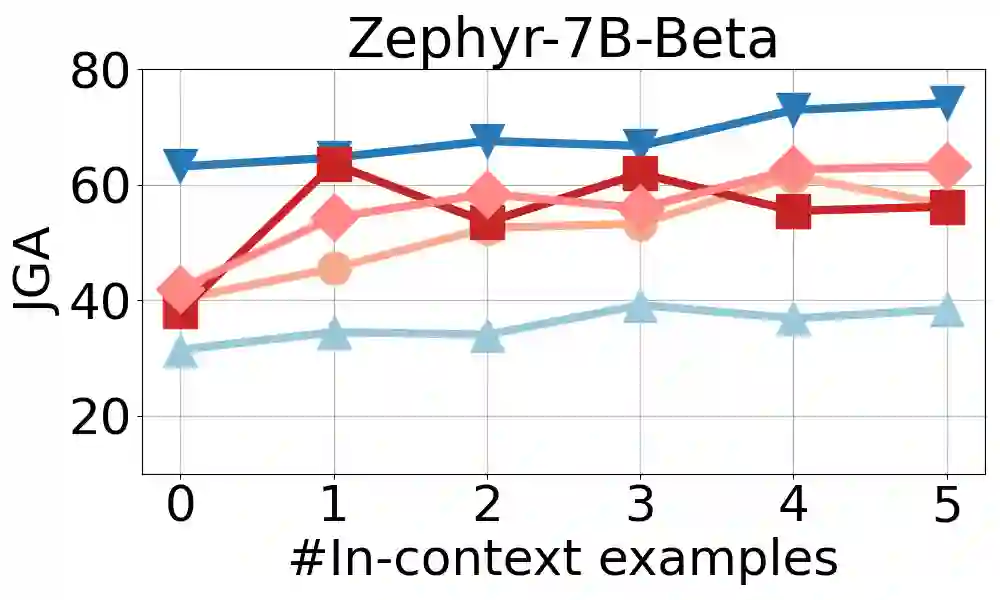

Large language models (LLMs) are increasingly prevalent in conversational systems due to their advanced understanding and generative capabilities in general contexts. However, their effectiveness in task-oriented dialogues (TOD), which require not only response generation but also effective dialogue state tracking (DST) within specific tasks and domains, remains unsatisfactory. In this work, we propose a novel approach, FnCTOD, for solving DST with LLMs through function calling. This method improves zero-shot DST, allowing adaptation to diverse domains without extensive data collection or model tuning. Our experimental results demonstrate that our approach achieves exceptional performance with both modestly sized open-source LLMs and proprietary LLMs: with in-context prompting, it enables various 7B or 13B parameter models to surpass the previous state-of-the-art (SOTA) achieved by ChatGPT, and it improves ChatGPT's performance, beating the SOTA by 5.6% in average joint goal accuracy (JGA). Individual model results for GPT-3.5 and GPT-4 are boosted by 4.8% and 14%, respectively. We also show that fine-tuning on a small collection of diverse task-oriented dialogues can improve the performance of modestly sized models. The code is available at https://github.com/facebookresearch/FnCTOD
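To make the idea of DST through function calling more concrete, the following is a minimal sketch and not the FnCTOD implementation. It assumes a domain can be described as a JSON-style function specification whose arguments are the slots, that the model emits a JSON function call, and that JGA counts a turn as correct only when the full predicted state matches the gold state. The schema `FIND_HOTEL`, the helper `parse_function_call`, and the sample model output are all hypothetical.

```python
import json

# Hypothetical schema: a "hotel" domain written as a function whose
# arguments are the dialogue-state slots. All names are illustrative.
FIND_HOTEL = {
    "name": "find_hotel",
    "description": "Search for a hotel that matches the user's constraints.",
    "parameters": {
        "type": "object",
        "properties": {
            "area": {"type": "string"},
            "price_range": {"type": "string"},
            "stars": {"type": "string"},
        },
    },
}


def parse_function_call(raw: str, schema: dict) -> dict:
    """Convert a JSON function call emitted by a model into a slot-value state.

    Arguments that are not declared in the schema are dropped, and values
    are normalised to strings so they can be compared with gold labels.
    """
    call = json.loads(raw)
    if call.get("name") != schema["name"]:
        return {}
    allowed = schema["parameters"]["properties"]
    arguments = call.get("arguments", {})
    return {slot: str(value) for slot, value in arguments.items() if slot in allowed}


def joint_goal_accuracy(predicted: list, gold: list) -> float:
    """Fraction of turns whose predicted state equals the gold state exactly."""
    if not gold:
        return 0.0
    correct = sum(1 for p, g in zip(predicted, gold) if p == g)
    return correct / len(gold)


# Stand-in for a model response; a real system would obtain this from an LLM.
raw_output = (
    '{"name": "find_hotel", '
    '"arguments": {"area": "north", "stars": 4, "wifi": "yes"}}'
)
state = parse_function_call(raw_output, FIND_HOTEL)

# Two turns: the first state matches the gold state, the second misses a slot.
predicted_states = [state, {"area": "south"}]
gold_states = [
    {"area": "north", "stars": "4"},
    {"area": "south", "price_range": "cheap"},
]
jga = joint_goal_accuracy(predicted_states, gold_states)
print(state, jga)
```

In this sketch the undeclared `wifi` argument is discarded, which is one simple way to keep the tracked state within the domain schema; the actual prompt format and parsing used by FnCTOD may differ.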
