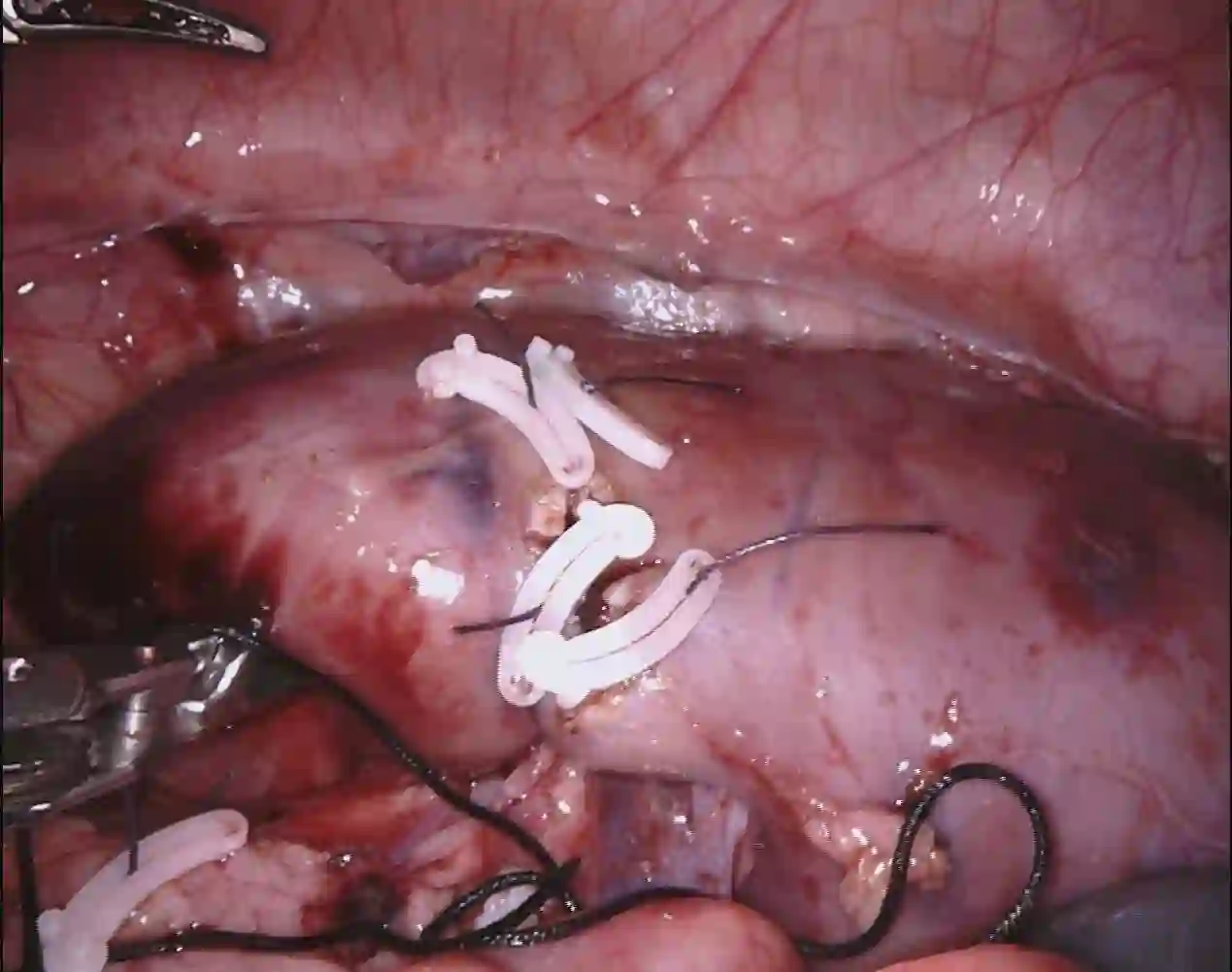

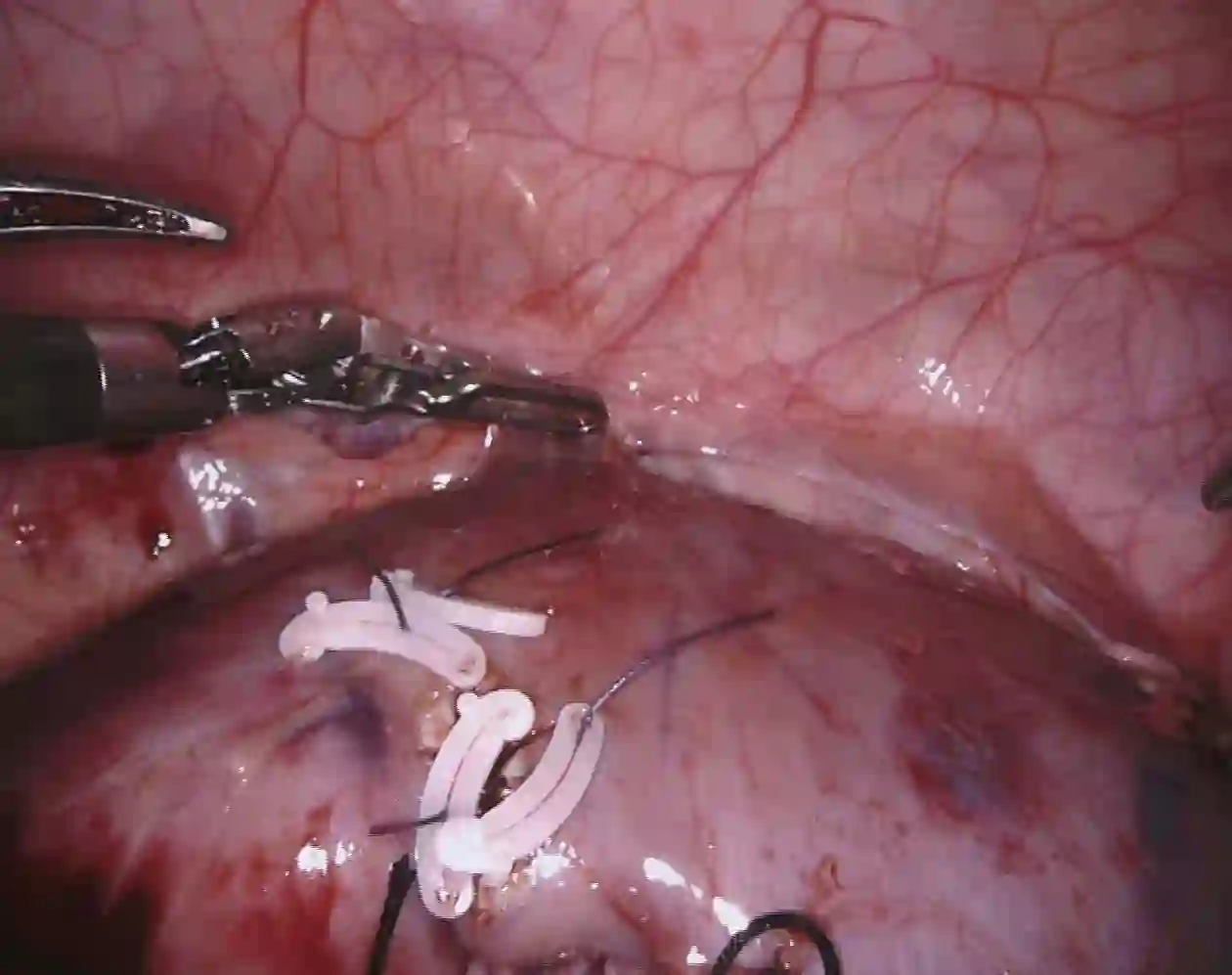

The DeepSeek series of models has demonstrated outstanding performance in general scene understanding, question answering (QA), and text generation, owing to its efficient training paradigm and strong reasoning capabilities. In this study, we investigate the dialogue capabilities of DeepSeek models in robotic surgery scenarios, focusing on tasks such as Single Phrase QA, Visual QA, and Detailed Description. The Single Phrase QA task further comprises sub-tasks such as surgical instrument recognition, action understanding, and spatial position analysis. We conduct extensive evaluations on publicly available datasets, including EndoVis18 and CholecT50, along with their corresponding dialogue data. Our results indicate that, when given specific prompts, DeepSeek-V3 performs well in surgical instrument and tissue recognition. However, it exhibits significant limitations in spatial position analysis and struggles to understand surgical actions accurately. Additionally, under general prompts, DeepSeek-V3 lacks the ability to analyze global surgical concepts effectively and fails to provide detailed insights into surgical scenarios. Based on these observations, we argue that DeepSeek-V3 is not ready for vision-language tasks in surgical contexts without fine-tuning on surgery-specific datasets.
