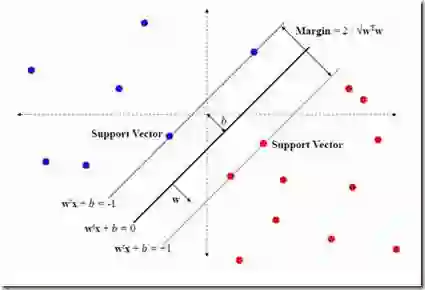

Training classification models for fault diagnosis on geographically dispersed data is crucial for original equipment manufacturers (OEMs) seeking to provide long-term service contracts (LTSCs) to their customers. Due to privacy and bandwidth constraints, such models must be trained in a federated fashion. Moreover, in harsh industrial settings, the data often suffer from feature and label uncertainty. Therefore, we study the problem of training a distributionally robust (DR) support vector machine (SVM) in a federated fashion over a network comprising a central server and $G$ clients, without sharing data. We consider the setting where the local data of each client $g$ are sampled from a unique true distribution $\mathbb{P}_g$, and the clients can communicate only with the central server. We propose a novel Mixture of Wasserstein Balls (MoWB) ambiguity set that relies on local Wasserstein balls centered at the empirical distribution of the data at each client. We study theoretical aspects of the proposed ambiguity set, deriving its out-of-sample performance guarantees and demonstrating that it naturally allows for the separability of the DR problem. Subsequently, we propose two distributed optimization algorithms for training the global federated DR SVM (FDR-SVM): i) a subgradient method-based algorithm, and ii) an alternating direction method of multipliers (ADMM)-based algorithm. We derive the optimization problems to be solved by each client and provide closed-form expressions for the computations performed by the central server during each iteration of both algorithms. Finally, we thoroughly examine the performance of the proposed algorithms in a series of numerical experiments using both simulated data and popular real-world datasets.
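To make the client/server split of the subgradient-based variant concrete, the following is a minimal sketch and not the paper's algorithm. It assumes a simplified setting: feature uncertainty only (labels treated as certain) and a Euclidean transport cost, under which the worst-case hinge loss over a Wasserstein ball is taken to reduce to the empirical hinge loss plus a norm penalty `eps * ||w||`. A single `eps` stands in for the per-client radii, the aggregation weights are proportional to local sample counts, and every function name, the synthetic data, and the step-size rule are hypothetical choices made for illustration.

```python
import random

def local_hinge_subgradient(w, b, X, y):
    """Client step: subgradient of the average hinge loss on local data only."""
    d = len(w)
    gw, gb = [0.0] * d, 0.0
    for xi, yi in zip(X, y):
        margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
        if margin < 1.0:  # sample violates the margin, so the hinge is active
            for j in range(d):
                gw[j] -= yi * xi[j]
            gb -= yi
    n = len(X)
    return [g / n for g in gw], gb / n

def server_step(w, b, client_grads, weights, eps, lr):
    """Server step: weighted aggregation plus a subgradient of eps * ||w||_2."""
    d = len(w)
    gw = [sum(a * g[0][j] for a, g in zip(weights, client_grads)) for j in range(d)]
    gb = sum(a * g[1] for a, g in zip(weights, client_grads))
    norm = sum(wj * wj for wj in w) ** 0.5
    if norm > 0.0:  # at w = 0 the zero vector is a valid subgradient of the norm
        gw = [gj + eps * wj / norm for gj, wj in zip(gw, w)]
    return [wj - lr * gj for wj, gj in zip(w, gw)], b - lr * gb

def make_client(rng, n, shift):
    """Hypothetical synthetic client: two Gaussian blobs, shifted per client."""
    X, y = [], []
    for _ in range(n):
        label = rng.choice([-1.0, 1.0])
        X.append([rng.gauss(2.0 * label + shift, 1.0), rng.gauss(2.0 * label, 1.0)])
        y.append(label)
    return X, y

def train(clients, eps=0.05, rounds=200):
    """Only subgradients travel to the server; raw samples stay with clients."""
    total = sum(len(X) for X, _ in clients)
    weights = [len(X) / total for X, _ in clients]  # assumed mixture weights
    w, b = [0.0, 0.0], 0.0
    for t in range(1, rounds + 1):
        grads = [local_hinge_subgradient(w, b, X, y) for X, y in clients]
        w, b = server_step(w, b, grads, weights, eps, lr=1.0 / t ** 0.5)
    return w, b

def accuracy(w, b, X, y):
    hits = sum(1 for xi, yi in zip(X, y)
               if yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b) > 0)
    return hits / len(X)

rng = random.Random(0)
clients = [make_client(rng, 100, shift) for shift in (-0.5, 0.0, 0.5)]
w, b = train(clients)
```

Calling `accuracy(w, b, X, y)` on each client's data gives a quick check that the shared model fits all three shifted local distributions. An ADMM-based variant would replace the gradient exchange with local subproblems and a consensus update at the server, which this sketch does not attempt.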