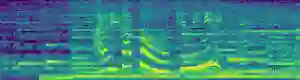

Speech Emotion Recognition (SER) research has been limited by the lack of standard, sufficiently large datasets. Recent studies have leveraged pre-trained models to extract features for downstream tasks such as SER. This work explores the capabilities of Whisper, a pre-trained ASR system, for SER by proposing two attention-based pooling methods, Multi-head Attentive Average Pooling and QKV Pooling, designed to efficiently reduce the dimensionality of Whisper representations while preserving emotional features. We experiment on English and Persian, using the IEMOCAP and ShEMO datasets respectively, with the Whisper Tiny and Small models. Our multi-head QKV architecture achieves state-of-the-art results on the ShEMO dataset, with a 2.47% improvement in unweighted accuracy. We further compare the performance of different Whisper encoder layers and find that intermediate layers often perform better for SER on the Persian dataset, which makes Whisper a lightweight and efficient alternative to much larger models such as HuBERT X-Large. Our findings highlight the potential of Whisper as a representation extractor for SER and demonstrate the effectiveness of attention-based pooling for dimensionality reduction.
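
To make the idea of attention-based pooling concrete, the following is a minimal NumPy sketch of two generic variants: a multi-head attentive pooling that weights frames per head, and a query-key-value pooling driven by a single learned query. It is not the paper's implementation. The function names (`multihead_attentive_pool`, `qkv_pool`), the random stand-in parameters, and the choice of 6 heads are assumptions made for illustration; the exact formulation of the proposed methods is not given in the abstract. Both functions collapse a sequence of frame-level encoder features of shape `(T, D)` into one utterance-level vector.

```python
import numpy as np


def softmax(x, axis):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multihead_attentive_pool(frames, score_vectors):
    """Generic multi-head attentive pooling (illustrative, not the paper's exact method).

    frames:        (T, D) frame-level features
    score_vectors: (H, D // H) one scoring vector per head (learned in practice)
    Returns the pooled (D,) vector and the (T, H) attention weights.
    """
    T, D = frames.shape
    H, d_head = score_vectors.shape
    assert H * d_head == D, "feature dimension must split evenly across heads"
    heads = frames.reshape(T, H, d_head)                     # split features into heads
    scores = np.einsum("thd,hd->th", heads, score_vectors)   # one score per frame and head
    weights = softmax(scores, axis=0)                        # normalize over time
    pooled = np.einsum("th,thd->hd", weights, heads)         # weighted average per head
    return pooled.reshape(D), weights


def qkv_pool(frames, query, w_k, w_v):
    """Generic single-query QKV pooling (illustrative, not the paper's exact method).

    frames: (T, D); query: (d_k,); w_k: (D, d_k); w_v: (D, d_v)
    Returns the pooled (d_v,) vector and the (T,) attention weights.
    """
    keys = frames @ w_k                                      # (T, d_k)
    values = frames @ w_v                                    # (T, d_v)
    scores = keys @ query / np.sqrt(keys.shape[1])           # scaled dot-product scores
    weights = softmax(scores, axis=0)                        # normalize over time
    return weights @ values, weights


# Random stand-ins for encoder output: 50 frames of width 384
# (the hidden size of Whisper Tiny); all parameters here are untrained.
rng = np.random.default_rng(0)
frames = rng.standard_normal((50, 384))
score_vectors = rng.standard_normal((6, 64))
pooled_mh, weights_mh = multihead_attentive_pool(frames, score_vectors)

query = rng.standard_normal(64)
w_k = rng.standard_normal((384, 64))
w_v = rng.standard_normal((384, 128))
pooled_qkv, weights_qkv = qkv_pool(frames, query, w_k, w_v)
```

In both variants the output size is independent of the number of frames `T`, which is what makes pooling useful as a dimensionality-reduction step before a classifier. With all-zero scoring parameters, the weights become uniform and each function reduces to plain average pooling, so the attention parameters can be read as a learned departure from the mean.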
