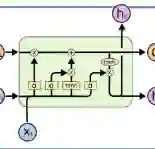

We present LatinPipe, the winning submission to the EvaLatin 2024 Dependency Parsing shared task. Our system consists of a fine-tuned concatenation of base and large pre-trained LMs, with a dot-product attention head for parsing and softmax classification heads for morphology to jointly learn both dependency parsing and morphological analysis. It is trained by sampling from seven publicly available Latin corpora, utilizing additional harmonization of annotations to achieve a more unified annotation style. Before fine-tuning, we train the system for a few initial epochs with frozen weights. We also add additional local relative contextualization by stacking the BiLSTM layers on top of the Transformer(s). Finally, we ensemble output probability distributions from seven randomly instantiated networks for the final submission. The code is available at https://github.com/ufal/evalatin2024-latinpipe.

翻译:我们介绍了LatinPipe,这是EvaLatin 2024依存句法分析共享任务的获胜方案。我们的系统由一个微调后的基础与大型预训练语言模型拼接组成,采用点积注意力头进行句法分析,并配合softmax分类头进行形态分析,以联合学习依存句法分析和形态分析。该系统通过从七个公开可用的拉丁语语料库中采样进行训练,并利用额外的标注协调以实现更统一的标注风格。在微调之前,我们以冻结权重的方式对系统进行了几个初始周期的训练。我们还在Transformer层之上堆叠BiLSTM层,以增加额外的局部相对上下文信息。最后,我们对七个随机初始化的网络输出的概率分布进行集成,作为最终提交结果。代码可在https://github.com/ufal/evalatin2024-latinpipe获取。