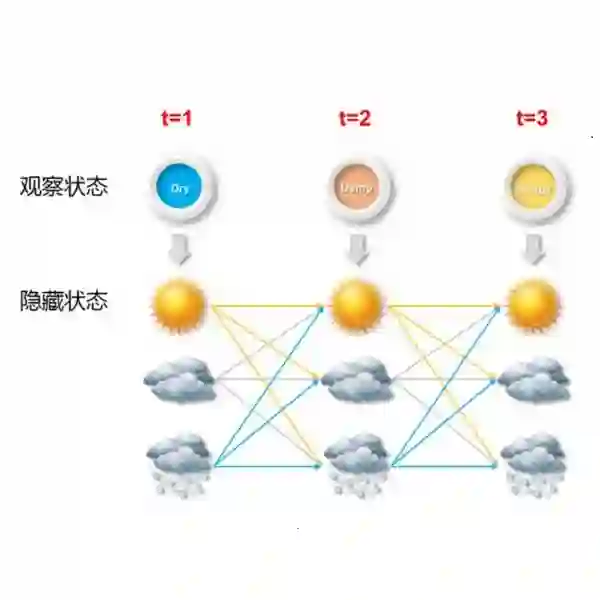

Controlled generation imposes sequence-level constraints (syntax, style, safety) that depend on future tokens, making exact conditioning of an autoregressive LM intractable. Tractable surrogates such as HMMs can approximate continuation distributions and steer decoding, but standard surrogates are often only weakly context-aware. We propose Learning to Look Ahead (LTLA), a hybrid method that uses base-LM embeddings to condition a globally learned tractable surrogate: a neural head predicts only a prefix-dependent latent prior, while a shared HMM answers continuation queries exactly. LTLA is designed to avoid two common efficiency traps when adding neural context. First, it avoids vocabulary-sized prefix rescoring (V extra LM evaluations, for a vocabulary of size V) by scoring all next-token candidates via a single batched HMM forward update. Second, it avoids predicting a new HMM per prefix by learning one shared HMM and conditioning only the latent prior, which enables reuse of cached future-likelihood (backward) messages across decoding steps. Empirically, LTLA improves continuation likelihood over standard HMM surrogates, enables lookahead control for vision-language models by incorporating continuous context, achieves 100% syntactic constraint satisfaction, and improves detoxification while adding only a 14% decoding-time overhead.
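The two efficiency points can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the toy constraint ("no banned token in the next few steps"), the linear-softmax head `latent_prior`, and all names, shapes, and random parameters are assumptions made for illustration. Backward messages depend only on the shared HMM and the constraint, so they are computed once and cached; the prefix enters only through the latent prior, and one matrix product scores every next-token candidate.

```python
import numpy as np

def backward_messages(T, E, allowed, horizon):
    """betas[k][h] = P(the next k tokens are all allowed | current hidden state h).

    Depends only on the shared HMM (T, E) and the constraint, not on the
    prefix, so the list can be cached and reused at every decoding step.
    """
    ok = E[:, allowed].sum(axis=1)        # P(emit an allowed token | h)
    betas = [np.ones(T.shape[0])]         # k = 0: nothing left to satisfy
    for _ in range(horizon):
        betas.append(ok * (T @ betas[-1]))
    return betas

def latent_prior(embedding, W):
    """Hypothetical neural head: softmax over hidden states from an LM embedding."""
    logits = W @ embedding
    logits = logits - logits.max()        # numerical stability
    p = np.exp(logits)
    return p / p.sum()

def constraint_probs(pi, T, E, allowed, beta_future):
    """P(constraint holds | prefix, next token v) for all v, in one batched update."""
    joint = (pi * (T @ beta_future)) @ E  # P(x = v, later tokens all allowed)
    marginal = pi @ E                     # P(x = v); assumed strictly positive
    mask = np.zeros(E.shape[1])
    mask[allowed] = 1.0                   # the candidate itself must be allowed
    return mask * joint / marginal

# Toy setup (all values are illustrative assumptions).
rng = np.random.default_rng(0)
H, V = 3, 4                               # hidden states, vocabulary size
T = rng.dirichlet(np.ones(H), size=H)     # T[h, h'] = P(z' = h' | z = h)
E = rng.dirichlet(np.ones(V), size=H)     # E[h, v]  = P(x = v | z = h)
W = rng.normal(size=(H, 8))               # weights of the hypothetical head
embedding = rng.normal(size=8)            # stand-in for a base-LM embedding
allowed = np.array([0, 1, 2])             # token 3 is banned

betas = backward_messages(T, E, allowed, horizon=2)   # cached once
pi = latent_prior(embedding, W)                       # only prefix-dependent part
probs = constraint_probs(pi, T, E, allowed, betas[2])

# Reweight a stand-in base-LM next-token distribution and renormalize.
p_lm = rng.dirichlet(np.ones(V))
p_ctrl = p_lm * probs
p_ctrl = p_ctrl / p_ctrl.sum()
```

In this sketch, moving to the next decoding step only requires a new `pi` from the head and a different cached entry of `betas`; no additional LM evaluations per candidate token are needed.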
