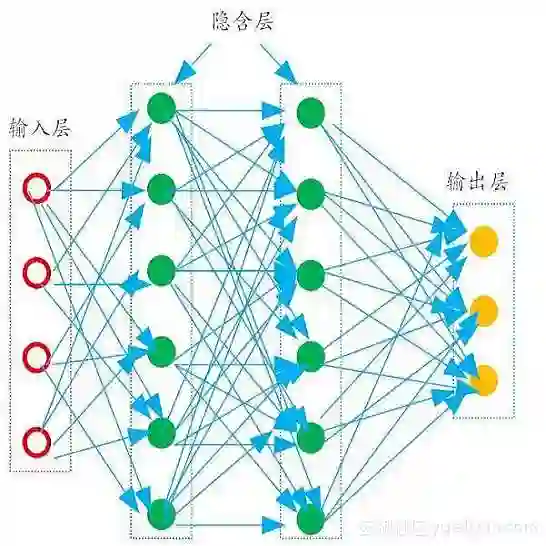

Hyperspectral image (HSI) classification has recently reached a performance bottleneck. Multimodal data fusion is emerging as a promising way to overcome this bottleneck by drawing rich complementary information from a supplementary modality (X-modality). However, achieving comprehensive cross-modal interaction and fusion that generalizes across different sensing modalities is challenging because modalities differ in imaging sensors, resolution, and content. In this study, we propose a Local-to-Global Cross-modal Attention-aware Fusion (LoGoCAF) framework for HSI-X classification that jointly considers efficiency, accuracy, and generalizability. LoGoCAF adopts a pixel-to-pixel, two-branch semantic segmentation architecture to learn information from the HSI and X modalities. Its pipeline consists of a local-to-global encoder and a lightweight multilayer perceptron (MLP) decoder. In the encoder, convolutions encode local, high-resolution fine details in the shallow layers, while transformers integrate global, low-resolution coarse features in the deeper layers. The MLP decoder aggregates information from the encoder for feature fusion and prediction. In particular, two cross-modality modules, the feature enhancement module (FEM) and the feature interaction and fusion module (FIFM), are introduced in each encoder stage. The FEM enhances complementary information by combining features from the other modality across direction-aware, position-sensitive, and channel-wise dimensions. Given the enhanced features, the FIFM promotes cross-modality information interaction and fusion for the final semantic prediction. Extensive experiments demonstrate that LoGoCAF achieves superior performance and generalizes well. The code will be made publicly available.
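To make the enhance-then-fuse idea within one encoder stage more concrete, the following is a minimal NumPy sketch. It is not the authors' implementation: the abstract gives no equations for the FEM or the FIFM, so the function names (`channel_gate`, `enhance`, `fuse`), the sigmoid gating, the residual addition, and the random projection weights are all illustrative assumptions. Only the channel-wise dimension is sketched; the direction-aware and position-sensitive parts are omitted.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def channel_gate(feat):
    """Hypothetical channel attention: global average pool over H and W,
    then squash to per-channel weights in (0, 1). Input shape (C, H, W)."""
    return sigmoid(feat.mean(axis=(1, 2)))  # shape (C,)


def enhance(own, other):
    """Assumed FEM-like step: add the other modality's features to this
    branch, re-weighted channel-wise by a gate computed from the other branch."""
    weights = channel_gate(other)[:, None, None]  # broadcast over H and W
    return own + weights * other


def fuse(feat_a, feat_b, proj):
    """Assumed FIFM-like step: concatenate both branches along the channel
    axis and mix them with a 1x1 projection of shape (C_out, 2C)."""
    stacked = np.concatenate([feat_a, feat_b], axis=0)  # (2C, H, W)
    return np.einsum("oc,chw->ohw", proj, stacked)      # (C_out, H, W)


# Toy example: 4-channel feature maps on an 8x8 grid for both modalities.
rng = np.random.default_rng(0)
hsi_feat = rng.normal(size=(4, 8, 8))
x_feat = rng.normal(size=(4, 8, 8))
proj = rng.normal(size=(4, 8)) * 0.1  # stand-in for learned weights

hsi_enh = enhance(hsi_feat, x_feat)  # HSI branch enhanced by X
x_enh = enhance(x_feat, hsi_feat)    # X branch enhanced by HSI
fused = fuse(hsi_enh, x_enh, proj)   # one fused map for this stage
```

In this sketch each branch keeps its own enhanced features for the next stage, while `fused` stands for the per-stage output that a decoder would later aggregate. In a trained model the gate and the projection would be learned rather than fixed or random.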
