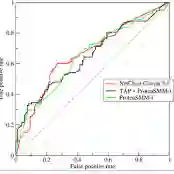

Ensuring data privacy is a major challenge for machine learning applications, during model evaluation as well as model training, and federated learning has attracted substantial research interest as a result. Current research on federated learning focuses primarily on preserving privacy during the training phase. Model evaluation has not been adequately addressed, even though this phase can also leak significant private information. In this paper, we demonstrate that the state-of-the-art AUC computation method for federated learning systems, which relies on differential privacy, still leaks sensitive information about the test data and also requires a trusted central entity to perform the computations. More importantly, we show that its performance degrades to the point of being unusable as the data size decreases. In this context, we propose an efficient, accurate, robust, and more secure evaluation algorithm for computing the AUC in horizontal federated learning systems. Our approach is more secure than the current state of the art and, as experimental results demonstrate, also surpasses it in both approximation performance and computational robustness. For example, our approach computes the AUC of a federated learning system involving 100 parties with 99.93% accuracy in just 0.68 seconds, regardless of data size, while providing complete data privacy.
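For orientation, the quantity being approximated can be sketched as follows. This is not the algorithm proposed in this paper, and it offers no privacy at all: it is a minimal reference computation of the exact AUC, assuming every party's scores and labels could be pooled in one place, which is precisely what a federated setting forbids. The names `Party` and `pooled_auc` are hypothetical and chosen only for illustration.

```python
from typing import List, Tuple

# Hypothetical type: one party's test set as (prediction score, true label in {0, 1}).
Party = List[Tuple[float, int]]


def pooled_auc(parties: List[Party]) -> float:
    """Exact AUC over the union of all parties' test samples (no privacy)."""
    pooled = [pair for party in parties for pair in party]
    pos = [score for score, label in pooled if label == 1]
    neg = [score for score, label in pooled if label == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative sample")
    # AUC is the fraction of (positive, negative) pairs ranked correctly;
    # a tie between a positive and a negative score counts as half.
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Two parties, each holding two labeled test predictions.
parties = [
    [(0.9, 1), (0.2, 0)],
    [(0.6, 1), (0.7, 0)],
]
print(pooled_auc(parties))
```

A privacy-preserving method has to reproduce this value, or approximate it closely, without any party or central entity seeing the pooled scores and labels.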
