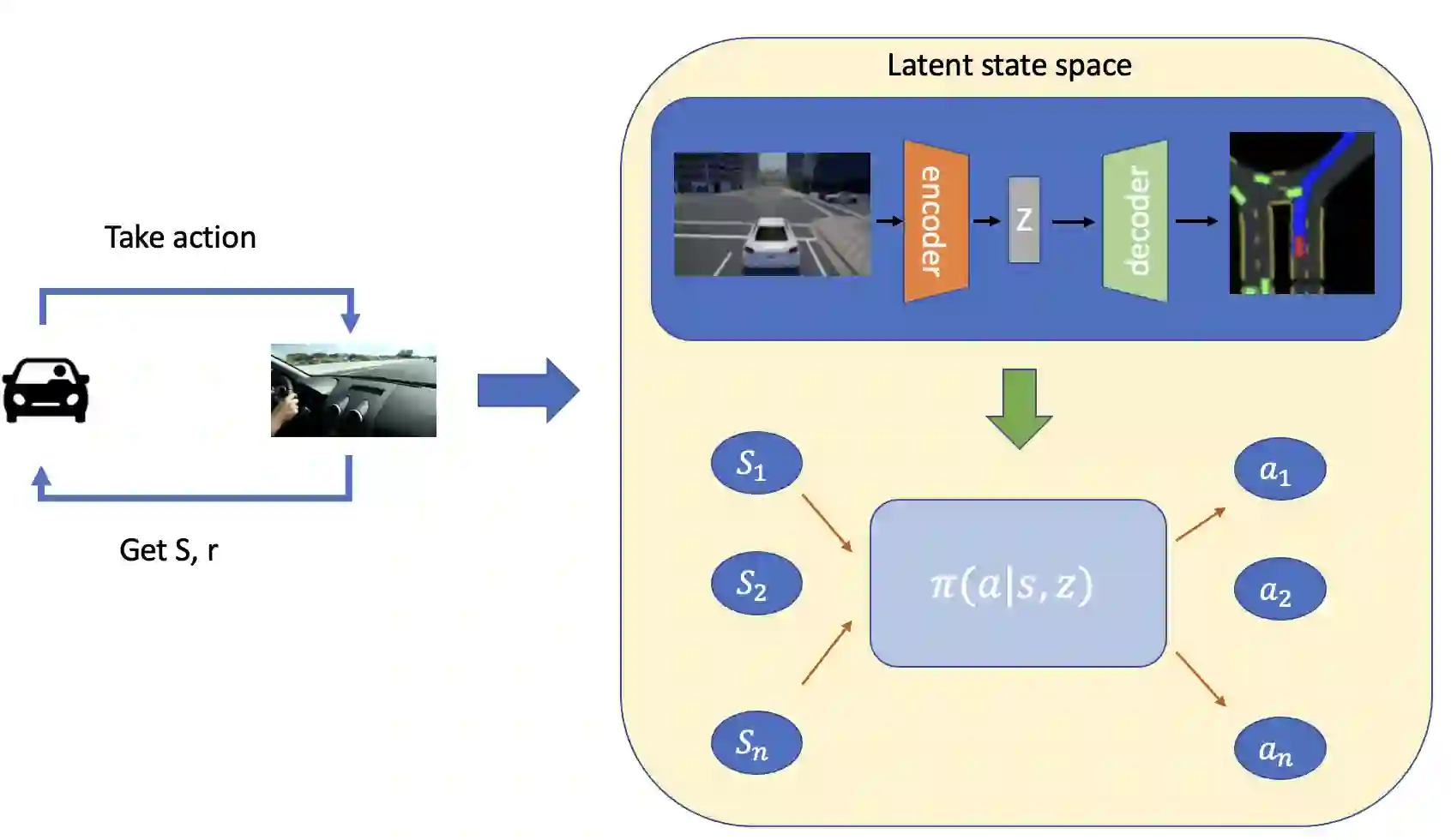

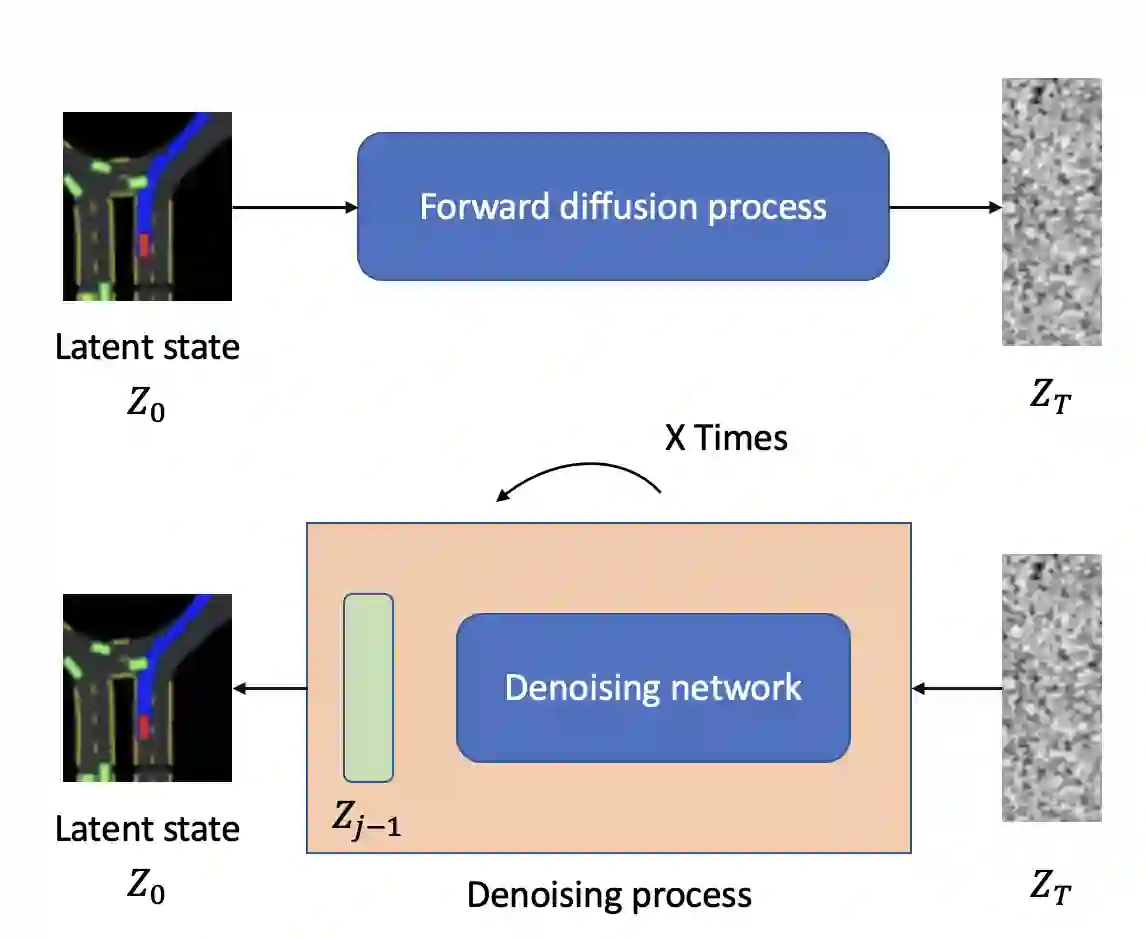

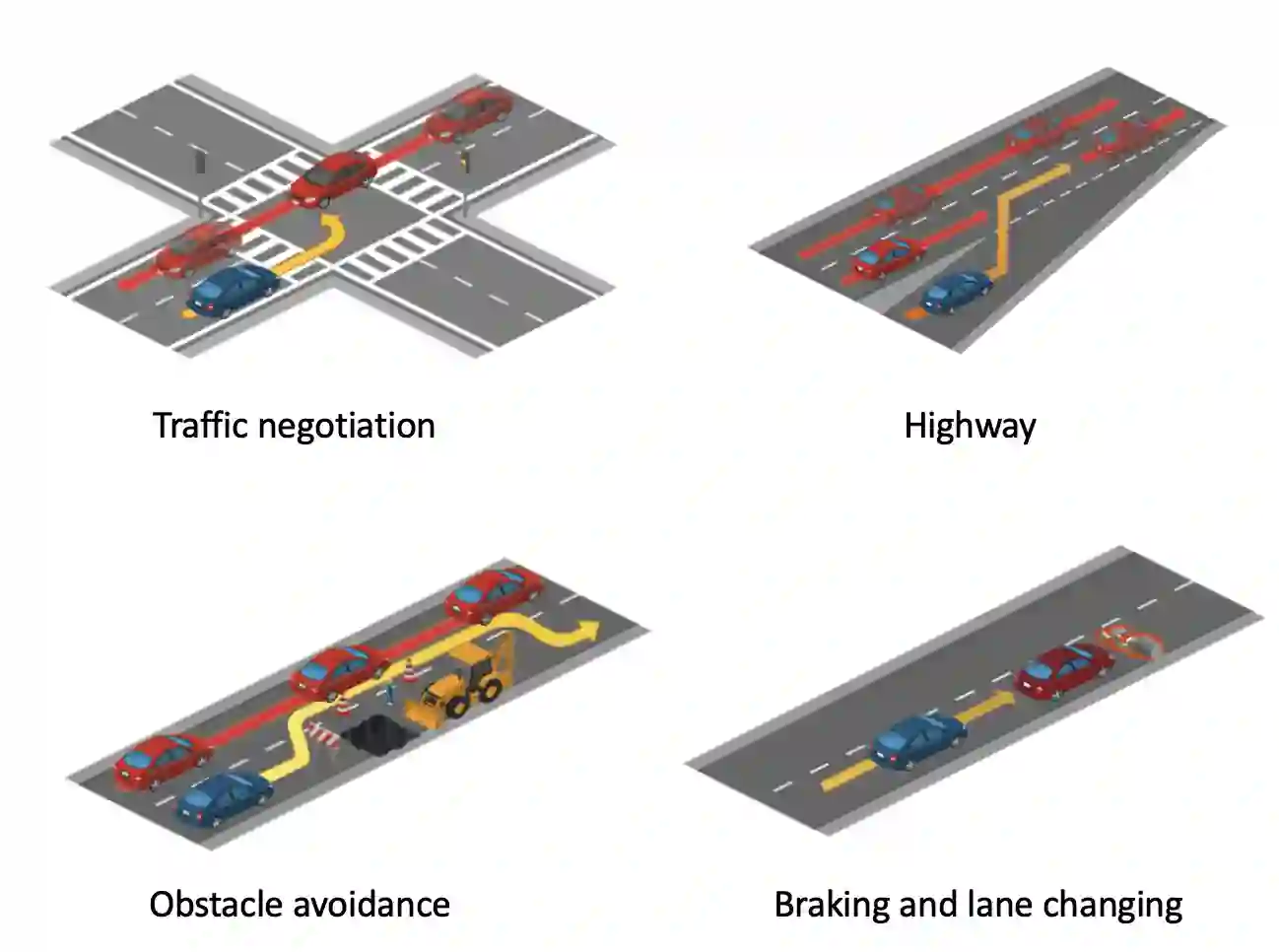

As autonomous driving advances, ensuring safety during motion planning and navigation is becoming increasingly important. However, most end-to-end planning methods do not adequately address safety. This research addresses the safety issue in the control optimization problem of autonomous driving, formulated as a Constrained Markov Decision Process (CMDP). We propose a novel model-based approach to policy optimization that uses a Conditional Value-at-Risk (CVaR)-based Soft Actor-Critic to manage constraints effectively in complex, high-dimensional state spaces. Our method introduces a worst-case actor to guide safe exploration, so that safety requirements are adhered to even in unpredictable scenarios. Policy optimization employs the augmented Lagrangian method and leverages latent diffusion models to predict and simulate future trajectories. This dual approach not only aids in navigating environments safely but also improves policy performance by integrating distribution modeling to account for environmental uncertainties. Empirical evaluations in both simulated and real-world environments demonstrate that our approach outperforms existing methods in terms of safety, efficiency, and decision-making capabilities.
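To make the two constraint-handling ingredients named above concrete, the following is a minimal sketch, not the authors' implementation. It assumes the safety cost is available as a batch of sampled episode costs, estimates CVaR empirically as the mean of the worst tail, and applies a standard augmented Lagrangian penalty and multiplier update for the constraint "CVaR ≤ budget". The function names, the confidence level `alpha`, the `budget`, and the penalty coefficient `rho` are all illustrative assumptions; the abstract does not specify them.

```python
import numpy as np


def empirical_cvar(costs, alpha=0.9):
    """Mean of the worst (1 - alpha) fraction of sampled costs (hypothetical helper)."""
    costs = np.sort(np.asarray(costs, dtype=float))
    # Number of tail samples; rounding guards against floating-point noise,
    # and at least one sample is always kept.
    k = max(1, int(np.ceil(np.round((1.0 - alpha) * costs.size, 9))))
    return float(costs[-k:].mean())


def augmented_lagrangian_penalty(cvar, budget, lam, rho):
    """Standard augmented Lagrangian term for the inequality cvar <= budget."""
    shifted = max(0.0, lam + rho * (cvar - budget))
    return (shifted ** 2 - lam ** 2) / (2.0 * rho)


def update_multiplier(cvar, budget, lam, rho):
    """Projected multiplier update; the multiplier stays non-negative."""
    return max(0.0, lam + rho * (cvar - budget))


if __name__ == "__main__":
    sampled_costs = np.arange(1, 11)  # toy cost samples, assumed for illustration
    cvar = empirical_cvar(sampled_costs, alpha=0.8)
    lam = update_multiplier(cvar, budget=5.0, lam=0.0, rho=0.1)
    print(cvar, lam)
```

In a full training loop, the penalty term would be added to the actor's loss and the multiplier updated after each policy step, so that the pressure on the policy grows while the tail cost exceeds the budget and vanishes once the constraint is satisfied.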
