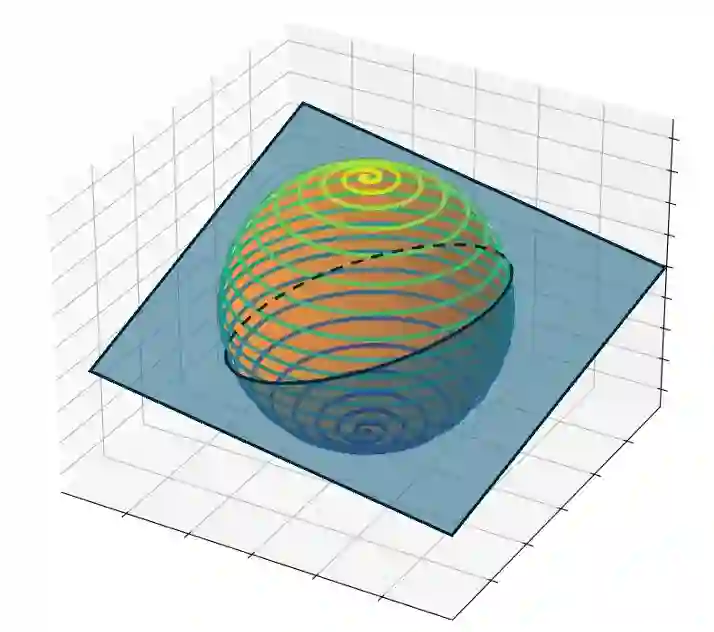

The outstanding performance of large foundational models across diverse tasks, from computer vision to speech and natural language processing, has significantly increased demand for them. However, storing and transmitting these models pose significant challenges because of their massive size (e.g., 350GB for GPT-3). Recent literature has focused on compressing the original weights or on reducing the number of parameters required to fine-tune these models. These compression methods typically constrain the parameter space during model training, for example through low-rank reparametrization (e.g., LoRA) or quantization (e.g., QLoRA). In this paper, we present MCNC, a novel model compression method that constrains the parameter space to low-dimensional, pre-defined, and frozen nonlinear manifolds that effectively cover this space. Given the prevalence of good solutions in over-parameterized deep neural networks, we show that constraining the parameter space to our proposed manifold allows us to identify high-quality solutions while achieving unprecedented compression rates across a wide variety of tasks. Through extensive experiments on computer vision and natural language processing tasks, we demonstrate that MCNC significantly outperforms state-of-the-art baselines in terms of compression, accuracy, and/or model reconstruction time.
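To make the idea of a frozen nonlinear manifold concrete, the following is a minimal sketch and not the authors' implementation. It assumes the manifold is the image of a small random generator that is never trained, and that the weights are split into fixed-size chunks, each described by a low-dimensional code. The sine activation, the chunk size `d`, the code size `k`, and the names `make_generator` and `reconstruct` are all hypothetical choices made for illustration.

```python
import numpy as np


def make_generator(k, d, hidden=64, seed=0):
    """Build a frozen random map from R^k to R^d.

    Hypothetical stand-in for a pre-defined nonlinear manifold: the
    generator weights come from a seed and are never trained or stored.
    """
    rng = np.random.default_rng(seed)
    w1 = rng.normal(size=(k, hidden))
    w2 = rng.normal(size=(hidden, d)) / np.sqrt(hidden)

    def generator(alpha):
        # alpha has shape (n_chunks, k); the output has shape (n_chunks, d).
        return np.sin(alpha @ w1) @ w2

    return generator


def reconstruct(alphas, k, d, seed=0):
    """Rebuild a flat weight vector from per-chunk codes and the seed."""
    gen = make_generator(k, d, seed=seed)
    return gen(alphas).reshape(-1)


# Assumed sizes: 100 chunks of 256 weights, each described by 8 numbers.
k, d, n_chunks = 8, 256, 100
alphas = np.random.default_rng(1).normal(size=(n_chunks, k))

weights = reconstruct(alphas, k, d)
compression_ratio = weights.size / alphas.size
print(weights.shape, compression_ratio)
```

In this sketch only `alphas` and the seed would need to be stored or transmitted; during training, gradients would flow through the frozen generator into `alphas` alone, so the compression ratio is set by `d / k`.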
