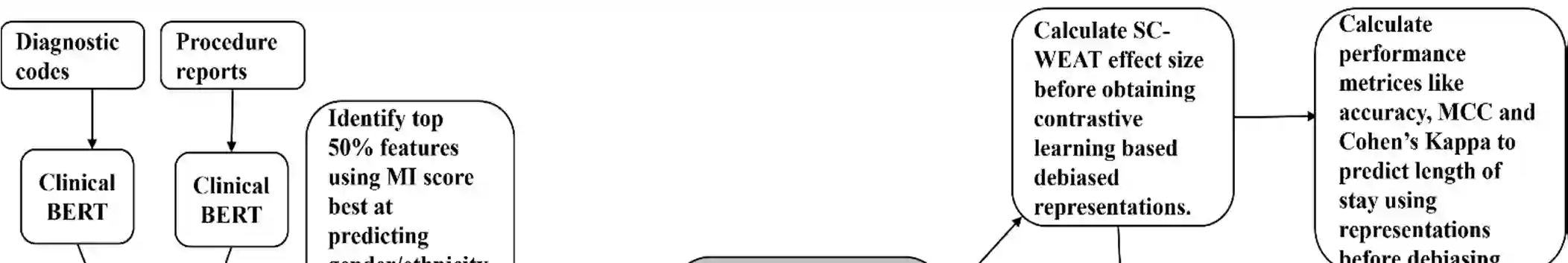

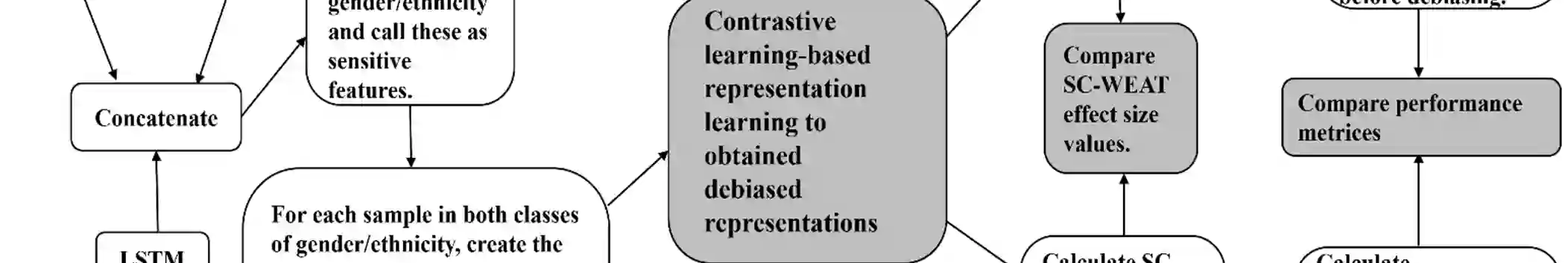

Predictive models based on artificial intelligence and trained on clinical notes can be demographically biased. This can lead to adverse healthcare disparities when predicting outcomes such as patients' length of stay. It is therefore necessary to mitigate the demographic biases within these models. We proposed an implicit in-processing debiasing method to combat disparate treatment, which occurs when a machine learning model predicts different outcomes for individuals based on sensitive attributes such as gender, ethnicity, and race. For this purpose, we used the clinical notes of heart failure patients, drawing on their diagnostic codes, procedure reports, and physiological vitals. We used Clinical BERT to obtain feature embeddings from the diagnostic codes and procedure reports, and LSTM autoencoders to obtain feature embeddings from the physiological vitals. We then trained two separate deep contrastive learning frameworks, one for gender and one for ethnicity, to obtain representations debiased with respect to those demographic traits. We called this debiasing framework Debias-CLR. To measure fairness statistically, we leveraged the clinical phenotypes of the patients identified in the diagnostic codes and procedure reports in the previous study. We found that Debias-CLR reduced the Single-Category Word Embedding Association Test (SC-WEAT) effect size when debiasing for gender and ethnicity. We further found that obtaining fair representations in the embedding space with Debias-CLR did not reduce the accuracy of predictive models on downstream tasks, such as predicting patients' length of stay, compared with models trained on the un-debiased representations. Hence, we conclude that our proposed approach, Debias-CLR, is fair and representative in mitigating demographic biases and can reduce health disparities.
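
As a rough illustration of the fairness metric named above, the SC-WEAT effect size for a single target embedding can be sketched as follows. The sketch uses the commonly cited definition: the difference between the target's mean cosine similarity to two attribute sets, divided by the standard deviation of its similarities to all attribute vectors. The function names, the choice of the sample standard deviation (`ddof=1`), and the toy vectors are assumptions made for illustration. This is not the implementation used for Debias-CLR.

```python
import numpy as np


def cosine(u, v):
    """Cosine similarity between two 1-D vectors."""
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


def sc_weat_effect_size(target, attrs_a, attrs_b):
    """SC-WEAT effect size of one target embedding w.r.t. attribute sets A and B.

    target  : embedding of the concept being tested (hypothetically, a phenotype)
    attrs_a : embeddings for one demographic group (hypothetical example data)
    attrs_b : embeddings for the other demographic group
    """
    sims_a = np.array([cosine(target, a) for a in attrs_a])
    sims_b = np.array([cosine(target, b) for b in attrs_b])
    pooled = np.concatenate([sims_a, sims_b])
    # Assumption: sample standard deviation over all attribute similarities.
    return float((sims_a.mean() - sims_b.mean()) / pooled.std(ddof=1))


# Toy 2-D example: the target lies closer to group A than to group B.
target = [1.0, 0.0]
group_a = [[1.0, 0.0], [1.0, 0.1]]
group_b = [[0.0, 1.0], [0.1, 1.0]]
effect = sc_weat_effect_size(target, group_a, group_b)
```

Under this definition, a value near zero indicates that the target is about equally associated with both groups, so a debiasing method aims to shrink the magnitude of the score.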
