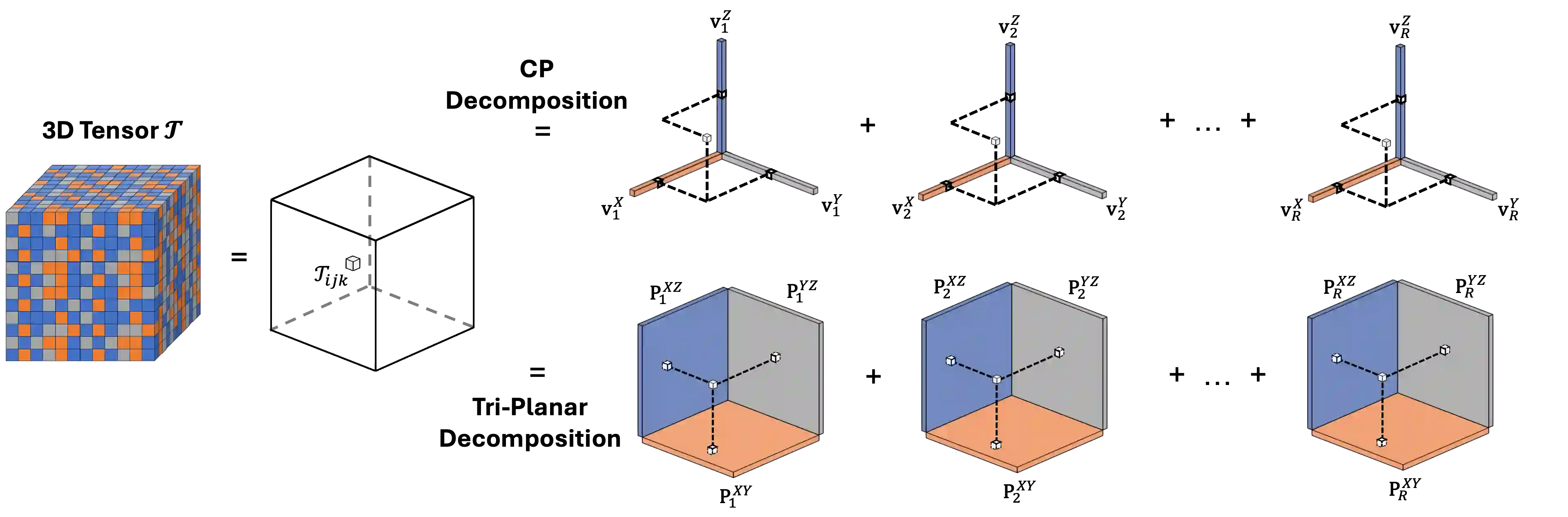

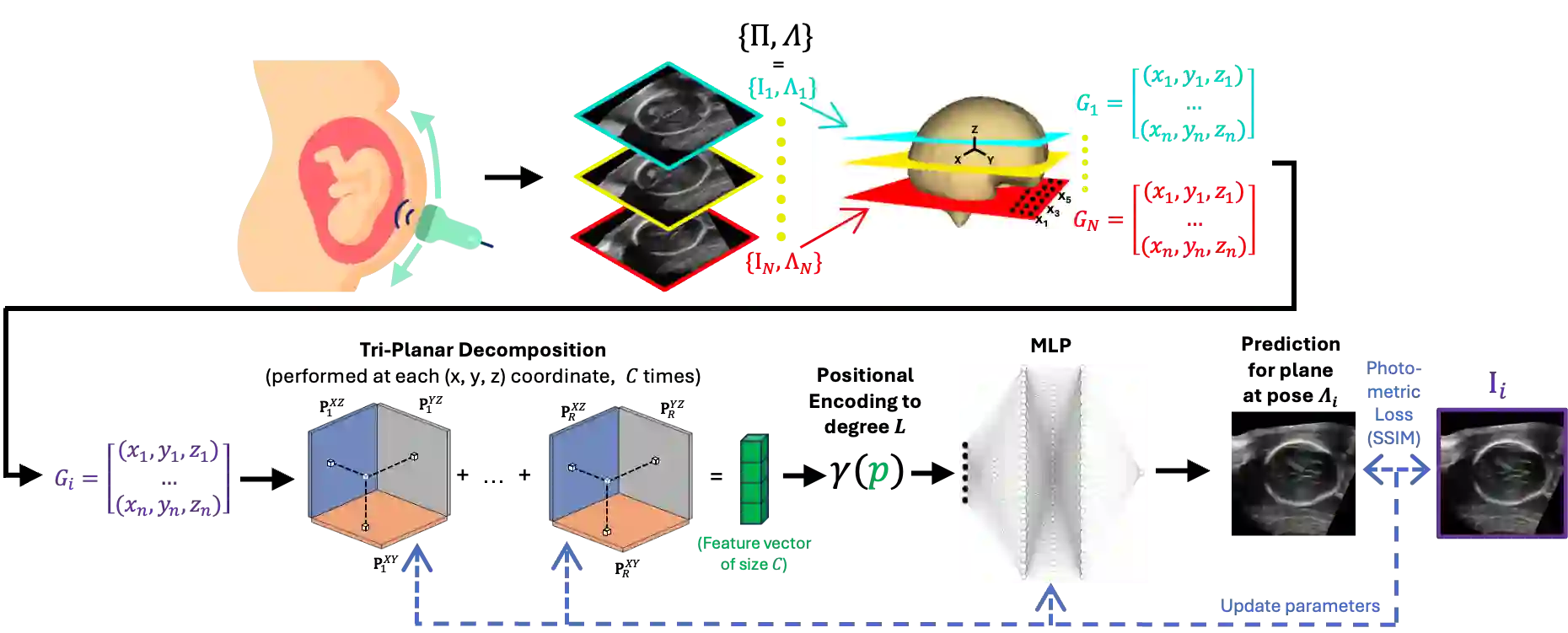

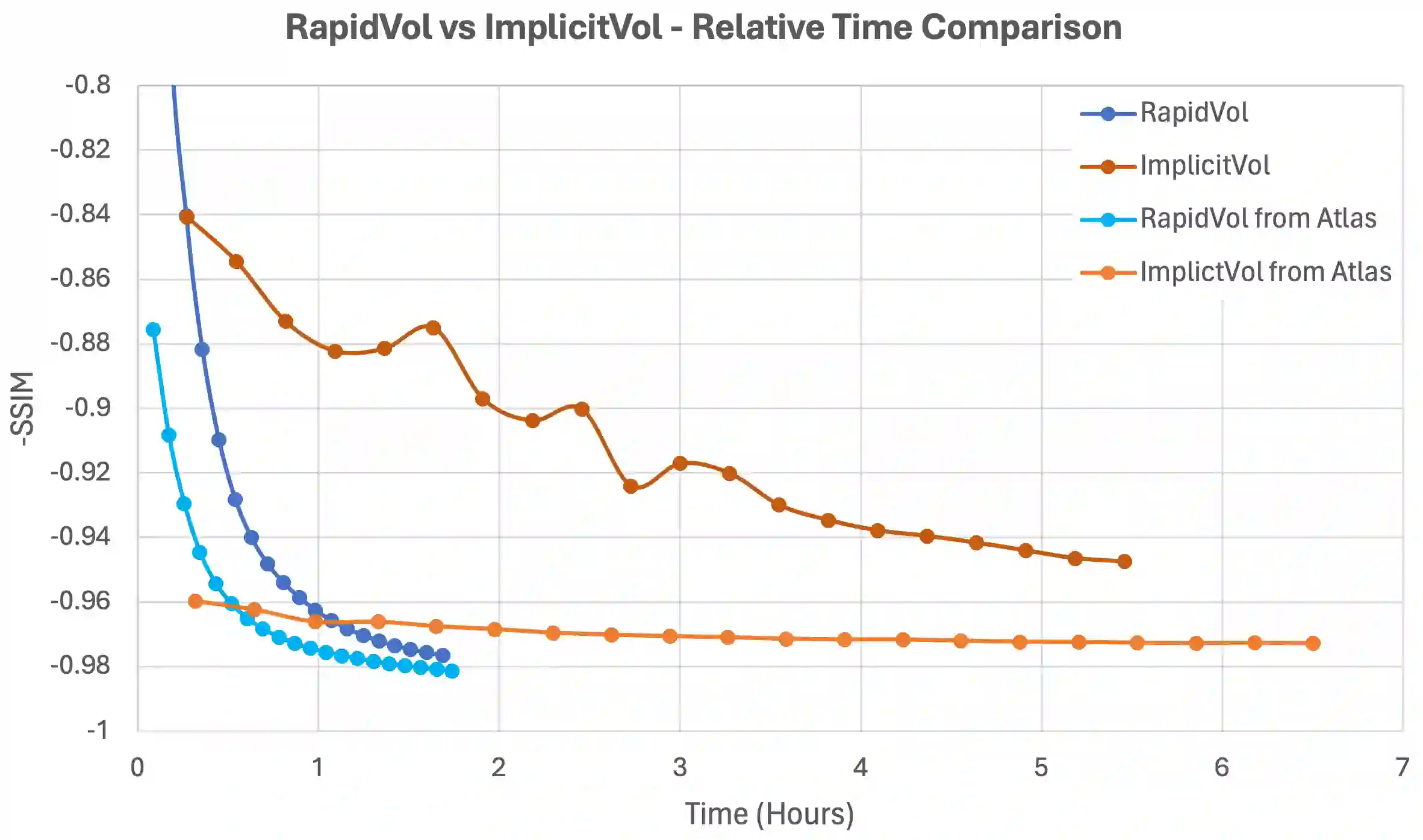

Two-dimensional (2D) freehand ultrasonography is one of the most commonly used medical imaging modalities, particularly in obstetrics and gynaecology. However, it captures only 2D cross-sectional views of inherently 3D anatomies, losing valuable contextual information. As an alternative to costly and complex 3D ultrasound scanners, 3D volumes can be reconstructed from 2D scans using machine learning, but this usually requires long computation times. Here, we propose RapidVol: a neural representation framework to speed up slice-to-volume ultrasound reconstruction. We use tensor-rank decomposition to decompose the typical 3D volume into sets of tri-planes, and store those, together with a small neural network, instead of the full volume. A set of 2D ultrasound scans, with their ground-truth (or estimated) 3D position and orientation (pose), is all that is required to form a complete 3D reconstruction. Reconstructions are formed from real fetal brain scans and then evaluated by requesting novel cross-sectional views. Compared to prior approaches based on fully implicit representations (e.g. neural radiance fields), our method is over 3x quicker, 46% more accurate, and more robust when given inaccurate poses. Further speed-up is also possible by reconstructing from a structural prior rather than from scratch.
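
To make the tri-plane idea concrete, the following is a minimal NumPy sketch of how a cross-sectional view might be rendered from sets of tri-planes and a small network. It is an illustration under stated assumptions, not the authors' implementation: the abstract does not say how plane features are combined, so the element-wise product across the three planes and the sum over sets (in the spirit of a rank decomposition) are assumptions, as are all function names, the plane resolution, the channel count, and the MLP shape. Weights are random here; in practice they would be fitted to the posed 2D scans.

```python
import numpy as np

def bilinear_sample(plane, u, v):
    """Bilinearly sample a (H, W, C) feature plane at coordinates u, v in [-1, 1]."""
    H, W, _ = plane.shape
    # Map [-1, 1] to continuous pixel indices.
    i = (u + 1.0) * 0.5 * (H - 1)
    j = (v + 1.0) * 0.5 * (W - 1)
    i0 = np.clip(np.floor(i).astype(int), 0, H - 2)
    j0 = np.clip(np.floor(j).astype(int), 0, W - 2)
    # Fractional offsets; clipping clamps out-of-range points to the border.
    di = np.clip(i - i0, 0.0, 1.0)[:, None]
    dj = np.clip(j - j0, 0.0, 1.0)[:, None]
    return ((1 - di) * (1 - dj) * plane[i0, j0]
            + (1 - di) * dj * plane[i0, j0 + 1]
            + di * (1 - dj) * plane[i0 + 1, j0]
            + di * dj * plane[i0 + 1, j0 + 1])

def query_triplanes(planes, points):
    """Return (N, C) features for (N, 3) points in [-1, 1]^3.

    `planes` is a list of (xy, xz, yz) triples. Assumption: features are
    multiplied across the three planes and summed over the sets.
    """
    x, y, z = points[:, 0], points[:, 1], points[:, 2]
    feats = 0.0
    for xy, xz, yz in planes:
        feats = feats + (bilinear_sample(xy, x, y)
                         * bilinear_sample(xz, x, z)
                         * bilinear_sample(yz, y, z))
    return feats

def tiny_mlp(feats, w1, b1, w2, b2):
    """One hidden ReLU layer, then a sigmoid to squash intensities into [0, 1]."""
    hidden = np.maximum(feats @ w1 + b1, 0.0)
    return 1.0 / (1.0 + np.exp(-(hidden @ w2 + b2)))

def slice_points(rotation, translation, size):
    """3D coordinates of a size x size planar slice placed by a pose."""
    lin = np.linspace(-1.0, 1.0, size)
    u, v = np.meshgrid(lin, lin, indexing="ij")
    # The slice lies in the local z = 0 plane before the pose is applied.
    local = np.stack([u.ravel(), v.ravel(), np.zeros(size * size)], axis=1)
    return local @ rotation.T + translation

def render_slice(planes, mlp_params, rotation, translation, size):
    """Render one cross-sectional view at the requested pose."""
    pts = slice_points(rotation, translation, size)
    feats = query_triplanes(planes, pts)
    return tiny_mlp(feats, *mlp_params).reshape(size, size)

# Hypothetical sizes: 8 feature channels, 32 x 32 planes, 2 tri-plane sets.
rng = np.random.default_rng(0)
C, R, S = 8, 32, 2
planes = [tuple(rng.normal(size=(R, R, C)) for _ in range(3)) for _ in range(S)]
mlp_params = (rng.normal(size=(C, 16)), np.zeros(16),
              rng.normal(size=(16, 1)), np.zeros(1))

image = render_slice(planes, mlp_params, np.eye(3), np.zeros(3), size=64)
print(image.shape)  # (64, 64)
```

Storing planes instead of a dense grid means the parameter count grows with the square of the resolution rather than the cube, which is one plausible reason such a representation is faster to optimise. Fitting the planes and the network to real scans (for example by minimising the difference between rendered and acquired slices) is not shown.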
