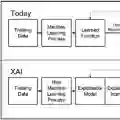

Feature attributions are post-training analysis methods that assess how the input features of a machine learning model contribute to an output prediction. Their interpretation is straightforward when features act independently, but it becomes less clear when the predictive model involves interactions, such as multiplicative relationships or joint feature contributions. In this work, we propose a general theory of higher-order feature attribution, which we develop on the foundation of Integrated Gradients (IG). This work extends existing frameworks in the literature on explainable AI. When using IG as the method of feature attribution, we discover natural connections to statistics and topological signal processing. We provide several results that establish the theory, and we validate it on a few examples.
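
To make the difficulty with interactions concrete, the following is a minimal sketch of standard first-order Integrated Gradients applied to a purely multiplicative toy model, f(x) = x1 * x2. It is not the higher-order method proposed in this work. The function names, the zero baseline, the input point, and the midpoint Riemann-sum approximation are all illustrative assumptions.

```python
import numpy as np


def integrated_gradients(grad_f, x, baseline, steps=1000):
    """Midpoint Riemann-sum approximation of first-order Integrated Gradients.

    grad_f is a (hypothetical) callable returning the gradient of the model
    at a point; x and baseline are 1-D arrays of the same length.
    """
    x = np.asarray(x, dtype=float)
    baseline = np.asarray(baseline, dtype=float)
    # Midpoints of `steps` equal subintervals of [0, 1].
    alphas = (np.arange(steps) + 0.5) / steps
    # Points on the straight-line path from baseline to x, shape (steps, d).
    path = baseline + alphas[:, None] * (x - baseline)
    # Average gradient along the path, shape (d,).
    avg_grad = np.mean([grad_f(p) for p in path], axis=0)
    return (x - baseline) * avg_grad


def f(x):
    # Toy model with a pure multiplicative interaction (illustrative only).
    return x[0] * x[1]


def grad_f(x):
    # Analytic gradient of f.
    return np.array([x[1], x[0]])


x = np.array([2.0, 3.0])      # assumed input point
baseline = np.zeros(2)        # assumed all-zeros baseline
attributions = integrated_gradients(grad_f, x, baseline)

print("attributions:", attributions)
print("sum of attributions:", attributions.sum())
print("f(x) - f(baseline):", f(x) - f(baseline))
```

Under these assumptions, each feature receives an attribution of about 3, and the two attributions sum to f(x) - f(baseline) = 6. The product term is split evenly between the two features, so the first-order attributions alone do not show that the prediction arises entirely from their interaction. This is the kind of ambiguity that motivates a higher-order treatment.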
