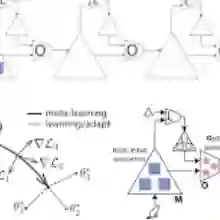

Inspired by the human learning and memory system, particularly the interplay between the hippocampus and the cerebral cortex, this study proposes a dual-learner framework comprising a fast learner and a meta learner to address continual reinforcement learning (RL) problems. The two learners are coupled to play distinct yet complementary roles: the fast learner focuses on knowledge transfer, while the meta learner ensures knowledge integration. In contrast to traditional multi-task RL approaches that share knowledge by maximizing the average return, our meta learner incrementally integrates new experiences by explicitly minimizing catastrophic forgetting, thereby supporting efficient cumulative knowledge transfer to the fast learner. To facilitate rapid adaptation in new environments, we introduce an adaptive meta warm-up mechanism that selectively harnesses past knowledge. We conduct experiments on a range of pixel-based and continuous-control benchmarks, where our dual-learner approach outperforms baseline methods in continual learning. The code is available at https://github.com/datake/FAME.
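The abstract describes the dual-learner loop only at a high level. As a rough illustration, the sketch below assumes a toy setting of one-step tabular tasks (one reward table per task) and uses hypothetical helpers (`train_fast`, `distill_meta`, `meta_warm_up`, `continual_learning`). In it, the fast learner runs policy-gradient ascent on the current task. The meta learner is fit by cross-entropy distillation onto every stored fast-learner policy, which stands in for "explicitly minimizing catastrophic forgetting". The warm-up starts from the meta learner only when it beats a fresh uniform policy on the new task. This is not the FAME implementation, and the paper's actual losses and warm-up criterion may differ.

```python
import math

# Hypothetical toy setting: S states, A actions, each task is a reward table r[s][a].
# A policy is a table of logits with pi(a|s) = softmax(logits[s])[a].

def softmax(row):
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    z = sum(exps)
    return [e / z for e in exps]

def expected_return(logits, rewards):
    """Average over states of E_{a ~ pi(.|s)}[r(s, a)]."""
    total = 0.0
    for row, r in zip(logits, rewards):
        p = softmax(row)
        total += sum(pa * ra for pa, ra in zip(p, r))
    return total / len(rewards)

def train_fast(logits, rewards, steps=200, lr=1.0):
    """Fast learner (sketch): ascent along the exact softmax policy gradient of the
    current task's return (the constant 1/S factor is folded into lr)."""
    logits = [row[:] for row in logits]
    for _ in range(steps):
        for s, r in enumerate(rewards):
            p = softmax(logits[s])
            baseline = sum(pa * ra for pa, ra in zip(p, r))
            for a in range(len(r)):
                logits[s][a] += lr * p[a] * (r[a] - baseline)
    return logits

def distill_meta(meta, teachers, steps=300, lr=0.5):
    """Meta learner (assumed objective): minimize the mean cross-entropy to every
    stored per-task policy, so no past task's behaviour is dropped."""
    meta = [row[:] for row in meta]
    num_actions = len(meta[0])
    targets = []  # mean teacher distribution per state
    for s in range(len(meta)):
        dists = [softmax(t[s]) for t in teachers]
        targets.append([sum(d[a] for d in dists) / len(dists) for a in range(num_actions)])
    for _ in range(steps):
        for s, target in enumerate(targets):
            q = softmax(meta[s])
            for a in range(num_actions):
                # gradient of the mean cross-entropy w.r.t. logits is q - mean(p_k)
                meta[s][a] -= lr * (q[a] - target[a])
    return meta

def meta_warm_up(meta, rewards, num_states, num_actions):
    """Hypothetical adaptive warm-up rule: reuse the meta learner only if it
    already beats a fresh uniform policy on the new task."""
    fresh = [[0.0] * num_actions for _ in range(num_states)]
    if expected_return(meta, rewards) > expected_return(fresh, rewards):
        return meta, "meta"
    return fresh, "fresh"

def continual_learning(tasks, num_states, num_actions):
    meta = [[0.0] * num_actions for _ in range(num_states)]
    teachers, choices = [], []
    for rewards in tasks:
        init, choice = meta_warm_up(meta, rewards, num_states, num_actions)
        choices.append(choice)
        fast = train_fast(init, rewards)      # knowledge transfer on the new task
        teachers.append(fast)
        meta = distill_meta(meta, teachers)   # knowledge integration across tasks
    return meta, teachers, choices

TASK_A = [[1.0, 0.0], [1.0, 0.0]]  # action 0 is rewarded in both states
TASK_C = [[0.0, 1.0], [0.0, 1.0]]  # the opposite preference
meta, teachers, choices = continual_learning([TASK_A, TASK_A, TASK_C], 2, 2)
print(choices)
print(round(expected_return(meta, TASK_A), 3), round(expected_return(meta, TASK_C), 3))
```

In this run the warm-up reuses the meta learner for the repeated task and starts fresh for the conflicting one. The final meta policy keeps about a two-thirds/one-third split between the two behaviours, while the last fast learner on its own has almost entirely forgotten `TASK_A`. Maximizing the average return over the three tasks would instead push the meta policy toward always choosing action 0, which earns zero return on `TASK_C`. This mirrors, in toy form, the contrast the abstract draws with average-return multi-task RL.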
