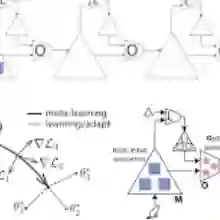

Foundation models are stateless, which bottlenecks the ability of agentic systems to learn continually, a core capability for long-horizon reasoning and adaptation. To address this limitation, agentic systems commonly incorporate memory modules that retain and reuse past experience, aiming for continual learning at test time. However, most existing memory designs are human-crafted and fixed, which limits their ability to adapt to the diversity and non-stationarity of real-world tasks. In this paper, we introduce ALMA (Automated meta-Learning of Memory designs for Agentic systems), a framework that meta-learns memory designs to replace hand-engineered ones, thereby minimizing human effort and enabling agentic systems to be continual learners across diverse domains. Our approach employs a Meta Agent that searches, in an open-ended manner, over memory designs expressed as executable code, which in principle allows the discovery of arbitrary memory designs, including database schemas as well as their retrieval and update mechanisms. Extensive experiments across four sequential decision-making domains demonstrate that the learned memory designs enable more effective and efficient learning from experience than state-of-the-art human-crafted memory designs on all benchmarks. When developed and deployed safely, ALMA represents a step toward self-improving AI systems that learn to be adaptive, continual learners.

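To make the idea of "memory designs expressed as executable code" more concrete, the following is a minimal sketch, not ALMA's actual implementation. All names (`Episode`, `MemoryDesign`, `KeywordMemory`, `evaluate`, `search`) are hypothetical and chosen only for illustration. It assumes a memory design can be reduced to two methods, `update` and `retrieve`, and it replaces the Meta Agent with a fixed dictionary of candidate designs scored by a toy metric; the abstract describes an open-ended search over code, which this sketch does not attempt.

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from dataclasses import dataclass, field


@dataclass
class Episode:
    """One past experience: the task, what the agent did, and whether it worked."""
    task: str
    actions: list[str]
    success: bool


class MemoryDesign(ABC):
    """Hypothetical interface: a design bundles storage with update and retrieval logic."""

    @abstractmethod
    def update(self, episode: Episode) -> None: ...

    @abstractmethod
    def retrieve(self, task: str, k: int = 1) -> list[Episode]: ...


@dataclass
class KeywordMemory(MemoryDesign):
    """Toy design: keep episodes in a list and retrieve by word overlap with the task."""
    successes_only: bool = False
    episodes: list[Episode] = field(default_factory=list)

    def update(self, episode: Episode) -> None:
        # Optionally discard failed episodes so they cannot be recalled later.
        if self.successes_only and not episode.success:
            return
        self.episodes.append(episode)

    def retrieve(self, task: str, k: int = 1) -> list[Episode]:
        query = set(task.lower().split())
        ranked = sorted(
            self.episodes,
            key=lambda e: len(query & set(e.task.lower().split())),
            reverse=True,
        )
        return ranked[:k]


def evaluate(design: MemoryDesign, stream: list[Episode]) -> float:
    """Toy metric (an assumption): fraction of tasks for which the memory
    recalls a successful episode of the same task before the task is attempted."""
    hits = 0
    for episode in stream:
        recalled = design.retrieve(episode.task, k=1)
        if recalled and recalled[0].success and recalled[0].task == episode.task:
            hits += 1
        design.update(episode)  # learn from the new experience afterwards
    return hits / len(stream)


def search(candidates: dict[str, MemoryDesign], stream: list[Episode]) -> tuple[str, float]:
    """Stand-in for the Meta Agent: score each candidate design and keep the best."""
    scores = {name: evaluate(design, stream) for name, design in candidates.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


stream = [
    Episode("open the red door", ["push"], False),
    Episode("open the red door", ["find key", "unlock"], True),
    Episode("open the red door", ["find key", "unlock"], True),
    Episode("cook an egg", ["boil water", "add egg"], True),
    Episode("cook an egg", ["boil water", "add egg"], True),
]

candidates = {
    "store_everything": KeywordMemory(successes_only=False),
    "successes_only": KeywordMemory(successes_only=True),
}

best_name, best_score = search(candidates, stream)
print(best_name, best_score)
```

In this toy stream, the design that stores only successful episodes scores higher, because the other design recalls the earlier failed attempt first. The point is only that storage, update, and retrieval choices are ordinary code that can be varied and scored automatically.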