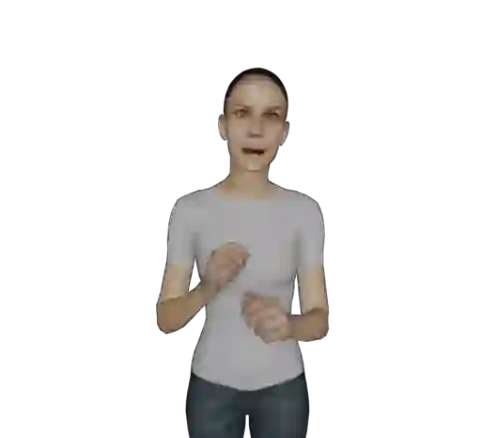

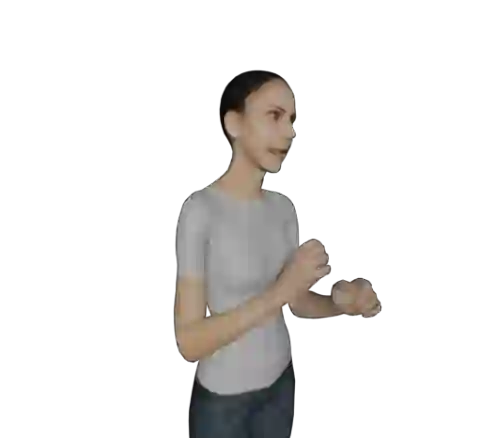

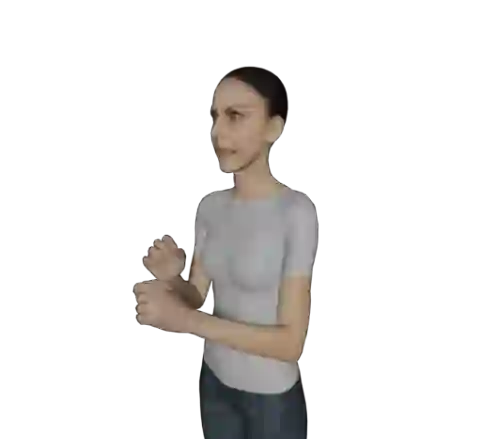

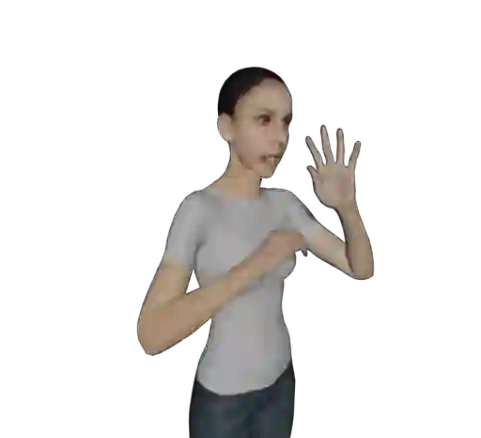

The objective of this paper is to develop a functional system for translating spoken languages into sign languages, referred to as Spoken2Sign translation. The Spoken2Sign task is orthogonal and complementary to traditional sign language to spoken language (Sign2Spoken) translation. To enable Spoken2Sign translation, we present a simple baseline consisting of three steps: 1) creating a gloss-video dictionary using existing Sign2Spoken benchmarks; 2) estimating a 3D sign for each sign video in the dictionary; 3) training a Spoken2Sign model, composed of a Text2Gloss translator, a sign connector, and a rendering module, with the aid of the resulting gloss-3D sign dictionary. The translation results are then displayed through a sign avatar. To our knowledge, we are the first to present the Spoken2Sign task with 3D signs as the output format. Beyond Spoken2Sign translation itself, we also demonstrate that two by-products of our approach, 3D keypoint augmentation and multi-view understanding, can assist keypoint-based sign language understanding. Code and models are available at https://github.com/FangyunWei/SLRT.
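To make the data flow of the three-step baseline concrete, the following is a minimal illustrative sketch and not the released implementation. All names (`spoken2sign`, `connect`, `text2gloss`, `gloss_to_sign`) are hypothetical, a 3D sign is assumed to be a list of flattened pose vectors, and the sign connector is replaced here by plain linear interpolation over a fixed number of transition frames. Rendering through an avatar is omitted.

```python
from typing import Callable, Dict, List

Frame = List[float]   # one 3D pose, flattened to a parameter vector (assumption)
Sign = List[Frame]    # one 3D sign: a sequence of poses


def connect(prev: Sign, nxt: Sign, n_transition: int = 2) -> Sign:
    """Stand-in for a sign connector: linearly interpolate between the
    last frame of `prev` and the first frame of `nxt`."""
    start, end = prev[-1], nxt[0]
    frames: Sign = []
    for i in range(1, n_transition + 1):
        t = i / (n_transition + 1)
        frames.append([(1 - t) * a + t * b for a, b in zip(start, end)])
    return frames


def spoken2sign(
    text: str,
    text2gloss: Callable[[str], List[str]],
    gloss_to_sign: Dict[str, Sign],
    n_transition: int = 2,
) -> Sign:
    """Text -> glosses -> dictionary lookup -> connected 3D motion."""
    glosses = text2gloss(text)
    # Glosses missing from the dictionary are skipped in this sketch.
    signs = [gloss_to_sign[g] for g in glosses if g in gloss_to_sign]
    if not signs:
        return []
    motion: Sign = list(signs[0])
    for prev, nxt in zip(signs, signs[1:]):
        motion.extend(connect(prev, nxt, n_transition))
        motion.extend(nxt)
    return motion


if __name__ == "__main__":
    # Toy gloss-3D sign dictionary with one-dimensional "poses".
    toy_dictionary = {"HELLO": [[0.0], [1.0]], "WORLD": [[4.0], [5.0]]}
    toy_translator = lambda s: s.upper().split()  # placeholder for Text2Gloss
    print(spoken2sign("hello world", toy_translator, toy_dictionary))
```

In this sketch the dictionary lookup plays the role of the gloss-3D sign dictionary, and the interpolated frames fill the gap between consecutive signs; a learned translator and connector would replace the two placeholders.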
